Image filter (Rollup) - chung-leong/zigar GitHub Wiki

In this example we're going to export an image filter to a JavaScript file that we can link into any HTML page.

This example makes use of WebAssembly threads. As support in Zig 0.15.2 is still immature, you'll need to patch the standard library before continuing.

We first initialize the Node project and install the necessary modules:

mkdir filter

cd filter

npm init -y

npm install --save-dev rollup rollup-plugin-zigar @rollup/plugin-terser @rollup/plugin-node-resolve http-server

mkdir src zig

Download sepia.zig into the zig sub-directory.

The code in question was translated from a Pixel Bender filter using pb2zig. Consult the intro page for an explanation of how it works.

Instead of exposing a Zig function directly as we've done in the previous example, we're going to employ an intermediate JavaScript file that isolates the Zig stuff from the consumer of the code. Create sepia.js in src:

import { createOutput } from '../zig/sepia.zig';

export async function createImageData(src, params) {

const { width, height } = src;

const { dst } = await createOutput(width, height, { src }, params);

return new ImageData(dst.data, width, height);

}

The Zig function createOutput() has the following declaration:

pub fn createOutput(

allocator: std.mem.Allocator,

width: u32,

height: u32,

input: Input,

params: Parameters,

) !Output

allocator is automatically provided by Zigar. We get width and height from the input image, making the assumption that the output image has the same dimensions. params contains a single f32: intensity. We get this from the caller along with the input image.

Input is a parameterized type:

pub const Input = KernelInput(u8, kernel);

Which expands to:

pub const Input = struct {

src: Image(u8, 4, false),

};

Then further to:

pub const Input = struct {

src: struct {

pub const Pixel = @Vector(4, u8);

pub const FPixel = @Vector(4, f32);

pub const channels = 4;

data: []const Pixel,

width: u32,

height: u32,

colorSpace: ColorSpace = .srgb,

offset: usize = 0,

};

};

Image was purposely defined so that it is compatible with the browser's ImageData. Its data field is []const @Vector(4, u8), a slice pointer that accepts a Uint8ClampedArray as target without casting. We can therefore simply pass { src } to createOutput as input.
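To make the layout concrete, here is a minimal sketch of an object with the same three properties as ImageData, which can be handy for experimenting outside a browser. `makeImageLike` and `pixelOffset` are hypothetical helpers and not part of Zigar or pb2zig. The sketch assumes four 8-bit channels per pixel, as in the struct above, and only illustrates the memory layout:

```javascript
// Hypothetical helper (not part of Zigar or pb2zig): builds an object with the
// same layout as ImageData, i.e. RGBA bytes stored row by row, 4 bytes per pixel.
function makeImageLike(width, height, fill = [0, 0, 0, 255]) {
  const data = new Uint8ClampedArray(width * height * 4);
  for (let i = 0; i < data.length; i += 4) {
    data.set(fill, i);
  }
  return { data, width, height };
}

// Byte offset of the pixel at (x, y). This corresponds to element index
// y * width + x in a []const @Vector(4, u8) slice.
function pixelOffset(image, x, y) {
  return (y * image.width + x) * 4;
}

const sample = makeImageLike(150, 100, [255, 128, 0, 255]);
console.log(sample.data.length);        // 60000
console.log(pixelOffset(sample, 2, 1)); // 608
```

Each group of four bytes in `data` corresponds to one `Pixel` vector on the Zig side.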

Like Input, Output is a parameterized type. It too can potentially contain multiple images. In this case (and most cases), there's only one:

pub const Output = struct {

dst: struct {

pub const Pixel = @Vector(4, u8);

pub const FPixel = @Vector(4, f32);

pub const channels = 4;

data: []Pixel,

width: u32,

height: u32,

colorSpace: ColorSpace = .srgb,

offset: usize = 0,

},

};

dst.data points to memory allocated from allocator. Normally, the field would be represented by a Zigar object on the JavaScript side. In sepia.zig however, there's a meta-type declaration that changes this:

pub const @"meta(zigar)" = struct {

pub fn isFieldClampedArray(comptime T: type, comptime name: std.meta.FieldEnum(T)) bool {

if (@hasDecl(T, "Pixel")) {

// make field `data` clamped array if output pixel type is u8

if (@typeInfo(T.Pixel).vector.child == u8) {

return name == .data;

}

}

return false;

}

pub fn isFieldTypedArray(comptime T: type, comptime name: std.meta.FieldEnum(T)) bool {

if (@hasDecl(T, "Pixel")) {

// make field `data` typed array (if pixel value is not u8)

return name == .data;

}

return false;

}

pub fn isDeclPlain(comptime T: type, comptime _: std.meta.DeclEnum(T)) bool {

// make return value plain objects

return true;

}

};

During the export process, when Zigar encounters a field consisting of u8's, it'll call isFieldClampedArray() to see if you want it to be a Uint8ClampedArray. If the function does not exist or returns false, it'll try isFieldTypedArray() next, which would make the field a regular Uint8Array.
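The practical difference between the two array types shows up when out-of-range values are written. The following illustration uses only standard JavaScript and is independent of Zigar:

```javascript
// The same out-of-range values stored in both array types.
const values = [300, -5, 127];

const clamped = new Uint8ClampedArray(values); // saturates to the 0..255 range
const wrapped = new Uint8Array(values);        // wraps around modulo 256

console.log(Array.from(clamped)); // [ 255, 0, 127 ]
console.log(Array.from(wrapped)); // [ 44, 251, 127 ]
```

The browser's ImageData constructor expects the clamped variant, which is why the distinction matters for this example.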

Meanwhile, isDeclPlain() makes the output of all exported functions plain JavaScript objects. The end result is that we get something like this from createOutput():

{

dst: {

data: Uint8ClampedArray(60000) [ ... ],

width: 150,

height: 100,

colorSpace: 'srgb'

}

}

Which we can then use to create a new image:

return new ImageData(dst.data, width, height);

Without this metadata we would need to do this:

return new ImageData(dst.data.clampedArray, width, height);

Although createOutput() is a synchronous function, we need to use await because it's possible that the Zig module's WebAssembly code wouldn't have been compiled yet. The function would return a promise in that case.
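This pattern works because await accepts plain values as well as promises. The sketch below imitates the behavior with a stand-in function. `double`, `ready`, and `compiled` are hypothetical names used only for illustration and have nothing to do with Zigar's internals:

```javascript
// Stand-in for an exported function (hypothetical, for illustration only):
// it returns a promise until `ready` flips, and a plain value afterwards.
let ready = false;
const compiled = new Promise((resolve) => {
  setTimeout(() => { ready = true; resolve(); }, 10);
});

function double(x) {
  return ready ? x * 2 : compiled.then(() => x * 2);
}

async function demo() {
  const first = await double(21);  // a promise: "compilation" is still pending
  const second = await double(21); // a plain number: await passes it through
  return [first, second];
}

const demoResult = demo();
demoResult.then((r) => console.log(r)); // [ 42, 42 ]
```

Always awaiting the call keeps the calling code identical in both situations.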

The last piece of the puzzle is the Rollup configuration file:

import nodeResolve from '@rollup/plugin-node-resolve';

import terser from '@rollup/plugin-terser';

import zigar from 'rollup-plugin-zigar';

export default [

{

input: './src/sepia.js',

plugins: [

zigar({

optimize: 'ReleaseSmall',

embedWASM: true,

topLevelAwait: false,

}),

nodeResolve(),

],

output: {

file: './dist/sepia.js',

format: 'umd',

exports: 'named',

name: 'Sepia',

plugins: [

terser(),

]

},

},

];

We set embedWASM to true so end-users of the script only have to deal with one file.

For the output format, we use UMD, allowing our library to be either imported as a CommonJS module or linked into an HTML page via a <script> tag. Since UMD modules cannot perform top-level await, we need to set topLevelAwait to false. Zigar normally uses this feature to pause code execution until the WebAssembly code has finished compiling. When it's turned off, Zig functions will return promises initially.

Because we're using ESM syntax, we need to set type to module in our package.json. We'll use the occasion to add a couple of npm run commands as well:

"type": "module",

"scripts": {

"build": "rollup -c rollup.config.js",

"preview": "http-server ./dist"

},

Now we're ready to create the distribution file:

npm run build

To test the library, create index.html in dist:

<!doctype html>

<html lang="en">

<head>

<meta charset="UTF-8" />

<title>Image filter</title>

</head>

<body>

<p>

<h3>Original</h3>

<img id="srcImage" src="./sample.png">

</p>

<p>

<h3>Result</h3>

<canvas id="dstCanvas"></canvas>

</p>

<p>

Intensity: <input id="intensity" type="range" min="0" max="1" step="0.0001" value="0.3">

</p>

</body>

<script src="./sepia.js"></script>

<script>

const srcImage = document.getElementById('srcImage');

const dstCanvas = document.getElementById('dstCanvas');

const intensity = document.getElementById('intensity');

if (srcImage.complete) {

applyFilter();

} else {

srcImage.onload = applyFilter;

}

intensity.oninput = applyFilter;

async function applyFilter() {

const { naturalWidth: width, naturalHeight: height } = srcImage;

const srcCanvas = document.createElement('CANVAS');

srcCanvas.width = width;

srcCanvas.height = height;

const srcCTX = srcCanvas.getContext('2d');

srcCTX.drawImage(srcImage, 0, 0);

const params = { intensity: parseFloat(intensity.value) };

const srcImageData = srcCTX.getImageData(0, 0, width, height);

const dstImageData = await Sepia.createImageData(srcImageData, params);

dstCanvas.width = width;

dstCanvas.height = height;

const dstCTX = dstCanvas.getContext('2d');

dstCTX.putImageData(dstImageData, 0, 0);

}

</script>

</html>

The inline JavaScript code gets the image data from the <img> element, gives it to the function we defined earlier, and draws the output in a canvas. The logic is pretty simple. Just basic web programming.

To avoid cross-origin issues we'll serve the file through an HTTP server. Just run the command

we added earlier to package.json:

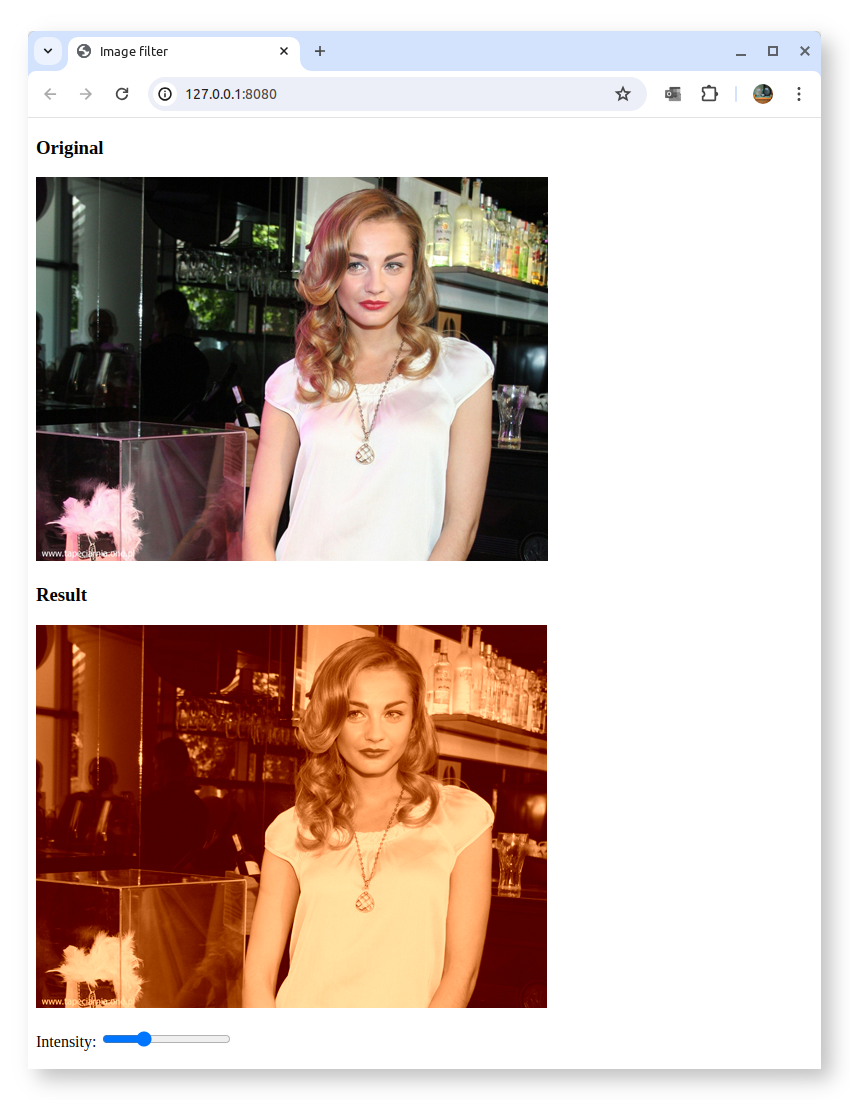

npm run previewOpen the on-screen link and you should see the following:

Modern CPUs typically have more than one core. We can take advantage of the additional computational power by performing data processing in multiple threads. Doing so also means the main thread of the browser won't get blocked, helping to keep the UI responsive.

Multithreading is not enabled by default for WebAssembly. To enable it, add the multithreaded option in rollup.config.js:

zigar({

optimize: 'ReleaseSmall',

embedWASM: true,

topLevelAwait: false,

multithreaded: true,

}),

Then in sepia.js, add two more functions to the import statement:

import { createOutput, createOutputAsync, startThreadPool } from '../zig/sepia.zig';

Add the code for createImageDataAsync():

let poolStarted = false;

export async function createImageDataAsync(src, params) {

if (!poolStarted) {

startThreadPool(navigator.hardwareConcurrency);

poolStarted = true;

}

const { width, height } = src;

const { dst } = await createOutputAsync(width, height, { src }, params);

return new ImageData(dst.data, width, height);

}

Then change the function being called in index.html:

const dstImageData = await Sepia.createImageDataAsync(srcImageData, params);

After rebuilding the code, you'll notice the app no longer works. In the development console you'll find the following message:

Multithreading requires the use of shared memory, a feature available in the browser only when the document is in a secure context and is cross-origin isolated. Two HTTP headers must be set for the latter.

During development, we can tell http-server to provide them:

"scripts": {

// ...

"preview": "http-server ./dist -H 'Cross-Origin-Opener-Policy: same-origin' -H 'Cross-Origin-Embedder-Policy: require-corp'"

},

You must be able to do the same on the web server where the app is eventually deployed in order to make use of multithreading.
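Since the headers can go missing in a deployment, it may help to check for the condition at runtime and report it clearly. The sketch below relies on the standard `crossOriginIsolated` global and on `SharedArrayBuffer`. `canUseThreads` is a hypothetical helper, not something Zigar provides, and the `env` parameter exists only so the check can be exercised outside a browser:

```javascript
// Returns true only when shared memory is usable. In browsers this requires
// the page to be cross-origin isolated, which is what the two headers achieve.
// `env` defaults to globalThis; it is a parameter purely for testability.
function canUseThreads(env = globalThis) {
  return env.crossOriginIsolated === true
      && typeof env.SharedArrayBuffer === 'function';
}

if (!canUseThreads()) {
  console.warn(
    'Shared memory is unavailable: check the Cross-Origin-Opener-Policy ' +
    'and Cross-Origin-Embedder-Policy response headers.'
  );
}
```

Running this before the first call to the filter turns a cryptic console error into an actionable hint.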

After restarting the server, the app will start to work again. The slider won't work very well when you drag it, though. The problem is that mouse movement can generate a great many calls to createOutputAsync(), far more quickly than the computer is able to handle them. We need additional logic that ensures only the most recent settings received from the UI get worked on. Any unfinished work triggered by prior changes should simply be abandoned.

Add the following class to the bottom of sepia.js:

class AsyncTaskManager {

activeTask = null;

async call(cb) {

const controller = (cb?.length > 0) ? new AbortController : null;

const promise = this.perform(cb, controller?.signal);

const thisTask = this.activeTask = { controller, promise };

try {

return await thisTask.promise;

} finally {

if (thisTask === this.activeTask) {

this.activeTask = null;

}

}

}

async perform(cb, signal) {

if (this.activeTask) {

this.activeTask.controller?.abort();

await this.activeTask.promise?.catch(() => {});

if (signal?.aborted) {

// throw error now if the operation was aborted before the function is even called

throw new Error('Aborted');

}

}

return cb?.(signal);

}

}

const atm = new AsyncTaskManager();

The code above creates an AbortController and passes its signal to the callback function. It expects a promise as the return value. If this promise has not settled by the time call() is invoked again, we abort it and await its rejection.
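To see the effect in isolation, the class can be exercised with a stand-in job. In this sketch `slowJob` is hypothetical and merely imitates a call that honors an abort signal. The class is repeated so that the snippet runs on its own, for instance in Node:

```javascript
class AsyncTaskManager {
  activeTask = null;

  async call(cb) {
    const controller = (cb?.length > 0) ? new AbortController() : null;
    const promise = this.perform(cb, controller?.signal);
    const thisTask = this.activeTask = { controller, promise };
    try {
      return await thisTask.promise;
    } finally {
      if (thisTask === this.activeTask) this.activeTask = null;
    }
  }

  async perform(cb, signal) {
    if (this.activeTask) {
      this.activeTask.controller?.abort();
      await this.activeTask.promise?.catch(() => {});
      // superseded before the callback was even invoked
      if (signal?.aborted) throw new Error('Aborted');
    }
    return cb?.(signal);
  }
}

// Hypothetical stand-in for createOutputAsync(): resolves after `ms`
// unless the signal fires first, in which case it rejects with 'Aborted'.
function slowJob(label, ms, signal) {
  return new Promise((resolve, reject) => {
    const timer = setTimeout(() => resolve(label), ms);
    signal.addEventListener('abort', () => {
      clearTimeout(timer);
      reject(new Error('Aborted'));
    }, { once: true });
  });
}

// Three calls in quick succession, as a dragged slider would produce.
const manager = new AsyncTaskManager();
const outcomes = Promise.allSettled([
  manager.call((signal) => slowJob('first', 50, signal)),
  manager.call((signal) => slowJob('second', 50, signal)),
  manager.call((signal) => slowJob('third', 50, signal)),
]);
outcomes.then((list) => console.log(list.map((o) => o.status)));
// [ 'rejected', 'rejected', 'fulfilled' ]
```

The first job is interrupted midway, the second never starts, and only the most recent request runs to completion.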

In createImageDataAsync(), change the call to createOutputAsync:

const { dst } = await atm.call(signal => createOutputAsync(width, height, { src }, params, { signal }));

As an error will get thrown when a call is interrupted, we need to wrap the code of applyFilter() in index.html in a try/catch:

async function applyFilter() {

try {

// ...

const dstImageData = await Sepia.createImageDataAsync(srcImageData, params);

// ...

} catch (err) {

if (err.message !== 'Aborted' ) {

console.error(err);

}

}

}

With this mechanism in place preventing excessive calls, the app should work correctly.

Now, let us examine our Zig code. We'll start with startThreadPool():

pub fn startThreadPool(count: u32) !void {

try work_queue.init(.{

.allocator = internal_allocator,

.stack_size = 65536,

.n_jobs = count,

});

}

work_queue is a struct containing a thread pool and a non-blocking queue. It has the following declaration:

var work_queue: WorkQueue(thread_ns) = .{};

The queue stores requests for function invocation and runs them in separate threads. thread_ns contains public functions that can be used. For this example we only have one:

const thread_ns = struct {

pub fn processSlice(signal: AbortSignal, width: u32, start: u32, count: u32, input: Input, output: Output, params: Parameters) !Output {

var instance = kernel.create(input, output, params);

if (@hasDecl(@TypeOf(instance), "evaluateDependents")) {

instance.evaluateDependents();

}

const end = start + count;

instance.outputCoord[1] = start;

while (instance.outputCoord[1] < end) : (instance.outputCoord[1] += 1) {

instance.outputCoord[0] = 0;

while (instance.outputCoord[0] < width) : (instance.outputCoord[0] += 1) {

instance.evaluatePixel();

if (signal.on()) return error.Aborted;

}

}

return output;

}

};

The logic is pretty straightforward. We initialize an instance of the kernel then loop through all coordinate pairs, running evaluatePixel() for each of them. After each iteration we check the abort signal to see if termination has been requested.

createOutputAsync() pushes multiple processSlice call requests into the work queue to process an image in parallel. Let us first look at its arguments:

pub fn createOutputAsync(allocator: Allocator, promise: Promise, signal: AbortSignal, width: u32, height: u32, input: Input, params: Parameters) !void {

Allocator, Promise, and AbortSignal are special parameters that Zigar provides automatically. On the JavaScript side, the function has only four required arguments. It will also accept a fifth argument: options, which may contain an alternate allocator, a callback function, and an abort signal.

The function starts out by allocating memory for the output struct:

var output: Output = undefined;

// allocate memory for output image

const fields = std.meta.fields(Output);

var allocated: usize = 0;

errdefer inline for (fields, 0..) |field, i| {

if (i < allocated) {

allocator.free(@field(output, field.name).data);

}

};

inline for (fields) |field| {

const ImageT = @TypeOf(@field(output, field.name));

const data = try allocator.alloc(ImageT.Pixel, width * height);

@field(output, field.name) = .{

.data = data,

.width = width,

.height = height,

};

allocated += 1;

}

Then it divides the image into multiple slices. It divides the given Promise struct as well:

// add work units to queue

const workers: u32 = @intCast(@max(1, work_queue.thread_count));

const scanlines: u32 = height / workers;

const slices: u32 = if (scanlines > 0) workers else 1;

const multipart_promise = try promise.partition(internal_allocator, slices);

partition() creates a new promise that fulfills the original promise when its resolve() method has been called a certain number of times. It is used as the output argument for work_queue.push():

var slice_num: u32 = 0;

while (slice_num < slices) : (slice_num += 1) {

const start = scanlines * slice_num;

const count = if (slice_num < slices - 1) scanlines else height - (scanlines * slice_num);

try work_queue.push(thread_ns.processSlice, .{ signal, width, start, count, input, output, params }, multipart_promise);

}

}

The first argument to push() is the function to be invoked. The second is a tuple containing arguments. The third is the output argument. The return value of processSlice(), either the Output struct or error.Aborted, will be fed to this promise's resolve() method. When the last slice has been processed, the promise on the JavaScript side becomes fulfilled.
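The slicing arithmetic is easier to follow with concrete numbers. The snippet below is a JavaScript mirror of the calculation in createOutputAsync(), written for illustration only. `planSlices` is a hypothetical name, and Math.floor stands in for Zig's integer division:

```javascript
// JavaScript mirror of the slicing arithmetic, for illustration only.
function planSlices(height, threadCount) {
  const workers = Math.max(1, threadCount);
  const scanlines = Math.floor(height / workers);
  // fall back to a single slice when there are fewer rows than workers
  const slices = scanlines > 0 ? workers : 1;
  const plan = [];
  for (let i = 0; i < slices; i++) {
    const start = scanlines * i;
    // the last slice picks up the rows left over by the division
    const count = i < slices - 1 ? scanlines : height - start;
    plan.push({ start, count });
  }
  return plan;
}

const plan = planSlices(100, 8);
console.log(plan.length);           // 8
console.log(plan[plan.length - 1]); // { start: 84, count: 16 }
console.log(planSlices(3, 8));      // [ { start: 0, count: 3 } ]
```

With 100 rows and 8 threads, seven slices cover 12 rows each and the last one covers the remaining 16. The number of slices is also the number of resolve() calls the partitioned promise waits for.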

You can find the complete source code for this example here.

A major advantage of using Zig for a task like image processing is that the same code can be deployed both on the browser and on the server. After a user has made some changes to an image on the frontend, the backend can apply the exact same effect using the same code. Consult the Node version of this example to learn how to do it.

The image filter employed for this example is very rudimentary. Check out pb2zig's project page to see more advanced code.

That's it for now. I hope this tutorial is enough to get you started with using Zigar.