2021 01 12 Klines 1 loss function test - WojciechMigda/TruRL GitHub Wiki

Experiment parameters:

Episodes: 100

max_episode_steps: 200

Memory capacity: 100000

GAMMA: 0.70000

NEPOCHS(20)
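The replay memory capacity and `GAMMA` above point to an experience-replay Q-learning loop. A minimal sketch, assuming a standard one-step Q-learning target and uniform sampling from the memory; `ReplayMemory` and `q_target` are hypothetical names, not taken from the TruRL code:

```python
import random
from collections import deque

GAMMA = 0.7                # discount factor from the parameter list
MEMORY_CAPACITY = 100_000  # replay memory size from the parameter list


class ReplayMemory:
    """Hypothetical fixed-size experience buffer; the oldest transitions are dropped first."""

    def __init__(self, capacity: int = MEMORY_CAPACITY) -> None:
        self.buffer = deque(maxlen=capacity)

    def push(self, state, action, reward, next_state, done) -> None:
        self.buffer.append((state, action, reward, next_state, done))

    def sample(self, batch_size: int):
        # Uniform sampling without replacement (an assumption, not TruRL's documented scheme).
        return random.sample(list(self.buffer), batch_size)

    def __len__(self) -> int:
        return len(self.buffer)


def q_target(reward: float, next_q_values, done: bool, gamma: float = GAMMA) -> float:
    """One-step Q-learning target: r + gamma * max_a' Q(s', a'), or just r at episode end."""
    if done:
        return reward
    return reward + gamma * max(next_q_values)
```

With `GAMMA` = 0.7, a reward three steps ahead is weighted by 0.7³ ≈ 0.34, so the agent is fairly short-sighted.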

KBinsDiscretizer({

{34, -0.020000, 0.020000},

{34, -0.020000, 0.020000},

{34, -0.020000, 0.020000},

{34, -0.020000, 0.020000},

{34, -0.020000, 0.020000},

{34, -0.020000, 0.020000},

{34, -0.020000, 0.020000},

{34, -0.020000, 0.020000},

{34, -0.020000, 0.020000},

{34, -0.020000, 0.020000},

{34, -0.020000, 0.020000},

{34, -0.020000, 0.020000},

{34, -0.020000, 0.020000},

{34, -0.020000, 0.020000},

{34, -0.020000, 0.020000},

{34, -0.020000, 0.020000},

{34, -0.020000, 0.020000},

{34, -0.020000, 0.020000},

{34, -0.020000, 0.020000},

{34, -0.020000, 0.020000},

{34, -0.020000, 0.020000},

{34, -0.020000, 0.020000},

{34, -0.020000, 0.020000},

{34, -0.020000, 0.020000},

{34, -0.020000, 0.020000},

{34, -0.020000, 0.020000},

{34, -0.020000, 0.020000},

{34, -0.020000, 0.020000},

{34, -0.020000, 0.020000},

{34, -0.020000, 0.020000},

{10, 0.000000, 100.000000},

{10, 0.000000, 100.000000},

{10, 0.000000, 200.000000},})
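Each `{n_bins, low, high}` triple above presumably describes one observation feature: 30 features split into 34 bins on [-0.02, 0.02], two on [0, 100] and one on [0, 200]. The sketch below assumes equal-width bins, clipping of out-of-range values, and one-hot encoding into the binary input a Tsetlin machine requires; these choices and all names are hypothetical, not TruRL's actual implementation:

```python
from typing import List, Sequence, Tuple

# (n_bins, low, high) per feature, mirroring the configuration above.
BIN_SPECS: List[Tuple[int, float, float]] = (
    [(34, -0.02, 0.02)] * 30 + [(10, 0.0, 100.0)] * 2 + [(10, 0.0, 200.0)]
)


def discretize(value: float, n_bins: int, low: float, high: float) -> int:
    """Map a value to a bin index in [0, n_bins - 1] using equal-width bins.

    Values outside [low, high] are clipped into the first or last bin (assumption).
    """
    width = (high - low) / n_bins
    idx = int((value - low) // width)
    return min(max(idx, 0), n_bins - 1)


def one_hot(idx: int, n_bins: int) -> List[int]:
    """Binary vector of length n_bins with a single 1 at position idx."""
    return [1 if i == idx else 0 for i in range(n_bins)]


def encode(observation: Sequence[float]) -> List[int]:
    """Concatenate the one-hot bin codes of all features into one binary vector."""
    if len(observation) != len(BIN_SPECS):
        raise ValueError(f"expected {len(BIN_SPECS)} features, got {len(observation)}")
    bits: List[int] = []
    for value, (n_bins, low, high) in zip(observation, BIN_SPECS):
        bits.extend(one_hot(discretize(value, n_bins, low, high), n_bins))
    return bits
```

Under the one-hot assumption one observation becomes 30 × 34 + 3 × 10 = 1050 bits; a thermometer encoding would be an equally plausible alternative.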

Scaler({[-5.000000, 5.000000], [0, 10000]})
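`Scaler` appears to map Q-values from [-5, 5] (the "Q=±5" below) onto [0, 10000], whose upper end equals the classifier `threshold` (T=10k). A minimal sketch, assuming a plain linear map with clipping; `scale` and `unscale` are hypothetical names:

```python
from typing import Tuple

Q_RANGE: Tuple[float, float] = (-5.0, 5.0)          # Q-value range (Q=±5)
TARGET_RANGE: Tuple[float, float] = (0.0, 10000.0)  # regressor target range


def scale(q: float, src: Tuple[float, float] = Q_RANGE,
          dst: Tuple[float, float] = TARGET_RANGE) -> float:
    """Linearly map q from src to dst, clipping to dst (clipping is an assumption)."""
    (a, b), (c, d) = src, dst
    y = c + (q - a) * (d - c) / (b - a)
    return min(max(y, c), d)


def unscale(y: float, src: Tuple[float, float] = Q_RANGE,
            dst: Tuple[float, float] = TARGET_RANGE) -> float:
    """Inverse map from a regressor output back to the Q-value range."""
    (a, b), (c, d) = src, dst
    return a + (y - c) * (b - a) / (d - c)
```

Under this mapping Q = 0 lands at 5000, the midpoint of the target range.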

TsetliniClassifierBitwise({

"threshold": 10000,

"s": 4.000000,

"number_of_regressor_clauses": 3200,

"number_of_states": 127,

"boost_true_positive_feedback": 1,

"random_state": 1,

"n_jobs": 6,

"clause_output_tile_size": 16,

"weighted": true,

"loss_fn": "L1+2",

"loss_fn_C1": 0.100000,

"max_weight": 2147483647,

"verbose": false

})
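The page does not define the `"L1+2"` loss or `loss_fn_C1`. Purely as an illustration, assuming `"L1+2"` means an absolute-error term plus a squared-error term weighted by `loss_fn_C1` (a guess, not the Tsetlini library's documented definition), the compared variants could look like this:

```python
def l1_loss(e: float) -> float:
    """Absolute error."""
    return abs(e)


def l2_loss(e: float) -> float:
    """Squared error."""
    return e * e


def l1_plus_l2_loss(e: float, c1: float = 0.1) -> float:
    """Hypothetical reading of "L1+2": L1 term plus a C1-weighted L2 term.

    c1 defaults to the loss_fn_C1 value from the configuration above.
    """
    return l1_loss(e) + c1 * l2_loss(e)
```

Under this reading, a larger `C1` (for example the 0.5 of the `L1+2(0.5)` run) makes large errors weigh more heavily relative to small ones.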

Gym: <TimeLimit<WavyMarketEnv, gen_fn=07_klines Actions=[<Actions.HOLD: 0>, <Actions.BUY100: 1>, <Actions.SELL100: 2>]>>
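The environment exposes three discrete actions. A minimal sketch of greedy action selection over per-action Q-value predictions, assuming one prediction per action; the `Actions` enum mirrors the values in the repr above, while `greedy_action` is a hypothetical helper:

```python
from enum import IntEnum
from typing import Sequence


class Actions(IntEnum):
    HOLD = 0
    BUY100 = 1
    SELL100 = 2


def greedy_action(q_values: Sequence[float]) -> Actions:
    """Pick the action with the highest predicted Q-value (ties go to the lowest index)."""
    if len(q_values) != len(Actions):
        raise ValueError(f"expected {len(Actions)} Q-values, got {len(q_values)}")
    best = max(range(len(q_values)), key=lambda i: q_values[i])
    return Actions(best)
```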

Q=±5, T=10k

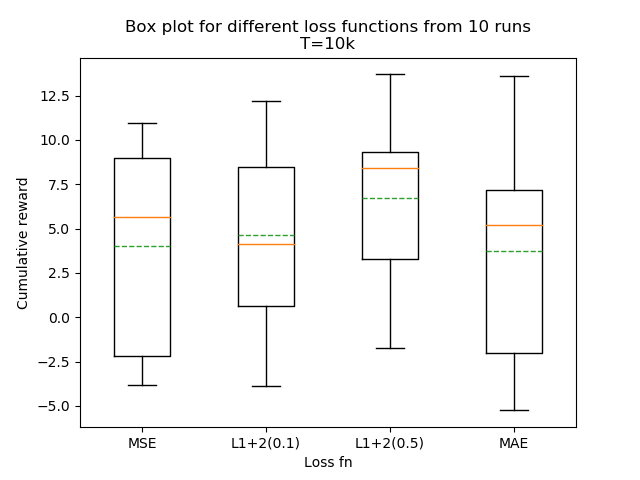

The goal of this experiment was to get a sense of how much changing the loss function can improve the stability of self-learning.

The baseline is log #2 from 2021-01-10 Klines initiative 1.

As the plot shows, there is some improvement (L1+2(0.5)), but unfortunately the results still show a large spread.

Location: /experiments/2021-01-12_klines_loss_fn

The scripts used are versioned in the directory above.