Home - wom-ai/inference_results_v1.0 GitHub Wiki

closed/NVIDIA only

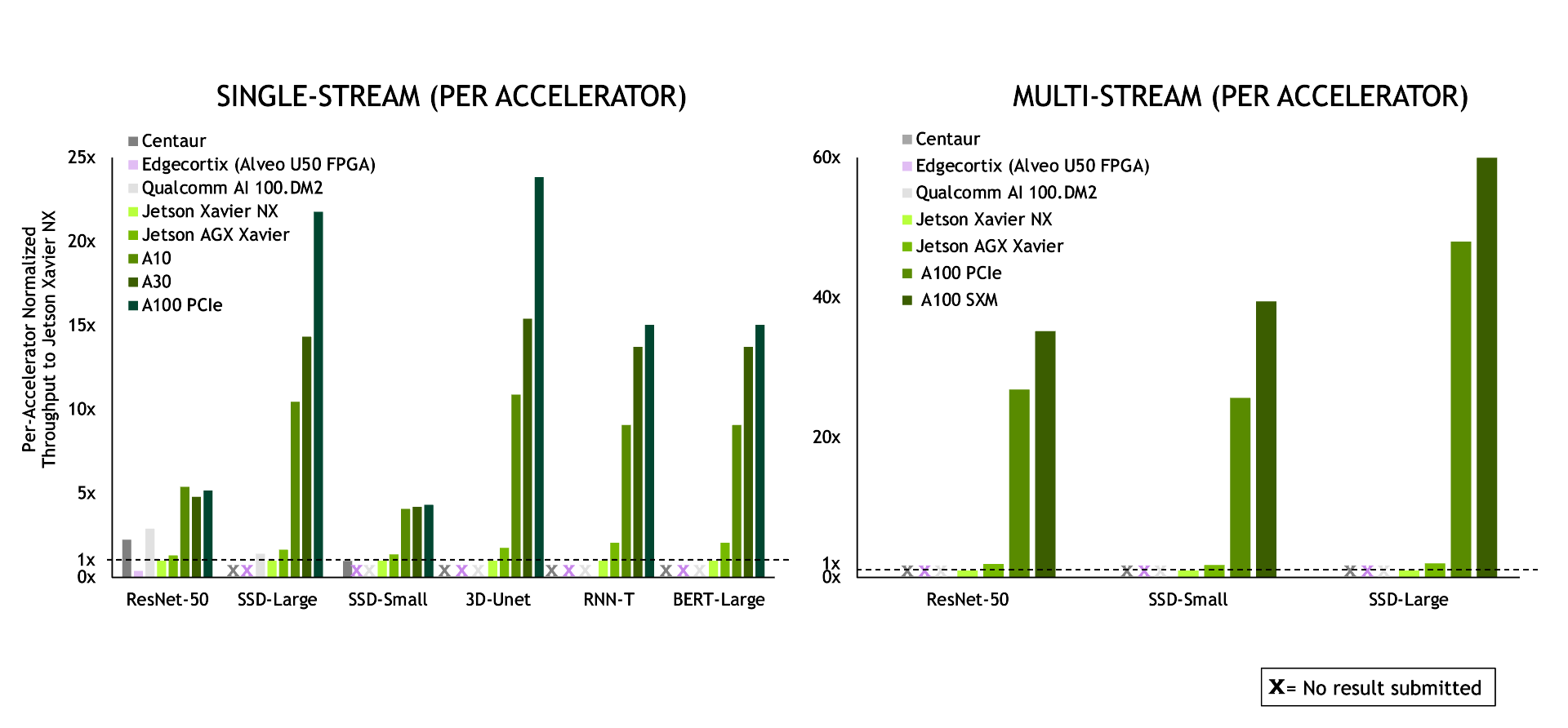

MLPerf5.0 for NVIDIA

- https://mlperf.org/inference-results/

- https://github.com/mlperf/inference_results_v1.0/tree/master/closed/NVIDIA

- Specification

- TRT7.2.3

- tensorflow==1.13.1

- DEVELOPER BLOG: Extending NVIDIA Performance Leadership with MLPerf Inference 1.0 Results

Manual

- set

stereroboybranch$ git checkout stereoboy

Docker

- Base Docker Image

- Edit Makefile

- Build Docker

make build_docker - Make directory for data

mkdir data - Run Docker

docker run --gpus all --rm -it \ -v /home/wom/work:/work \ -v /home/wom/work/inference_results_v1.0/closed/NVIDIA/data:/home \ -w /work/inference_results_v1.0/closed/NVIDIA \ --name test --net=host mlperf-inference:wom-latestdocker run --gpus '"device=0"' --rm -it \ -v /home/rofox/work:/work \ -v /home/rofox/work/mlperf/inference_results_v1.0/closed/NVIDIA/data:/home \ -w /work/mlperf/inference_results_v1.0/closed/NVIDIA \ --name test --net=host mlperf-inference:rofox-latestdocker exec -it test /bin/bash - In Docker

$ make build$ make download_model # Downloads models and saves to $MLPERF_SCRATCH_PATH/models $ make download_data # Downloads datasets and saves to $MLPERF_SCRATCH_PATH/data $ make preprocess_data # Preprocess data and saves to $MLPERF_SCRATCH_PATH/preprocessed_data- generate trt engine

make generate_engines RUN_ARGS="--benchmarks=ssd-mobilenet --scenarios=SingleStream" - harness

make run_harness RUN_ARGS="--benchmarks=ssd-mobilenet --scenarios=SingleStream"

- generate trt engine

Scripts

- generate trt engine

$ bash generate_engines.sh - harness

$ bash run_harness.sh

Platforms

-

Cuda compute capability Table

-

deviceQuerycommand$ apt install cuda-samples-11-1 $ cd /usr/local/cuda/samples $ make $ ./bin/x86_64/linux/release/deviceQuery -

Persistence Mode

NVIDIA Geforce RTX 3080 Laptop GPU wiki

/usr/local/cuda/samples# ./bin/x86_64/linux/release/deviceQuery

./bin/x86_64/linux/release/deviceQuery Starting...

CUDA Device Query (Runtime API) version (CUDART static linking)

Detected 1 CUDA Capable device(s)

Device 0: "NVIDIA GeForce RTX 3080 Laptop GPU"

CUDA Driver Version / Runtime Version 11.4 / 11.1

CUDA Capability Major/Minor version number: 8.6

Total amount of global memory: 7982 MBytes (8370061312 bytes)

(48) Multiprocessors, (128) CUDA Cores/MP: 6144 CUDA Cores

GPU Max Clock rate: 1605 MHz (1.61 GHz)

Memory Clock rate: 7001 Mhz

Memory Bus Width: 256-bit

L2 Cache Size: 4194304 bytes

Maximum Texture Dimension Size (x,y,z) 1D=(131072), 2D=(131072, 65536), 3D=(16384, 16384, 16384)

Maximum Layered 1D Texture Size, (num) layers 1D=(32768), 2048 layers

Maximum Layered 2D Texture Size, (num) layers 2D=(32768, 32768), 2048 layers

Total amount of constant memory: 65536 bytes

Total amount of shared memory per block: 49152 bytes

Total shared memory per multiprocessor: 102400 bytes

Total number of registers available per block: 65536

Warp size: 32

Maximum number of threads per multiprocessor: 1536

Maximum number of threads per block: 1024

Max dimension size of a thread block (x,y,z): (1024, 1024, 64)

Max dimension size of a grid size (x,y,z): (2147483647, 65535, 65535)

Maximum memory pitch: 2147483647 bytes

Texture alignment: 512 bytes

Concurrent copy and kernel execution: Yes with 2 copy engine(s)

Run time limit on kernels: Yes

Integrated GPU sharing Host Memory: No

Support host page-locked memory mapping: Yes

Alignment requirement for Surfaces: Yes

Device has ECC support: Disabled

Device supports Unified Addressing (UVA): Yes

Device supports Managed Memory: Yes

Device supports Compute Preemption: Yes

Supports Cooperative Kernel Launch: Yes

Supports MultiDevice Co-op Kernel Launch: Yes

Device PCI Domain ID / Bus ID / location ID: 0 / 1 / 0

Compute Mode:

< Default (multiple host threads can use ::cudaSetDevice() with device simultaneously) >

deviceQuery, CUDA Driver = CUDART, CUDA Driver Version = 11.4, CUDA Runtime Version = 11.1, NumDevs = 1

Result = PASS