Getting to Know Seldon

Seldon Core converts your ML models into production-ready REST/gRPC microservices.

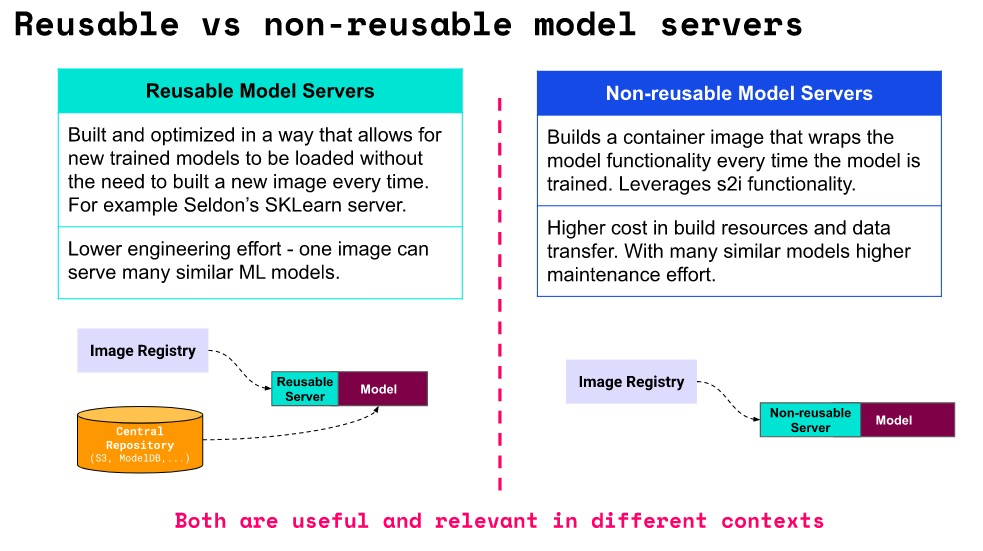

- Reusable and non-reusable model servers

- Language wrappers to containerize models

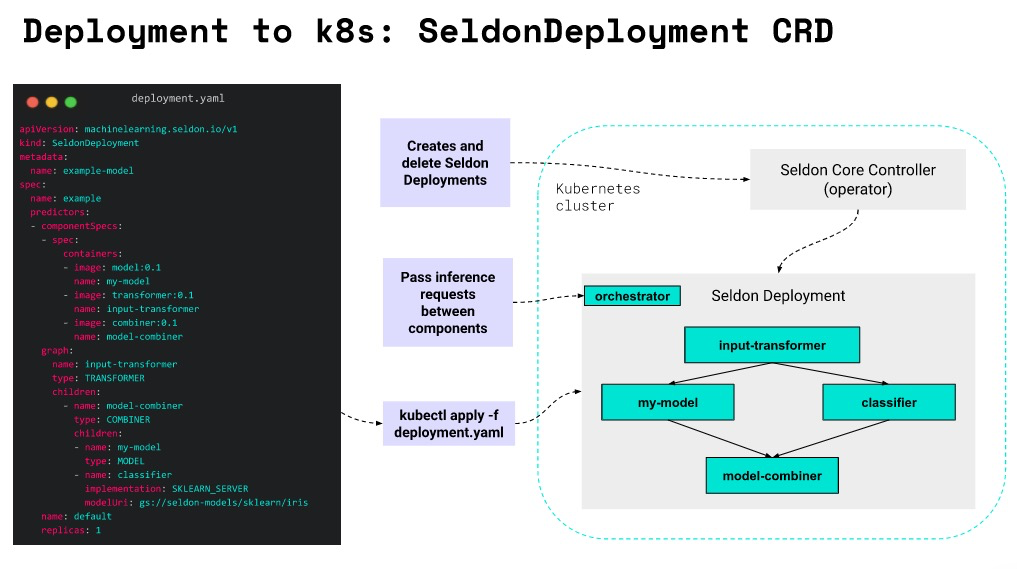

- SeldonDeployment CRD and seldon-core-operator

- Service Orchestrator for advanced inference graphs. See: Overview of Seldon Core Components

-

数据科学家

model使用最先进的库(mlflow,dvc,xgboost,scikit-learn等)准备ML 。 - 经过训练的模型将上传到中央存储库(例如S3存储)。

-

软件工程师准备使用方法,并将其作为Docker Image上传到Image Registry。

Reusable Model Server - 创建部署清单(CRD)并将其应用于Kubernetes集群。

Seldon Deployment - Seldon Core

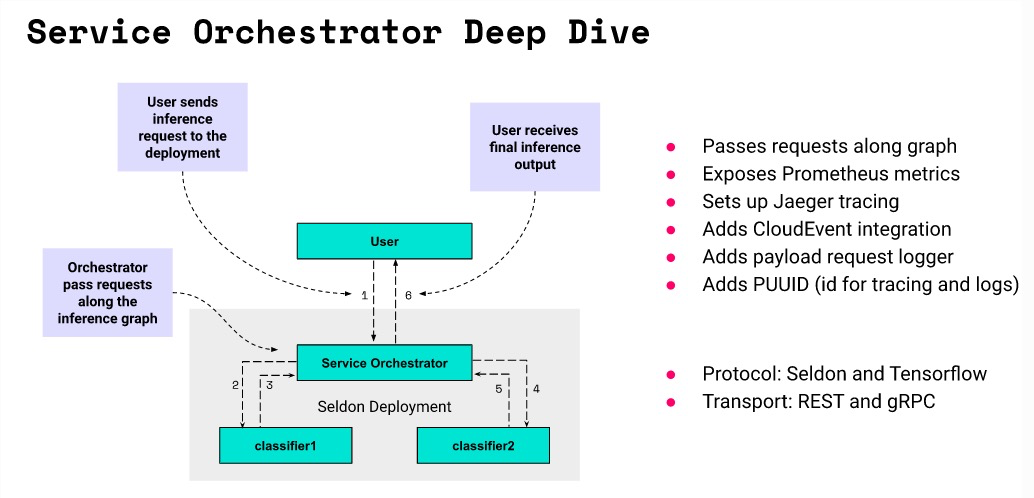

Operator创建所有必需的Kubernetes资源。 - 发送到的推理请求由传递给所有内部模型。

Seldon Deployment,Service Orchestrator - 可以通过利用我们与第三方框架的集成来收集指标和跟踪数据。

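- Reusable model servers: often called pre-packaged model servers. They allow deploying a family of similar models without building a new server each time. They typically fetch the model from a central repository (e.g. a company S3 bucket).
- Non-reusable model servers: dedicated servers that serve a single model. No central repository is needed, but a new image must be built for every model.
- Custom model image / custom inference server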

Example deployment.yaml, where `implementation` is one of MLFLOW_SERVER | SKLEARN_SERVER | TENSORFLOW_SERVER | XGBOOST_SERVER | CUSTOM_INFERENCE_SERVER (custom):

    apiVersion: machinelearning.seldon.io/v1alpha2
    kind: SeldonDeployment
    metadata:
      name: nlp-model
    spec:
      predictors:
      - graph:
          children: []
          # or MLFLOW_SERVER | SKLEARN_SERVER | TENSORFLOW_SERVER ...
          implementation: CUSTOM_INFERENCE_SERVER
          modelUri: s3://our-custom-models/nlp-model
          name: model
        name: default
        replicas: 1

Official site: https://docs.seldon.io/projects/seldon-core/en/latest/

Source: https://github.com/SeldonIO/seldon-core

-

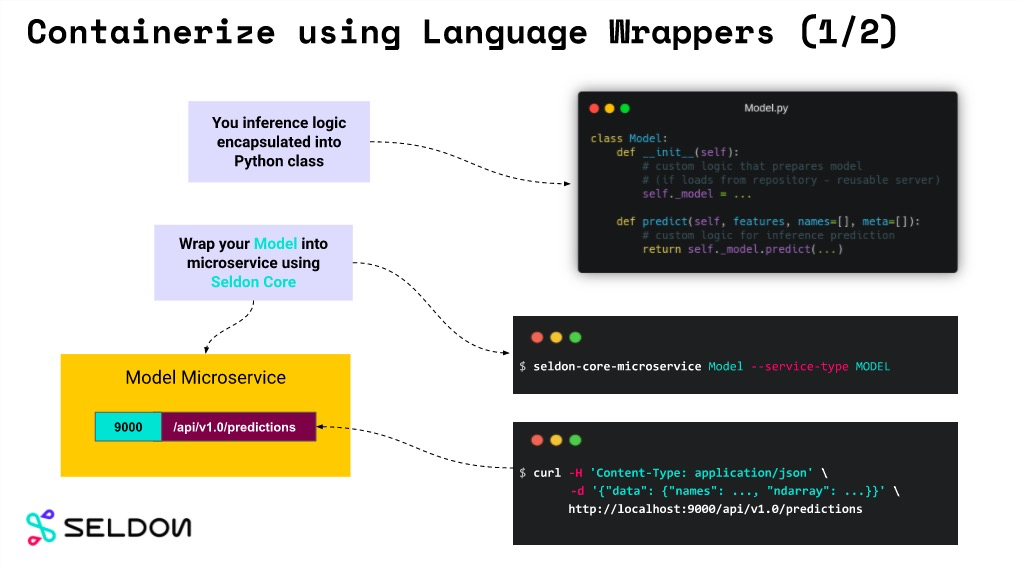

建模人员编写Model.py 实现

__init__和prodict函数class Model: def __init__(self, ...): """Custom logic that prepares model. - Reusable servers: your_loader downloads model from remote repository. - Non-Reusable servers: your_loader loads model from a file embedded in the image. """ self._model = your_loader(...) def predict(self, features, names=[], meta=[]): """Custom inference logic."""" return self._model.predict(...)
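To make the skeleton above concrete, here is a minimal runnable sketch. `EchoModel` and the body of `your_loader` are hypothetical stand-ins (the original leaves the loader elided); a real loader would download the artifact for a reusable server, or read a file baked into the image for a non-reusable one.

```python
class EchoModel:
    """Hypothetical stand-in for a trained model: returns each row's sum."""

    def predict(self, rows):
        return [[sum(row)] for row in rows]


def your_loader(model_uri=None):
    """Hypothetical loader.

    With a model_uri it would fetch from a remote repository (reusable server);
    without one it would read a file embedded in the image (non-reusable server).
    Here it simply builds the stand-in model.
    """
    return EchoModel()


class Model:
    def __init__(self, model_uri=None):
        self._model = your_loader(model_uri)

    def predict(self, features, names=None, meta=None):
        # names and meta are accepted to mirror the signature shown above.
        return self._model.predict(features)


if __name__ == "__main__":
    print(Model().predict([[1, 2, 3, 4]]))  # prints [[10]]
```

The only difference between the two server styles in this sketch is what `your_loader` does; the `Model` class itself stays the same.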

- Model servers

  The main difference between Reusable and Non-Reusable model servers is whether the model is loaded dynamically or embedded in the image itself. The class is wrapped as a microservice with:

      seldon-core-microservice Model --service-type MODEL

      $ curl http://localhost:9000/api/v1.0/predictions \
          -H 'Content-Type: application/json' \
          -d '{"data": {"names": ..., "ndarray": ...}}'
      {
        "meta" : {...},
        "data" : {"names": ..., "ndarray" : ...}
      }
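The same request can be issued from Python. The sketch below uses only the standard library; the helper names (`build_request`, `parse_response`, `predict`) are illustrative and not part of Seldon's client API, and the URL is the local one from the curl call above.

```python
import json
import urllib.request


def build_request(rows, names=None):
    """Build a JSON body of the form {"data": {"names": ..., "ndarray": ...}}."""
    data = {"ndarray": rows}
    if names is not None:
        data["names"] = names
    return json.dumps({"data": data})


def parse_response(body):
    """Return (names, ndarray) from a JSON response of the same shape."""
    data = json.loads(body)["data"]
    return data.get("names", []), data["ndarray"]


def predict(url, rows, names=None):
    """POST the payload to a running microservice (not called in this sketch)."""
    request = urllib.request.Request(
        url,
        data=build_request(rows, names).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request) as response:
        return parse_response(response.read().decode("utf-8"))
```

With a microservice listening locally, `predict("http://localhost:9000/api/v1.0/predictions", [[1, 2, 3, 4]])` would return the names and the prediction array.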

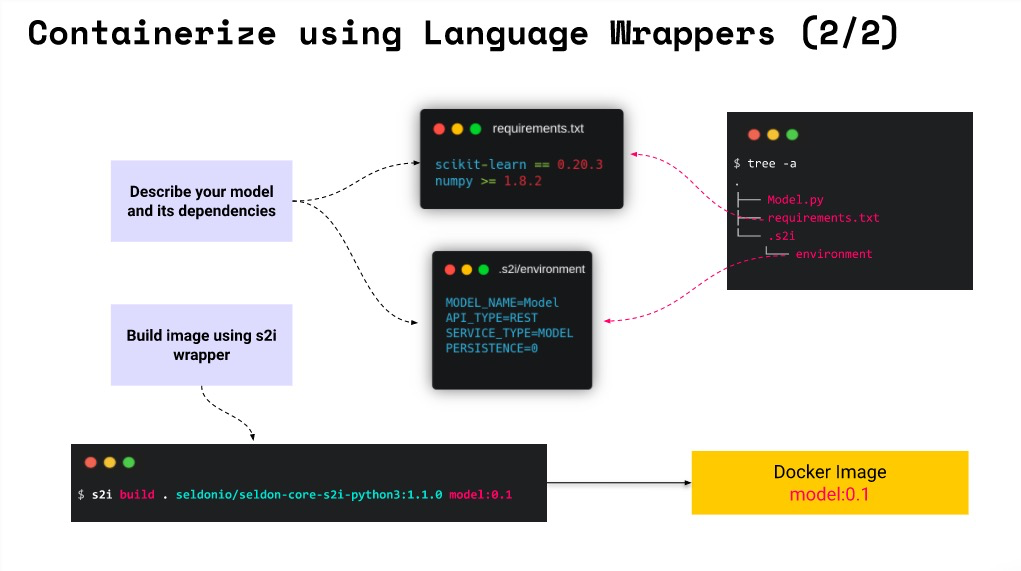

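- Write the model configuration
  - requirements.txt: a file describing your runtime dependencies
  - .s2i/environment: a file describing your microservice (API and model type)
- Build a standalone image:

      s2i build . seldonio/seldon-core-s2i-python3:1.1.0 model:0.1

- Example: seldon-core-master/examples/models/sklearn_iris_customdata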

Inference graph example with two chained models:

    apiVersion: machinelearning.seldon.io/v1
    kind: SeldonDeployment
    metadata:
      name: fixed
    spec:
      name: fixed
      protocol: seldon
      transport: rest
      predictors:
      - componentSpecs:
        - spec:
            containers:
            - image: seldonio/fixed-model:0.1
              name: classifier1
            - image: seldonio/fixed-model:0.1
              name: classifier2
        graph:
          name: classifier1
          type: MODEL
          children:
          - name: classifier2
            type: MODEL
        name: default
        replicas: 1

See: https://docs.seldon.io/projects/seldon-core/en/latest/graph/svcorch.html
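As a rough mental model of what the graph above asks for, the sketch below walks a graph dict and feeds each node's output to its children in turn. It is a simplified illustration, not the service orchestrator's actual implementation: it only handles MODEL nodes, and the two lambdas are hypothetical stand-ins for the `classifier1` and `classifier2` containers.

```python
def run_graph(node, components, payload):
    """Pass payload through a node, then through each of its children in turn."""
    output = components[node["name"]](payload)
    for child in node.get("children", []):
        output = run_graph(child, components, output)
    return output


# Same shape as the `graph` section of the manifest.
graph = {
    "name": "classifier1",
    "type": "MODEL",
    "children": [{"name": "classifier2", "type": "MODEL"}],
}

# Hypothetical stand-ins for the two containers.
components = {
    "classifier1": lambda rows: [[x * 2 for x in row] for row in rows],
    "classifier2": lambda rows: [[x + 1 for x in row] for row in rows],
}

result = run_graph(graph, components, [[1, 2]])
print(result)  # prints [[3, 5]]
```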

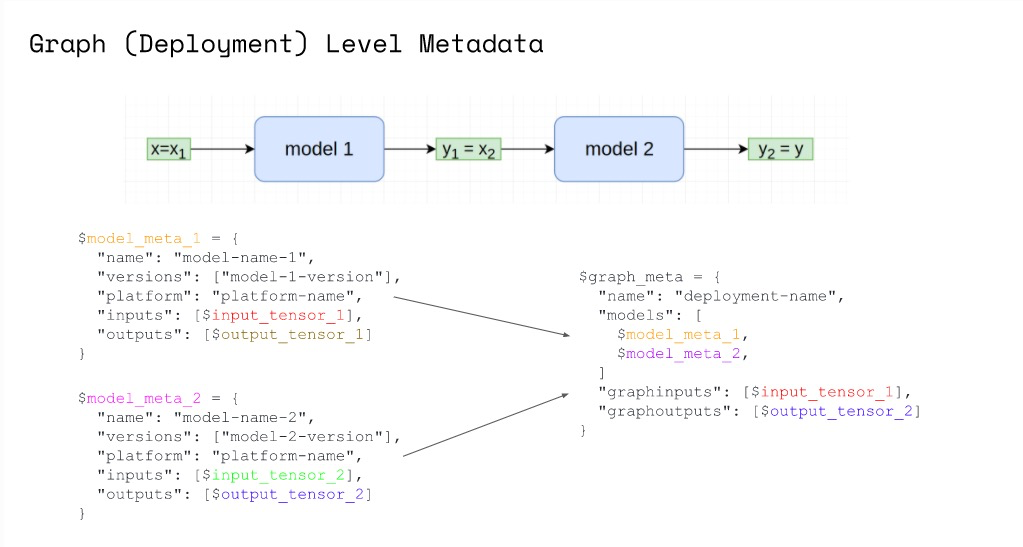

    {
      "name": "example",
      "models": {
        "node-one": {
          "name": "node-one",
          "platform": "seldon",
          "versions": ["generic-node/v0.3"],
          "inputs": [
            {"messagetype": "tensor", "schema": {"names": ["one-input"]}}
          ],
          "outputs": [
            {"messagetype": "tensor", "schema": {"names": ["one-output"]}}
          ]
        },
        "node-two": {
          "name": "node-two",
          "platform": "seldon",
          "versions": ["generic-node/v0.3"],
          "inputs": [
            {"messagetype": "tensor", "schema": {"names": ["two-input"]}}
          ],
          "outputs": [
            {"messagetype": "tensor", "schema": {"names": ["two-output"]}}
          ]
        }
      },
      "graphinputs": [
        {"messagetype": "tensor", "schema": {"names": ["one-input"]}}
      ],
      "graphoutputs": [
        {"messagetype": "tensor", "schema": {"names": ["two-output"]}}
      ]
    }

See: https://docs.seldon.io/projects/seldon-core/en/latest/reference/apis/metadata.html

- Option 1: metadata.yaml (the most general)

      name: my-model
      versions: [my-model/v1]
      platform: platform-name
      inputs:
      - messagetype: tensor
        schema:
          names: [a, b, c, d]
          shape: [4]
      outputs:
      - messagetype: tensor
        schema:
          shape: [ 1 ]

- Option 2: a Python model can also define its metadata by implementing the `init_metadata` method in Model.py.

      class Model:
          ...

          def init_metadata(self):
              meta = {
                  "name": "my-model-name",
                  "versions": ["my-model-version-01"],
                  "platform": "seldon",
                  "inputs": [
                      {
                          "messagetype": "tensor",
                          "schema": {"names": ["a", "b", "c", "d"], "shape": [4]},
                      }
                  ],
                  "outputs": [{"messagetype": "tensor", "schema": {"shape": [1]}}],
              }
              return meta
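Before shipping a metadata dict like the one above, a quick sanity check can catch simple mistakes. `check_metadata` below is a hypothetical helper, not part of Seldon; it only looks at the fields used in these examples and makes no claim about which fields Seldon itself requires.

```python
REQUIRED_KEYS = ("name", "versions", "platform", "inputs", "outputs")


def check_metadata(meta):
    """Return a list of problems found in a metadata dict (empty if none)."""
    problems = [f"missing key: {key}" for key in REQUIRED_KEYS if key not in meta]
    for section in ("inputs", "outputs"):
        for i, item in enumerate(meta.get(section, [])):
            schema = item.get("schema", {})
            names, shape = schema.get("names"), schema.get("shape")
            # For a 1-D tensor, the number of names should match its size.
            if names and shape and len(shape) == 1 and len(names) != shape[0]:
                problems.append(f"{section}[{i}]: {len(names)} names for shape {shape}")
    return problems


meta = {
    "name": "my-model-name",
    "versions": ["my-model-version-01"],
    "platform": "seldon",
    "inputs": [
        {"messagetype": "tensor", "schema": {"names": ["a", "b", "c", "d"], "shape": [4]}}
    ],
    "outputs": [{"messagetype": "tensor", "schema": {"shape": [1]}}],
}
print(check_metadata(meta))  # prints []
```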

- Option 3: override it in the deployment YAML through the MODEL_METADATA environment variable.

      apiVersion: machinelearning.seldon.io/v1
      kind: SeldonDeployment
      metadata:
        name: seldon-model
      spec:
        name: test-deployment
        predictors:
        - componentSpecs:
          - spec:
              containers:
              - name: my-model
                image: ...
                env:
                - name: MODEL_METADATA
                  value: |
                    ---
                    name: my-model-name
                    versions: [ my-model-version ]
                    platform: seldon
                    inputs:
                    - messagetype: tensor
                      schema:
                        names: [a, b, c, d]
                        shape: [4]
                    outputs:
                    - messagetype: tensor
                      schema:
                        shape: [ 1 ]
          graph:
            name: my-model
            ...
          name: example
          replicas: 1

- Retrieving metadata through the API

      curl -s http://localhost:8003/seldon/seldon/minio-sklearn/api/v1.0/metadata/classifier | jq .
      {
        "inputs": [
          { "datatype": "BYTES", "name": "input", "shape": [ 1, 4 ] }
        ],
        "name": "iris",
        "outputs": [
          { "datatype": "BYTES", "name": "output", "shape": [ 3 ] }
        ],
        "platform": "sklearn",
        "versions": [ "iris/v1-updated" ]
      }

Examples for SKlearn: https://docs.seldon.io/projects/seldon-core/en/latest/examples/minio-sklearn.html

{

"parameters":{

// 入参

"features":[

{

"name":"fixed_acidity",

"dtype":"FLOAT",

"ftype":"continuous",

"range":[0,30]

},

{

"name":"volatile_acidity",

"dtype":"FLOAT",

"ftype":"continuous",

"range":[0,30]

},

...

],

// 出参

"targets":[

{

"name":"alcohol_quality",

"dtype":"FLOAT", "ftype":"continuous",

"range":[0,10],

"repeat": 1

}],

// 默认值(独立配置方便用于模型测试)

"defaults":[

12.8, 0.029, 0.48, 0.98, 6.2, 29, 3.33, 1.2, 0.39, 75, 0.66

]

}

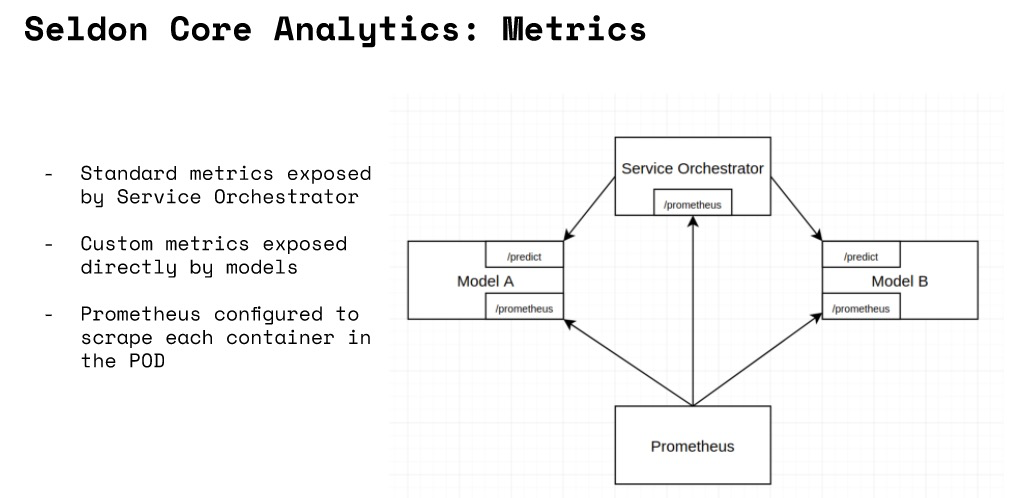

}class Model:

...

def metrics(self):

return [

# a counter which will increase by the given value

{"type": "COUNTER", "key": "mycounter", "value": 1},

# a gauge which will be set to given value

{"type": "GAUGE", "key": "mygauge", "value": 100},

# a timer which will add sum and count metrics - assumed millisecs

{"type": "TIMER", "key": "mytimer", "value": 20.2},

]- 管理端

      # Install the analytics chart
      helm install seldon-core-analytics seldon-core-analytics \
          --repo https://storage.googleapis.com/seldon-charts \
          --namespace seldon-system

      # Open a dashboard (Grafana or Prometheus)
      kubectl port-forward svc/seldon-core-analytics-grafana 3000:80 -n seldon-system
      # then open http://localhost:3000/dashboard/db/prediction-analytics

      # or
      kubectl port-forward svc/seldon-core-analytics-prometheus-seldon 3001:80 -n seldon-system
      # then open http://localhost:3001/

See: https://docs.seldon.io/projects/seldon-core/en/latest/analytics/analytics.html

Question 1: Does the model have to push its output metrics to a collector (e.g. InfluxDB), or does the management side scrape them through an endpoint (Prometheus)?

Current understanding: Prometheus scrapes actively; no metrics-host configuration was found on the model service side.

Question 2: How does the Prometheus server know which machines to scrape?

Targets can be selected using Kubernetes information. See the kubernetes_sd_configs section of prometheus.yml.

Prediction Requests

- seldon_api_executor_server_requests_seconds_(bucket,count,sum): Requests to the service orchestrator from an ingress, e.g. API gateway or Ambassador
- seldon_api_executor_client_requests_seconds_(bucket,count,sum): Requests from the service orchestrator to a component, e.g., a model
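Since Prometheus pulls rather than the model pushing (per the understanding noted under Question 1), the model only has to expose values through its `metrics()` method. The sketch below is one way a model might report its own call count and latency; the metric keys and the placeholder inference are hypothetical, and only the COUNTER/GAUGE/TIMER dict shape comes from the earlier example.

```python
import time


class Model:
    def __init__(self):
        self._calls = 0
        self._last_ms = 0.0

    def predict(self, X, names=None, meta=None):
        start = time.perf_counter()
        output = [[sum(row)] for row in X]  # placeholder for real inference
        self._last_ms = (time.perf_counter() - start) * 1000.0
        self._calls += 1
        return output

    def metrics(self):
        return [
            # incremented by 1 for every reported request
            {"type": "COUNTER", "key": "predict_calls", "value": 1},
            # set to the total number of calls this instance has seen
            {"type": "GAUGE", "key": "calls_seen", "value": self._calls},
            # duration of the last predict call, in milliseconds
            {"type": "TIMER", "key": "predict_ms", "value": self._last_ms},
        ]
```

Keeping the timing inside `predict` and only reading it in `metrics` keeps the two methods independent of call order beyond "predict ran first".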

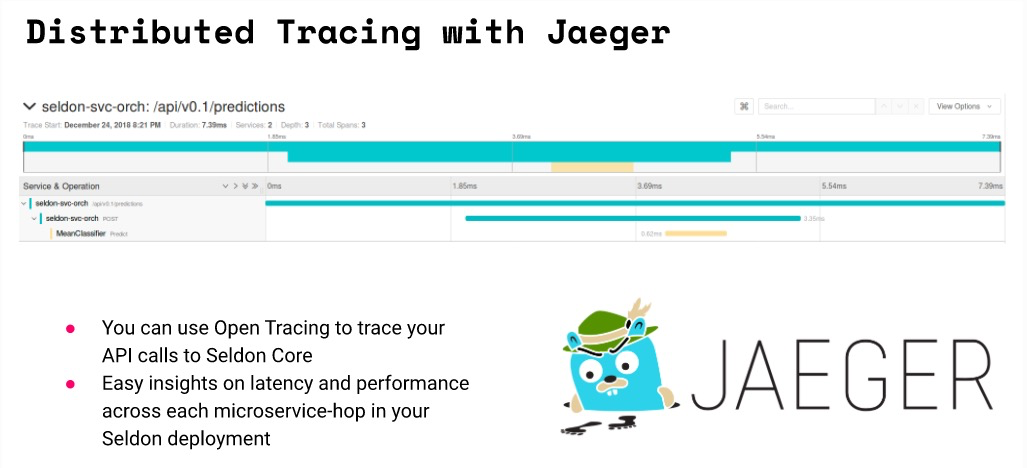

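You can use OpenTracing to trace API calls to Seldon Core. Distributed tracing with Jaeger is supported by default, which gives insight into the latency and performance of each microservice hop in a Seldon deployment.

See: https://docs.seldon.io/projects/seldon-core/en/latest/graph/distributed-tracing.html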

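The service orchestrator is a component added to your inference graph to:

- Correctly manage the request/response paths described by the inference graph
- Expose Prometheus metrics
- Provide tracing via OpenTracing
- Add CloudEvents-based payload logging

The current service orchestrator is a Go implementation. The previous Java implementation is deprecated as of Seldon Core 1.2.

In Seldon Core 1.1+, you can specify the protocol and transport for the data plane of your inference graph. The following combinations are currently allowed:

- Protocol: Seldon, Tensorflow
- Transport: REST, gRPC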
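The protocol choice changes the REST path a client calls. The helper below is a hypothetical sketch: the Seldon path follows the `/seldon/<namespace>/<model-name>/api/v1.0/...` pattern used elsewhere in these notes, while the Tensorflow path is an assumption based on the TensorFlow Serving REST convention (`/v1/models/<model>:predict`) and should be checked against the Seldon documentation.

```python
def prediction_url(ingress, namespace, deployment, protocol="seldon", model=None):
    """Build the REST prediction URL for a deployment behind an ingress."""
    base = f"http://{ingress}/seldon/{namespace}/{deployment}"
    if protocol == "seldon":
        return f"{base}/api/v1.0/predictions"
    if protocol == "tensorflow":
        # Assumed path, following the TensorFlow Serving REST convention.
        if model is None:
            raise ValueError("the tensorflow protocol needs a model name")
        return f"{base}/v1/models/{model}:predict"
    raise ValueError(f"unsupported protocol: {protocol}")


print(prediction_url("localhost:8003", "seldon", "iris-model"))
# prints http://localhost:8003/seldon/seldon/iris-model/api/v1.0/predictions
```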

Install Seldon Core with Helm:

    kubectl create namespace seldon-system

    helm install seldon-core seldon-core-operator \
        --repo https://storage.googleapis.com/seldon-charts \
        --set usageMetrics.enabled=true \
        --namespace seldon-system

- Deploy the model service with kubectl apply

      $ kubectl apply -f - << END
      apiVersion: machinelearning.seldon.io/v1
      kind: SeldonDeployment
      metadata:
        name: iris-model
        namespace: seldon
      spec:
        name: iris
        predictors:
        - graph:
            implementation: SKLEARN_SERVER
            modelUri: gs://seldon-models/sklearn/iris
            name: classifier
          name: default
          replicas: 1
      END

- Test

      # URL pattern: http://<ingress_url>/seldon/<namespace>/<model-name>/api/v1.0/doc/
      $ curl -X POST http://<ingress>/seldon/seldon/iris-model/api/v1.0/predictions \
          -H 'Content-Type: application/json' \
          -d '{ "data": { "ndarray": [[1,2,3,4]] } }'
      {
        "meta" : {},
        "data" : {
          "names" : [
            "t:0",
            "t:1",
            "t:2"
          ],
          "ndarray" : [
            [
              0.000698519453116284,
              0.00366803903943576,
              0.995633441507448
            ]
          ]
        }
      }

- Model.py

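      import pickle

      class Model:
          def __init__(self):
              # pickle.load reads from an open file; pickle.loads expects bytes
              with open("model.pickle", "rb") as f:
                  self._model = pickle.load(f)

          def predict(self, X):
              # Assumes the unpickled object is callable; a scikit-learn
              # estimator would need self._model.predict(X) instead.
              output = self._model(X)
              return output

- Build an image: sklearn_iris:0.1

      s2i build . seldonio/seldon-core-s2i-python3:0.18 sklearn_iris:0.1

- [Reusable image] Do not build a dedicated image. Instead, mount Model.py into the generic image at startup. See: Seldon制作模型镜像.md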

- Deploy to k8s

      $ kubectl apply -f - << END
      apiVersion: machinelearning.seldon.io/v1
      kind: SeldonDeployment
      metadata:
        name: iris-model
        namespace: model-namespace
      spec:
        name: iris
        predictors:
        - componentSpecs:
          - spec:
              containers:
              - name: classifier
                image: sklearn_iris:0.1
          graph:
            name: classifier
          name: default
          replicas: 1
      END

- Test the result

      $ curl -X POST http://<ingress>/seldon/model-namespace/iris-model/api/v1.0/predictions \
          -H 'Content-Type: application/json' \
          -d '{ "data": { "ndarray": [1,2,3,4] } }' | json_pp
      {
        "meta" : {},
        "data" : {
          "names" : [
            "t:0",
            "t:1",
            "t:2"
          ],
          "ndarray" : [
            [
              0.000698519453116284,
              0.00366803903943576,
              0.995633441507448
            ]
          ]
        }
      }

Pre-packaged inference server examples

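- Deploy a scikit-learn model binary
- Deploy a Tensorflow exported model
- MLflow pre-packaged model server A/B test
- Deploy an XGBoost model binary
- Deploy a pre-packaged model server with in-cluster MinIO

Python language wrapper examples

Batch processing with Seldon Core

MLOps: scaling, monitoring, and observability

- Autoscaling example
- Request payload logging with ELK
- Custom metrics with Grafana and Prometheus
- Distributed tracing with Jaeger
- CI/CD with Jenkins Classic
- CI/CD with Jenkins X
- Replica control

(See: https://docs.seldon.io/projects/seldon-core/en/latest/examples/notebooks.html)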

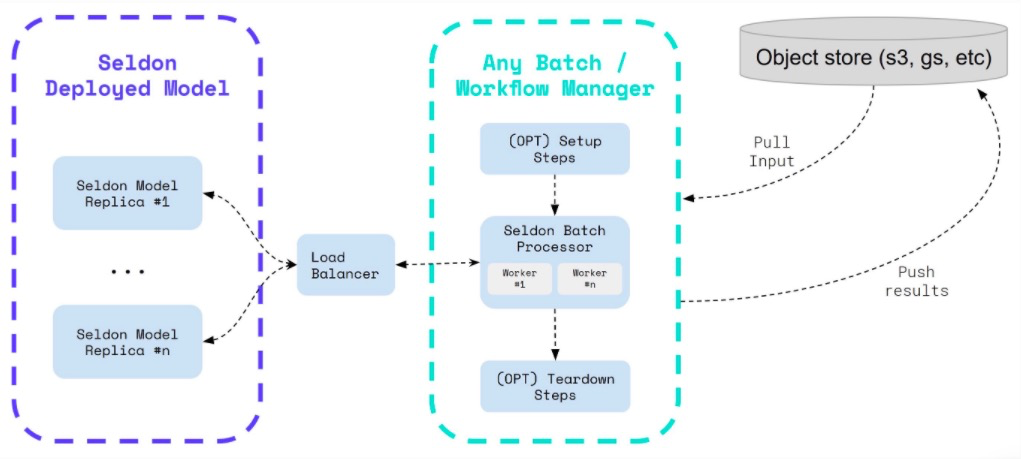

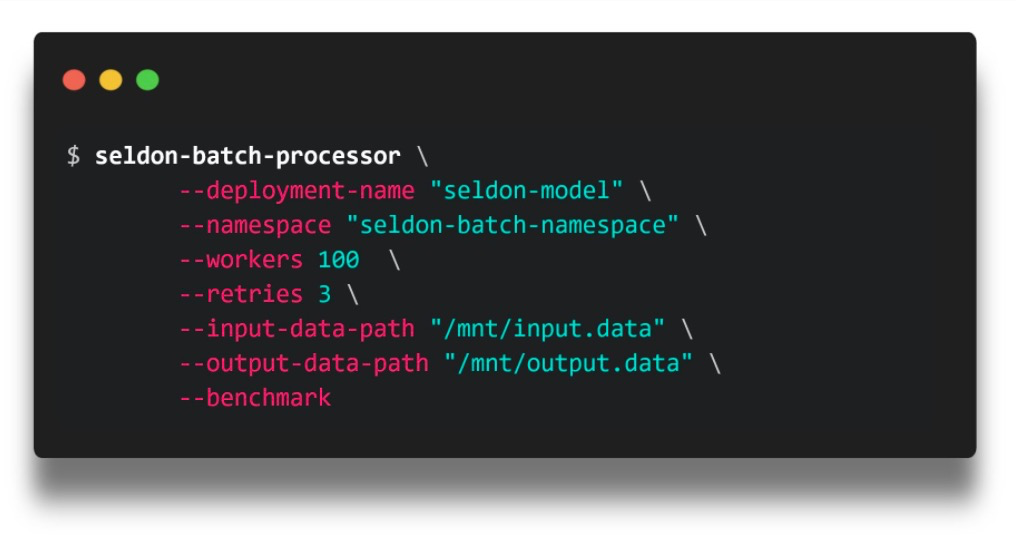

Batch jobs can be triggered on a schedule (e.g. daily or monthly) or programmatically. See: https://docs.seldon.io/projects/seldon-core/en/latest/servers/batch.html

    $ seldon-batch-processor \
        --deployment-name "{{workflow.name}}" \
        --namespace "seldon-batch-namespace" \
        --workers 100 \
        --retries 3 \
        --input-data-path "/assets/input-data.txt" \
        --output-data-path "/assets/output-data.txt" \
        --benchmark
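The command above reads from a plain text file. A minimal sketch for producing such a file follows, under the assumption (not stated in these notes) that the processor expects one JSON-encoded instance per line; `write_batch_input` is a hypothetical helper name.

```python
import json


def write_batch_input(path, rows):
    """Write one JSON-encoded instance per line; return the number of lines."""
    with open(path, "w", encoding="utf-8") as f:
        for row in rows:
            # Each instance is wrapped in a list so it forms a 2-D ndarray.
            f.write(json.dumps([row]) + "\n")
    return len(rows)
```

For example, `write_batch_input("input-data.txt", [[1, 2, 3, 4], [5, 6, 7, 8]])` writes two lines, `[[1, 2, 3, 4]]` and `[[5, 6, 7, 8]]`.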

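- Integration with ETL and workflow managers

Open questions:

- How does seldon-batch-processor pull data from ETL? How does it integrate with a workflow manager?

  Not found yet.

- Does seldon-batch-processor run as a service? Does it expose an API?

  Apparently not.

- How are batch logs collected (for evaluation and analysis)?

  Not found yet.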

https://docs.seldon.io/projects/seldon-core/en/latest/analytics/cicd-mlops.html

https://docs.seldon.io/projects/seldon-core/en/latest/graph/scaling.html

https://docs.seldon.io/projects/seldon-core/en/latest/ingress/istio.html

    apiVersion: networking.istio.io/v1alpha3
    kind: Gateway
    metadata:
      name: seldon-gateway
      namespace: istio-system
    spec:
      selector:
        istio: ingressgateway # use istio default controller
      servers:
      - port:
          number: 80
          name: http
          protocol: HTTP
        hosts:
        - "*"

Traffic routing

- Canary rollouts
- Blue-green deployments
- A/B testing
- Shadow deployments
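Canary rollouts and A/B tests both come down to splitting requests between predictors by weight. The simulation below illustrates that idea only; it is not how Istio or Seldon implement routing, and the predictor names and the 75/25 split are hypothetical.

```python
import random


def pick_predictor(weights, rng):
    """Pick a predictor name with probability proportional to its weight."""
    names = list(weights)
    return rng.choices(names, weights=[weights[n] for n in names], k=1)[0]


rng = random.Random(0)  # seeded so the run is repeatable
weights = {"main": 75, "canary": 25}  # hypothetical traffic split
counts = {"main": 0, "canary": 0}
for _ in range(10_000):
    counts[pick_predictor(weights, rng)] += 1

print(counts)  # roughly three quarters of the requests go to "main"
```

A shadow deployment differs in that the shadow receives a copy of the traffic while only the main predictor's response is returned, so it would not be modelled as a weighted choice.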

https://docs.seldon.io/projects/seldon-core/en/latest/workflow/troubleshooting.html

https://docs.seldon.io/projects/seldon-core/en/latest/reference/helm.html

    # # Seldon Core Operator
    # Below are the default values when installing Seldon Core

    # ## Ingress Options
    # You are able to choose between Istio and Ambassador
    # If you have ambassador installed you can just use the enabled flag
    ambassador:
      enabled: true
      singleNamespace: false
    # When activating Istio, respective virtual services will be created
    # You must make sure you create the seldon-gateway as well
    istio:
      enabled: false
      gateway: istio-system/seldon-gateway
      tlsMode: ""

    # ## Install with Cert Manager
    # See installation page in documentation for more information
    certManager:
      enabled: false

    # ## Install with limited namespace visibility
    # If you want to ensure seldon-core-controller can only have visibility
    # to specific namespaces you can set the controllerId
    controllerId: ""

    # Whether operator should create the webhooks and configmap on startup (false means created from chart)
    managerCreateResources: false

    # Default user id to add to all Pod Security Context as the default
    # Use this to ensure all container run as non-root by default
    # For openshift leave blank as usually this will be injected automatically on an openshift cluster
    # to all pods.
    defaultUserID: "8888"

    # ## Service Orchestrator (Executor)
    # The executor is the default service orchestrator which has superseded the "Java Engine"
    executor:
      enabled: true
      port: 8000
      metricsPortName: metrics
      image:
        pullPolicy: IfNotPresent
        registry: docker.io
        repository: seldonio/seldon-core-executor
        tag: 1.2.2-dev
      prometheus:
        path: /prometheus
      serviceAccount:
        name: default
      user: 8888
      # If you want to make available your own request logger for ELK integration you can set this
      # For more information see the Production Integration for Payload Request Logging with ELK in the docs
      requestLogger:
        defaultEndpoint: 'http://default-broker'

    # ## Seldon Core Controller Manager Options
    image:
      pullPolicy: IfNotPresent
      registry: docker.io
      repository: seldonio/seldon-core-operator
      tag: 1.2.2-dev
    manager:
      cpuLimit: 500m
      cpuRequest: 100m
      memoryLimit: 300Mi
      memoryRequest: 200Mi
    rbac:
      configmap:
        create: true
      create: true
    serviceAccount:
      create: true
      name: seldon-manager
    singleNamespace: false
    storageInitializer:
      cpuLimit: "1"
      cpuRequest: 100m
      image: gcr.io/kfserving/storage-initializer:0.2.2
      memoryLimit: 1Gi
      memoryRequest: 100Mi
    usageMetrics:
      enabled: false
    webhook:
      port: 443

    # ## Predictive Unit Values
    predictiveUnit:
      port: 9000
      metricsPortName: metrics
      # If you would like to add extra environment variables to the init container to make available
      # secrets such as cloud credentials, you can provide a default secret name that will be loaded
      # to all the containers. You can then override this using the envSecretRefName in SeldonDeployments
      defaultEnvSecretRefName: ""

    predictor_servers:
      MLFLOW_SERVER:
        grpc:
          defaultImageVersion: "1.2.2-dev"
          image: seldonio/mlflowserver_grpc
        rest:
          defaultImageVersion: "1.2.2-dev"
          image: seldonio/mlflowserver_rest
      SKLEARN_SERVER:
        grpc:
          defaultImageVersion: "1.2.2-dev"
          image: seldonio/sklearnserver_grpc
        rest:
          defaultImageVersion: "1.2.2-dev"
          image: seldonio/sklearnserver_rest
      TENSORFLOW_SERVER:
        grpc:
          defaultImageVersion: "1.2.2-dev"
          image: seldonio/tfserving-proxy_grpc
        rest:
          defaultImageVersion: "1.2.2-dev"
          image: seldonio/tfserving-proxy_rest
        tensorflow: true
        tfImage: tensorflow/serving:2.1.0
      XGBOOST_SERVER:
        grpc:
          defaultImageVersion: "1.2.2-dev"
          image: seldonio/xgboostserver_grpc
        rest:
          defaultImageVersion: "1.2.2-dev"
          image: seldonio/xgboostserver_rest

    # ## Other
    # You can choose the crds to not be installed if you already installed them
    # This applies to just the yaml template. If you set managerCreateResources=true then
    # it will try to create the CRD but only if it does not exist
    crd:
      create: true

    # Warning: credentials will be deprecated soon, please use defaultEnvSecretRefName above
    # For more info please check the documentation
    credentials:
      gcs:
        gcsCredentialFileName: gcloud-application-credentials.json
      s3:
        s3AccessKeyIDName: awsAccessKeyID
        s3SecretAccessKeyName: awsSecretAccessKey
    kubeflow: false

    # ## Engine parameters
    # Warning: Engine is being deprecated in favour of Orchestrator
    # For more information please read the Upgrading section in the documentation
    engine:
      grpc:
        port: 5001
      image:
        pullPolicy: IfNotPresent
        registry: docker.io
        repository: seldonio/engine
        tag: 1.2.2-dev
      logMessagesExternally: false
      port: 8000
      prometheus:
        path: /prometheus
      serviceAccount:
        name: default
      user: 8888

    # Explainer image
    explainer:
      image: seldonio/alibiexplainer:1.2.2-dev

Latest Seldon Images

Core images

| Description | Image URL | Stable Version | Development |
|---|---|---|---|
| Seldon Operator | seldonio/seldon-core-operator | 1.2.1 | 1.2.2-rc |
| Seldon Service Orchestrator (Go) | seldonio/seldon-core-executor | 1.2.1 | 1.2.2-rc |
| Seldon Service Orchestrator (Java) | seldonio/engine | 1.2.1 | 1.2.2-rc |

Pre-packaged servers

| Description | Image URL | Version |
|---|---|---|
| MLFlow Server REST | seldonio/mlflowserver_rest | 1.2.1 |
| MLFlow Server GRPC | seldonio/mlflowserver_grpc | 1.2.1 |
| SKLearn Server REST | seldonio/sklearnserver_rest | 1.2.1 |
| SKLearn Server GRPC | seldonio/sklearnserver_grpc | 1.2.1 |
| XGBoost Server REST | seldonio/xgboostserver_rest | 1.2.1 |
| XGBoost Server GRPC | seldonio/xgboostserver_grpc | 1.2.1 |

Language wrappers

| Description | Image URL | Stable Version | Development |
|---|---|---|---|
| Seldon Python 3 (3.6) Wrapper for S2I | seldonio/seldon-core-s2i-python3 | 1.2.1 | 1.2.2-rc |
| Seldon Python 3.6 Wrapper for S2I | seldonio/seldon-core-s2i-python36 | 1.2.1 | 1.2.2-rc |
| Seldon Python 3.7 Wrapper for S2I | seldonio/seldon-core-s2i-python37 | 1.2.1 | 1.2.2-rc |

Server proxies

| Description | Image URL | Stable Version |
|---|---|---|
| NVIDIA inference server proxy | seldonio/nvidia-inference-server-proxy | 0.1 |
| SageMaker proxy | seldonio/sagemaker-proxy | 0.1 |
| Tensorflow Serving REST proxy | seldonio/tfserving-proxy_rest | 0.7 |
| Tensorflow Serving GRPC proxy | seldonio/tfserving-proxy_grpc | 0.7 |

Python modules

| Description | Python Version | Version |
|---|---|---|
| seldon-core | >3.4,<3.7 | 1.2.1 |
| seldon-core | 2,>=3,<3.7 | 0.2.6 (deprecated) |

Incubating Language wrappers

| Description | Image URL | Stable Version | Development |
|---|---|---|---|
| Seldon Python ONNX Wrapper for S2I | seldonio/seldon-core-s2i-python3-ngraph-onnx | 0.3 | |
| Seldon Java Build Wrapper for S2I | seldonio/seldon-core-s2i-java-build | 0.1 | |
| Seldon Java Runtime Wrapper for S2I | seldonio/seldon-core-s2i-java-runtime | 0.1 | |
| Seldon R Wrapper for S2I | seldonio/seldon-core-s2i-r | 0.2 | |
| Seldon NodeJS Wrapper for S2I | seldonio/seldon-core-s2i-nodejs | 0.1 | 0.2-SNAPSHOT |

Java packages

| Description | Package | Version |
|---|---|---|
| Seldon Core Wrapper | seldon-core-wrapper | 0.1.5 |
| Seldon Core JPMML | seldon-core-jpmml | 0.0.1 |

Deprecated Language wrappers

| Description | Image URL | Stable Version | Development |
|---|---|---|---|
| Seldon Python 2 Wrapper for S2I | seldonio/seldon-core-s2i-python2 | 0.5.1 | deprecated |