docker, cluster, mlops - feliyur/exercises GitHub Wiki

| Command | Description |
|---|---|
| `docker run hello-world` | Sanity check. |
| `docker ps [-a]` | List containers. |
| `docker image ls` | List images. |
| `docker run -it [--name <container-name>] <image-name>:<tag>` | Run an interactive prompt in a container created from the image, optionally giving the container a name (for later reference). |
| `docker commit <container-id> <new-image-name>` | Save a container as a new image. The container ID can be taken from `docker ps`. |
| `docker start <container-name> && docker exec -it <container-name> <command>` | Restart a container and run a command within it. |
| `docker cp container:src_path\|host_src_path container:dest_path\|host_dest_path` | Copy files between host and containers. |
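
The start-and-exec row above can be wrapped in a small shell function. This is only a sketch: `docker_reenter` is a hypothetical helper name, and the `/bin/bash` default is an assumption (some images ship only `/bin/sh`).

```shell
# Hypothetical helper (not a docker command): restart a stopped container
# and run a command inside it.
docker_reenter() {
  container="$1"
  shift
  # Assumption: fall back to /bin/bash when no command is given.
  if [ "$#" -eq 0 ]; then
    set -- /bin/bash
  fi
  # "docker exec" only runs if "docker start" succeeded.
  docker start "$container" && docker exec -it "$container" "$@"
}
```

For example, `docker_reenter my-container ls /tmp` starts `my-container` and lists `/tmp` inside it.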

| Command | Use | Additional Notes |
|---|---|---|
| `docker context ls` | List all Docker contexts | Shows name, type, endpoints, and active context |
| `docker context use <name>` | Set the active Docker context | Switches Docker to use the specified context |
| `docker context show` | Display current context | Prints the name of the currently active context |
| `docker context inspect <name>` | View details of a specific context | Outputs detailed config including endpoints and TLS |
| `docker context create <name>` | Create a new Docker context | Use `--docker` to specify host, TLS options, etc. |
| `docker context rm <name>` | Remove a Docker context | Cannot remove the currently active context |

| Command | Action | Additional Notes |
|---|---|---|
| `docker compose up [-d] [--build] [--force-recreate] [-V]` | Starts all services. | `-d` runs in detached mode, `--build` forces images to be built, `--force-recreate` forces containers to be recreated. |
| `docker compose down [-v]` | Stops and removes all services. `-v` removes volumes, `--rmi all` removes images. | Also removes networks defined in the file. |
| `docker compose stop` | Stops running containers. | Containers can be restarted. |
| `docker-compose [--env-file <path>.env] [--project-directory <directory>] -f docker-compose.yml up --force-recreate -V -d <service name>` | Restarts a service, recreating its container. | The `-V` flag is necessary to also recreate the container storage. |
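
The last row can be sketched as a reusable function. `compose_recreate` is a hypothetical name, and the sketch assumes the `docker compose` plugin form and a compose file in the current directory; swap in `docker-compose` if that is what is installed.

```shell
# Hypothetical helper: recreate one service's container and its
# anonymous volumes, optionally with an explicit env file.
compose_recreate() {
  service="$1"
  env_file="${2:-}"
  if [ -n "$env_file" ]; then
    docker compose --env-file "$env_file" up --force-recreate -V -d "$service"
  else
    docker compose up --force-recreate -V -d "$service"
  fi
}
```

For example, `compose_recreate web ./prod.env` recreates a service named `web` (an illustrative name) using `./prod.env`.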

https://askubuntu.com/questions/477551/how-can-i-use-docker-without-sudo

    # Add the docker group if it doesn't already exist:
    sudo groupadd docker

    # Add the connected user "$USER" to the docker group. Change the user name to match
    # your preferred user if you do not want to use your current user:
    sudo gpasswd -a $USER docker

Either run `newgrp docker` or log out and back in to activate the changes to groups.

Run `docker run hello-world` to check that docker can run without sudo.
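
Whether the group change is already active in the current shell can also be checked without running a container. A minimal sketch, assuming a POSIX shell; `has_group` is a hypothetical helper name, not a docker command.

```shell
# has_group WANTED [GROUP...]: succeed if WANTED appears among the
# remaining arguments (for example the output of "id -nG").
has_group() {
  wanted="$1"
  shift
  for group in "$@"; do
    if [ "$group" = "$wanted" ]; then
      return 0
    fi
  done
  return 1
}
```

Usage: `has_group docker $(id -nG) && echo "docker group is active"`. If nothing is printed, the current session has not picked up the new group yet.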

http://blog.fx.lv/2017/08/running-gui-apps-in-docker-containers-using-vnc/

Copying here for the case that the above page becomes unavailable:

- Run the container exposing VNC port to host

docker run -p 5901:5901 -t -i ubuntu- Install VNC server within the container and run it

sudo apt-get install vnc4server

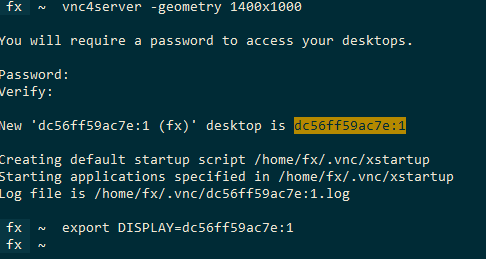

vnc4server -geometry 1400x1000- Export

$DISPLAYenvironment variable:

export DISPLAY=<change this to the right DISPLAY name from vnc server output, see screenshot below>-

Run GUI app

-

Connect using VNC to the exported port (

127.0.0.1:5901in the above snippet).

Taken from here.

First, make sure that the NVIDIA driver is installed and recognizes the GPU (e.g. by running `nvidia-smi`).

    distribution=$(. /etc/os-release; echo $ID$VERSION_ID)
    # NOTE: apt-key is deprecated and produces a warning as of Ubuntu 22.04.
    # This step will need to be modified to use the gpg command instead.
    curl -s -L https://nvidia.github.io/nvidia-docker/gpgkey | sudo apt-key add -
    curl -s -L https://nvidia.github.io/nvidia-docker/$distribution/nvidia-docker.list | sudo tee /etc/apt/sources.list.d/nvidia-docker.list

Install nvidia-docker2:

    sudo apt-get update
    sudo apt-get install -y nvidia-docker2
    sudo systemctl restart docker
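
The `distribution` value used in the repository URL above is the `ID` and `VERSION_ID` fields of `/etc/os-release` joined together. The sketch below shows how it is derived; `nvidia_distribution` is a hypothetical function name, and the optional file argument exists only so the logic can be tried against a sample file.

```shell
# Print "$ID$VERSION_ID" from an os-release style file
# (defaults to /etc/os-release).
nvidia_distribution() {
  os_release="${1:-/etc/os-release}"
  # Source the file in a subshell so ID and VERSION_ID do not leak
  # into the calling shell.
  ( . "$os_release" && printf '%s%s\n' "$ID" "$VERSION_ID" )
}
```

On a system whose os-release file contains `ID=ubuntu` and `VERSION_ID="20.04"`, this prints `ubuntu20.04`.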

Run a base image:

    docker run -it --gpus all nvidia/cuda:11.0.3-base-ubuntu20.04 nvidia-smi

Image alternatives:

- base: minimal option with the essential CUDA runtime

- runtime: more fully-featured option that includes the CUDA math libraries and NCCL for cross-GPU communication

- devel: everything from runtime as well as headers and development tools for creating custom CUDA images

The image can then be used as the base in a Dockerfile:

    FROM nvidia/cuda:11.4.0-base-ubuntu20.04
    RUN apt-get update
    RUN apt-get install -y python3 python3-pip
    RUN pip install tensorflow-gpu
    COPY tensor-code.py .
    ENTRYPOINT ["python3", "tensor-code.py"]
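
Building and running such an image with GPU access might look like the sketch below. `build_and_run_gpu` is a hypothetical helper, and the image tag passed to it is up to the caller; `--gpus all` is the same flag used in the `nvidia-smi` check above.

```shell
# Hypothetical helper: build an image from a Dockerfile in CONTEXT_DIR
# (default: current directory) and run it with all GPUs exposed.
build_and_run_gpu() {
  image_tag="$1"
  context_dir="${2:-.}"
  # --rm removes the container once the entrypoint exits.
  docker build -t "$image_tag" "$context_dir" && docker run --rm --gpus all "$image_tag"
}
```

For example, `build_and_run_gpu tf-gpu-demo` builds from the current directory and runs the result; `tf-gpu-demo` is an illustrative tag.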

If a different base image is needed, CUDA support can be added manually; see the link above or https://stackoverflow.com/questions/25185405/using-gpu-from-a-docker-container/64422438#64422438

Setting up a server (using docker): https://clear.ml/docs/latest/docs/deploying_clearml/clearml_server_linux_mac + bringing it up.

The server starts on http://localhost:8080. Go to the profile page (top-right button, or http://localhost:8080/profile), add credentials, and copy them as input into `clearml-init` (below).

Locally:

    pip install clearml
    clearml-init

Using Ollama and Open WebUI:

    docker pull ghcr.io/open-webui/open-webui:main
    docker run -d -p 3000:8080 -v open-webui:/app/backend/data --name open-webui ghcr.io/open-webui/open-webui:main

| Command | Description |
|---|---|
| `bsub` | Submit a job. Can either provide full arguments or a `.bsub` script file. |
| `bsub -b "HH:MM" ...` | Schedule the job to start at the specified time. |
| `bjobs` | List user jobs. `bjobs -l <job id>` displays details about a job. Use `-w` or `-W` for untruncated output. |
| `bkill [-r] <job id>` | Kill a job (the `-r` flag force-kills immediately). |
| `battach -L /bin/bash <job id>` | Attach to a running interactive session. |
| `blimits -u <username>` | Check the compute resource quota for a user. |
| `bqueues [-l <queue name>]`, `qstat` | Show available queues and their running / pending job counts. |
| `btop` | Move a pending job to the top of the (per user) scheduling order. |
| `bpeek <job id>` | View stdout from a job. `-f` shows output continuously using `tail`. |
| `bmgroup [-w <queue_name>]` | Show host queue affinity. `bmgroup -w <queue name> \| tr ' ' '\n' \| grep rng \| wc -l` gets the host count on a queue. |
| `bhosts` | Show the host list. |
| `bmod -M <memory limit> -W <runtime limit> <other args>` | Modify job resource and run-time limits. NOTE: this tends to reset other settings such as affinity. |
| `bstop <job id>` and `bresume <job id>` | Suspend and resume a job; can be used on running or pending jobs. |
| `squota <directory>` | Shows quota usage information for the containing network share. |
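
The host-count pipeline from the `bmgroup` row can be kept as a small filter. This is a sketch under two assumptions: that `bmgroup -w` prints host names separated by spaces, as the pipeline in the table implies, and that the hosts of interest share a name fragment such as `rng`. `count_matching_hosts` is a hypothetical name.

```shell
# count_matching_hosts PATTERN: read a space-separated host list on stdin
# and print how many entries match PATTERN.
count_matching_hosts() {
  # Put one host per line, then let grep count the matching lines.
  tr -s ' ' '\n' | grep -c -- "$1"
}
```

Usage: `bmgroup -w <queue name> | count_matching_hosts rng`.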

| command | module | description |
|---|---|---|
| `iquota`, `quota_advisor` | quota | |
| `ncdu` | | |
| `mc`, `tmux`, `gcc`, `boost`, `cuda`, `conda` | | |

| command | description |
|---|---|
| `/usr/lpp/mmfs/bin/mmlsquota -j <drive> --block-size G rng-gpu01`<br>`/usr/lpp/mmfs/bin/mmlsattr -L <drive>` | Check quota. |
| `bsub -gpu='num=<num gpus>:mode=exclusive_process:mps=no:aff=no'` | Schedules quicker than without the arguments. |
| `bsub -gpu='num=<num gpus>:mig=<mig slices>'` | MIG usage (A100, H100 and later). |
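
The two `-gpu` strings above differ only in their suffix, so they can be produced by one function. A minimal sketch; `lsf_gpu_spec` is a hypothetical helper that only formats the string and does not call `bsub`.

```shell
# lsf_gpu_spec NUM_GPUS [MIG_SLICES]: print a value for bsub's -gpu option.
lsf_gpu_spec() {
  num_gpus="$1"
  mig_slices="${2:-}"
  if [ -n "$mig_slices" ]; then
    # MIG form from the table above.
    printf 'num=%s:mig=%s\n' "$num_gpus" "$mig_slices"
  else
    # Exclusive-process form from the table above.
    printf 'num=%s:mode=exclusive_process:mps=no:aff=no\n' "$num_gpus"
  fi
}
```

For example, `lsf_gpu_spec 2` prints `num=2:mode=exclusive_process:mps=no:aff=no`, which can then be passed as the value of the `-gpu` option.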