Newton's method - avachon100501/ultrafractalcollection GitHub Wiki

Newton's method, also known as the Newton-Raphson method after Isaac Newton and Joseph Raphson, is one of the most commonly used root-finding algorithms. It produces successively better approximations to the roots (or zeroes) of a real-valued function. The most basic version starts with a single-variable function f defined for a real variable x, the function's derivative f', and an initial guess x0 for a root of f. If the function satisfies sufficient assumptions and the initial guess is close, then x1 = x0 - f(x0)/f'(x0) is a better approximation of the root than x0. Geometrically, (x1, 0) is the intersection of the x-axis and the tangent of the graph of f at (x0, f(x0)): that is, the improved guess is the unique root of the linear approximation at the initial point. The process is repeated as xn+1 = xn - f(xn)/f'(xn) until a sufficiently precise value is reached. This algorithm is first in the class of Householder's methods, succeeded by Halley's method. The method can also be extended to complex functions and to systems of equations.

History

The name "Newton's method" is derived from Isaac Newton's description of a special case of the method in De analysi per aequationes numero terminorum infinitas (written in 1669, published in 1711 by William Jones) and in De metodis fluxionum et serierum infinitarum (written in 1671, translated and published as Method of Fluxions in 1736 by John Colson). However, his method differs substantially from the modern method given above. Newton applied the method only to polynomials, starting with an initial root estimate and extracting a sequence of error corrections. He used each correction to rewrite the polynomial in terms of the remaining error, and then solved for a new correction by neglecting higher-degree terms. He did not explicitly connect the method with derivatives or present a general formula. Newton applied this method to both numerical and algebraic problems, producing Taylor series in the latter case.

Newton may have derived his method from a similar but less precise method by Vieta. The essence of Vieta's method can be found in the work of the Persian mathematician Sharaf al-Din al-Tusi, while his successor Jamshīd al-Kāshī used a form of Newton's method to solve x^P − N = 0 to find roots of N (Ypma 1995). A special case of Newton's method for calculating square roots was known since ancient times and is often called the Babylonian method.

Newton's method was used by 17th-century Japanese mathematician Seki Kōwa to solve single-variable equations, though the connection with calculus was missing.

Newton's method was first published in 1685 in A Treatise of Algebra both Historical and Practical by John Wallis. In 1690, Joseph Raphson published a simplified description in Analysis aequationum universalis. Raphson also applied the method only to polynomials, but he avoided Newton's tedious rewriting process by extracting each successive correction from the original polynomial. This allowed him to derive a reusable iterative expression for each problem. Finally, in 1740, Thomas Simpson described Newton's method as an iterative method for solving general nonlinear equations using calculus, essentially giving the description above. In the same publication, Simpson also gives the generalization to systems of two equations and notes that Newton's method can be used for solving optimization problems by setting the gradient to zero.

Arthur Cayley in 1879 in The Newton–Fourier imaginary problem was the first to notice the difficulties in generalizing Newton's method to complex roots of polynomials with degree greater than 2 and complex initial values. This opened the way to the study of the theory of iterations of rational functions.

Description

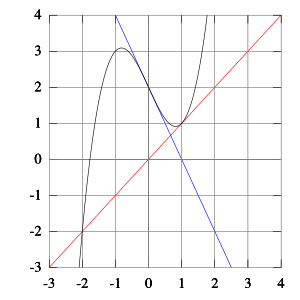

The function f is shown in blue and the tangent line is in red. We see that xn+1 is a better approximation than xn for the root x of the function f.

The idea is to start with an initial guess which is reasonably close to the true root, then to approximate the function by its tangent line using calculus, and finally to compute the x-intercept of this tangent line by elementary algebra. This x-intercept will typically be a better approximation to the original function's root than the first guess, and the method can be iterated.

More formally, suppose f: (a, b) → ℝ is a differentiable function defined on the interval (a, b) with values in the real numbers ℝ, and we have some current approximation xn. Then we can derive the formula for a better approximation, xn+1, by referring to the diagram above. The equation of the tangent line to the curve y = f(x) at x = xn is y = f'(xn)(x - xn) + f(xn), where f' denotes the derivative. The x-intercept of this line (the value of x which makes y = 0) is taken as the next approximation, xn+1, to the root, so that the equation of the tangent line is satisfied when (x, y) = (xn+1, 0): 0 = f'(xn)(xn+1 - xn) + f(xn). Solving for xn+1 gives xn+1 = xn - f(xn)/f'(xn).

We start the process with some arbitrary initial value x0. (The closer to the zero, the better. But, in the absence of any intuition about where the zero might lie, a "guess and check" method might narrow the possibilities to a reasonably small interval by appealing to the intermediate value theorem.) The method will usually converge, provided this initial guess is close enough to the unknown zero, and that f′(x0) ≠ 0. Furthermore, for a zero of multiplicity 1, the convergence is at least quadratic (see rate of convergence) in a neighborhood of the zero, which intuitively means that the number of correct digits roughly doubles in every step. More details can be found in the analysis section below.
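The iteration described above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the function name `newton`, the stopping rule (step size below `tol`), and the default limits are assumptions chosen for the example.

```python
def newton(f, df, x0, tol=1e-12, max_iter=50):
    """Iterate x_{n+1} = x_n - f(x_n)/f'(x_n) until the step is below tol."""
    x = x0
    for _ in range(max_iter):
        dfx = df(x)
        if dfx == 0:
            # The tangent is horizontal, so it has no x-intercept.
            raise ZeroDivisionError("derivative vanished at x = %r" % x)
        step = f(x) / dfx
        x -= step
        if abs(step) < tol:
            return x
    raise RuntimeError("no convergence within max_iter iterations")


# Example: the positive root of f(x) = x^2 - 2, starting from x0 = 1.
root = newton(lambda x: x * x - 2, lambda x: 2 * x, x0=1.0)
print(root)
```

Stopping on the step size is only one possible criterion; checking |f(x)| instead, or both, is equally common.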

Householder's methods are similar but have higher order for even faster convergence. However, the extra computations required for each step can slow down the overall performance relative to Newton's method, particularly if f or its derivatives are computationally expensive to evaluate. For a fractal, a higher-order method generally produces fewer interesting patterns.

Practical considerations

Newton's method is an extremely powerful technique. In general the convergence is quadratic: as the method converges on the root, the difference between the root and the approximation is squared (the number of accurate digits roughly doubles) at each step. However, there are some difficulties with the method.

Difficulty in calculating derivative of a function

Newton's method requires that the derivative can be calculated directly. An analytical expression for the derivative may not be easily obtainable or could be expensive to evaluate. In these situations, it may be appropriate to approximate the derivative by using the slope of a line through two nearby points on the function. Using this approximation would result in something like the secant method whose convergence is slower than that of Newton's method.
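As a rough sketch of that idea, the secant method replaces f'(xn) with the slope of the line through the two most recent iterates. The function name `secant` and the tolerance values are assumptions made for this example.

```python
def secant(f, x0, x1, tol=1e-12, max_iter=100):
    """Newton-like iteration with the derivative replaced by a secant slope."""
    f0, f1 = f(x0), f(x1)
    for _ in range(max_iter):
        if f1 == f0:
            # The secant line is horizontal, so it has no x-intercept.
            raise ZeroDivisionError("flat secant line")
        x2 = x1 - f1 * (x1 - x0) / (f1 - f0)
        if abs(x2 - x1) < tol:
            return x2
        x0, f0 = x1, f1
        x1, f1 = x2, f(x2)
    raise RuntimeError("no convergence within max_iter iterations")


# Example: the positive root of x^2 - 2, using the two starting points 1 and 2.
secant_root = secant(lambda x: x * x - 2, 1.0, 2.0)
print(secant_root)
```

Each step needs only one new evaluation of f and no derivative, which is the trade made for the slower convergence.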

Alternatively, one could also use Steffensen's method, achieving quadratic convergence without the need for a derivative.
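A minimal sketch of Steffensen's method follows, assuming the common form in which the derivative is replaced by the difference quotient (f(x + f(x)) - f(x)) / f(x). The function name `steffensen` and the stopping rule are illustrative choices.

```python
def steffensen(f, x0, tol=1e-12, max_iter=100):
    """Derivative-free iteration x <- x - f(x)^2 / (f(x + f(x)) - f(x))."""
    x = x0
    for _ in range(max_iter):
        fx = f(x)
        if fx == 0:
            return x  # landed exactly on a root
        denom = f(x + fx) - fx
        if denom == 0:
            raise ZeroDivisionError("difference quotient vanished")
        step = fx * fx / denom
        x -= step
        if abs(step) < tol:
            return x
    raise RuntimeError("no convergence within max_iter iterations")


# Example: the positive root of x^2 - 2, starting from x0 = 1.5.
steffensen_root = steffensen(lambda x: x * x - 2, 1.5)
print(steffensen_root)
```

The method costs two evaluations of f per step and, like Newton's method, generally needs a starting value reasonably close to the root.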

Failure of the method to converge to the root

It is important to review the proof of quadratic convergence of Newton's method before implementing it. Specifically, one should review the assumptions made in the proof. For situations where the method fails to converge, it is because the assumptions made in this proof are not met.

Overshoot

If the first derivative is not well behaved in the neighborhood of a particular root, the method may overshoot and diverge from that root. An example of a function with one root, for which the derivative is not well behaved in the neighborhood of the root, is f(x) = |x|^a with 0 < a < 0.5: the root is overshot and the sequence of iterates diverges. For a = 0.5, the root is still overshot, but the sequence oscillates between two values. For 0.5 < a < 1, the root is still overshot but the sequence converges, and for a ≥ 1 the root is not overshot at all.

In some cases, Newton's method can be stabilized by using successive over-relaxation, or the speed of convergence can be increased by using the same method.

Stationary point

If a stationary point of the function is encountered, the derivative is zero and the method will fail and terminate due to division by zero.

Poor initial estimate

A large error in the initial estimate can contribute to non-convergence of the algorithm. To overcome this problem one can often linearize the function that is being optimized using calculus, logs, differentials, or even evolutionary algorithms such as stochastic tunneling. Good initial estimates lie close to the final globally optimal parameter estimate. In nonlinear regression, the sum of squared errors (SSE) is only "close to" parabolic in the region of the final parameter estimates. Initial estimates found in this region allow the Newton–Raphson method to converge quickly. It is only here that the Hessian matrix of the SSE is positive definite and the first derivative of the SSE is close to zero.

Mitigation of non-convergence

In a robust implementation of Newton's method, it is common to place limits on the number of iterations, bound the solution to an interval known to contain the root, and combine the method with a more robust root finding method.
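One way to combine these safeguards is sketched below: an iteration cap, a bracketing interval, and a bisection step whenever the Newton step would leave the bracket. The function name `safeguarded_newton` and the specific fallback rule are assumptions for illustration; production libraries use more refined hybrids.

```python
def safeguarded_newton(f, df, lo, hi, tol=1e-12, max_iter=100):
    """Newton's method confined to a bracket [lo, hi], with bisection fallback."""
    flo, fhi = f(lo), f(hi)
    if flo == 0:
        return lo
    if fhi == 0:
        return hi
    if flo * fhi > 0:
        raise ValueError("f(lo) and f(hi) must have opposite signs")
    x = 0.5 * (lo + hi)
    for _ in range(max_iter):
        fx = f(x)
        if fx == 0:
            return x
        # Shrink the bracket so that it still contains a sign change.
        if flo * fx < 0:
            hi = x
        else:
            lo, flo = x, fx
        dfx = df(x)
        candidate = x - fx / dfx if dfx != 0 else None
        # Fall back to bisection if the Newton step is undefined or escapes.
        if candidate is None or not (lo <= candidate <= hi):
            candidate = 0.5 * (lo + hi)
        if abs(candidate - x) < tol:
            return candidate
        x = candidate
    raise RuntimeError("no convergence within max_iter iterations")


# Example: f(x) = x^3 - 2x + 2 changes sign on [-3, 0].
g = lambda x: x ** 3 - 2 * x + 2
dg = lambda x: 3 * x * x - 2
safe_root = safeguarded_newton(g, dg, -3.0, 0.0)
print(safe_root)
```

The bracket guarantees that the iterate never wanders away from a known sign change, at the cost of requiring two starting points with opposite function signs.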

Slow convergence for roots of multiplicity greater than 1

If the root being sought has multiplicity greater than one, the convergence rate is merely linear (errors reduced by a constant factor at each step) unless special steps are taken. When there are two or more roots that are close together then it may take many iterations before the iterates get close enough to one of them for the quadratic convergence to be apparent. However, if the multiplicity m of the root is known, the following modified algorithm preserves the quadratic convergence rate: xn+1 = xn - m * f(xn)/f'(xn). This is equivalent to using successive over-relaxation. On the other hand, if the multiplicity m of the root is not known, it is possible to estimate m after carrying out one or two iterations, and then use that value to increase the rate of convergence.
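The effect of the multiplicity factor can be seen in a small experiment. The sketch below uses the hypothetical test function f(x) = (x - 1)^2 (x + 2), which has a double root at x = 1, and counts iterations with m = 1 and m = 2; the helper name `newton_with_multiplicity` is an assumption for this example.

```python
def newton_with_multiplicity(f, df, x0, m=1, tol=1e-10, max_iter=200):
    """Iterate x <- x - m*f(x)/f'(x); returns (approximate root, iterations)."""
    x = x0
    for n in range(max_iter):
        fx = f(x)
        if fx == 0:
            return x, n
        step = m * fx / df(x)
        x -= step
        if abs(step) < tol:
            return x, n + 1
    raise RuntimeError("no convergence within max_iter iterations")


# f has a double root at x = 1 and a simple root at x = -2.
f = lambda x: (x - 1) ** 2 * (x + 2)
df = lambda x: 3 * (x - 1) * (x + 1)

plain_root, plain_steps = newton_with_multiplicity(f, df, 2.0, m=1)
fixed_root, fixed_steps = newton_with_multiplicity(f, df, 2.0, m=2)
print(plain_steps, fixed_steps)
```

With m = 1 the error is roughly halved at each step, while m = 2 restores the rapid convergence seen at simple roots, so the second count should be much smaller.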

Failure analysis

Newton's method is only guaranteed to converge if certain conditions are satisfied. If the assumptions made in the proof of quadratic convergence are met, the method will converge. For the following subsections, failure of the method to converge indicates that the assumptions made in the proof were not met.

Bad starting points

In some cases the conditions on the function that are necessary for convergence are satisfied, but the point chosen as the initial point is not in the interval where the method converges. This can happen, for example, if the function whose root is sought approaches zero asymptotically as x goes to ∞ or −∞. In such cases a different method, such as bisection, should be used to obtain a better estimate for the zero to use as an initial point.

Iteration point is stationary

Consider the function: f(x) = 1 - x^2.

It has a maximum at x = 0 and solutions of f(x) = 0 at x = ±1. If we start iterating from the stationary point x0 = 0 (where the derivative is zero), x1 will be undefined, since the tangent at (0,1) is parallel to the x-axis: x1 = x0 - f(x0)/f'(x0) = 0 - (1/0).

The same issue occurs if, instead of the starting point, any iteration point is stationary. Even if the derivative is small but not zero, the next iteration will be a far worse approximation.

Starting point enters a cycle/infinite loop

The tangent lines of x^3 − 2x + 2 at 0 and 1 intersect the x-axis at 1 and 0 respectively, illustrating why Newton's method oscillates between these values for some starting points.

For some functions, some starting points may enter an infinite cycle, preventing convergence. Let f(x) = x^3 - 2x + 2 and take 0 as the starting point. The first iteration produces 1 and the second iteration returns to 0 so the sequence will alternate between the two without converging to a root. In fact, this 2-cycle is stable: there are neighborhoods around 0 and around 1 from which all points iterate asymptotically to the 2-cycle (and hence not to the root of the function). In general, the behavior of the sequence can be very complex (see Newton fractal). The real solution of this equation is −1.76929235….
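The 2-cycle is easy to reproduce numerically. The short sketch below lists the iterates from x0 = 0 and from a nearby point; the helper name `newton_iterates` and the nearby starting value 0.05 are assumptions made for the example.

```python
def newton_iterates(f, df, x0, n):
    """Return [x0, x1, ..., xn] produced by Newton's method."""
    xs = [x0]
    for _ in range(n):
        x = xs[-1]
        xs.append(x - f(x) / df(x))
    return xs


f = lambda x: x ** 3 - 2 * x + 2
df = lambda x: 3 * x ** 2 - 2

cycle = newton_iterates(f, df, 0.0, 6)
print(cycle)  # [0.0, 1.0, 0.0, 1.0, 0.0, 1.0, 0.0]

# A start close to 0 is drawn into the same cycle instead of the root.
nearby = newton_iterates(f, df, 0.05, 20)
print(nearby[-2], nearby[-1])
```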

Derivative issues

If the function is not continuously differentiable in a neighborhood of the root then it is possible that Newton's method will always diverge and fail, unless the solution is guessed on the first try.

Derivative does not exist at root

A simple example of a function where Newton's method diverges is trying to find the cube root of zero. The cube root is continuous and infinitely differentiable, except for x = 0, where its derivative is undefined: f(x) = cbrt(x).

For any iteration point xn, the next iteration point will be:

xn+1 = xn - f(xn)/f'(xn) = xn - xn^(1/3)/((1/3)*xn^((1/3)-1)) = xn - 3xn = -2xn.

The algorithm overshoots the solution and lands on the other side of the y-axis, farther away than it initially was; applying Newton's method actually doubles the distances from the solution at each iteration.

In fact, the iterations diverge to infinity for every f(x) = |x|^α, where 0 < α < 0.5. In the limiting case of α = 0.5 (square root), the iterations will alternate indefinitely between points x0 and −x0, so they do not converge in this case either.
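The doubling for the cube root can be checked directly. This sketch defines a real cube root by hand (the helper names `cbrt` and `d_cbrt` are assumptions for the example), because raising a negative float to the power 1/3 in Python does not give the real cube root.

```python
import math


def cbrt(x):
    """Real cube root that also works for negative x."""
    return math.copysign(abs(x) ** (1.0 / 3.0), x)


def d_cbrt(x):
    """Derivative of the real cube root, defined for x != 0."""
    return (1.0 / 3.0) * abs(x) ** (-2.0 / 3.0)


x = 1.0
xs = [x]
for _ in range(5):
    x = x - cbrt(x) / d_cbrt(x)
    xs.append(x)

# Up to rounding, each iterate is -2 times the previous one.
print(xs)
```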

Discontinuous derivative

If the derivative is not continuous at the root, then convergence may fail to occur in any neighborhood of the root. Consider the function

f(x) = 0 (if x = 0), f(x) = x + x^2*sin(2/x) (if x ≠ 0). Its derivative is: f'(x) = 1 (if x = 0), f'(x) = 1 + 2x*sin(2/x) - 2*cos(2/x) (if x ≠ 0).

Within any neighborhood of the root, this derivative keeps changing sign as x approaches 0 from the right (or from the left) while f(x) ≥ x − x^2 > 0 for 0 < x < 1.

So f(x)/f′(x) is unbounded near the root, and Newton's method will diverge almost everywhere in any neighborhood of it, even though:

- the function is differentiable (and thus continuous) everywhere;

- the derivative at the root is nonzero;

- f is infinitely differentiable except at the root; and

- the derivative is bounded in a neighborhood of the root (unlike f(x)/f′(x)).

Non-quadratic convergence

In some cases the iterates converge but do not converge as quickly as promised. In these cases simpler methods converge just as quickly as Newton's method.

Zero derivative

If the first derivative is zero at the root, then convergence will not be quadratic. Let f(x) = x^2 then f'(x) = 2x and consequently x - f(x)/f'(x) = x/2.

So convergence is not quadratic, even though the function is infinitely differentiable everywhere.

Similar problems occur even when the root is only "nearly" double. For example, let f(x) = x^2(x - 1000) + 1.

Then the first few iterations starting at x0 = 1 are

x0 = 1

x1 = 0.500250376…

x2 = 0.251062828…

x3 = 0.127507934…

x4 = 0.067671976…

x5 = 0.041224176…

x6 = 0.032741218…

x7 = 0.031642362…

It takes six iterations to reach a point where the convergence appears to be quadratic.
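The table can be regenerated with a few lines of Python. This is a plain sketch of the iteration for f(x) = x^2(x - 1000) + 1 with its derivative written out by hand.

```python
def f(x):
    return x * x * (x - 1000) + 1


def df(x):
    # Derivative of x^3 - 1000x^2 + 1.
    return 3 * x * x - 2000 * x


x = 1.0
iterates = [x]
for _ in range(7):
    x = x - f(x) / df(x)
    iterates.append(x)

for n, value in enumerate(iterates):
    print(f"x{n} = {value:.9f}")
```

For the first several steps the iterate is roughly halved each time, which is the linear behavior expected near a double root; only once the iterates are close to the root does the error start shrinking much faster.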

No second derivative

If there is no second derivative at the root, then convergence may fail to be quadratic. Let f(x) = x + x^(4/3).

Then f'(x) = 1 + (4/3)x^(1/3).

And f''(x) = (4/9)*x^(-2/3) except when x = 0 where it is undefined. Given xn, xn+1 = xn - f(xn)/f'(xn) = ((1/3)*xn^(4/3))/(1 + (4/3)*xn^(1/3))

which has approximately 4/3 times as many bits of precision as xn has. This is less than the 2 times as many which would be required for quadratic convergence. So the convergence of Newton's method (in this case) is not quadratic, even though: the function is continuously differentiable everywhere; the derivative is not zero at the root; and f is infinitely differentiable except at the desired root.

How fractals are generated

To represent fractals, we work in the complex plane, so a single real number x is no longer enough. A complex number is written z = x + yi, where x is the real part, y is the imaginary part, and i is the imaginary unit, which satisfies i^2 = -1. Applying Newton's method to a polynomial p of a complex variable gives the iteration zn+1 = zn - p(zn)/p'(zn). Coloring each starting point z0 according to the behavior of its iterates produces an image, and the Newton fractal is the boundary set in the complex plane that this image reveals. It is the Julia set of the map z ↦ z - p(z)/p'(z). When there are no attractive cycles (of order greater than 1), it divides the complex plane into regions G_k, each of which is associated with a root zeta_k of the polynomial, k = 1,...,deg(p). In this way the Newton fractal is similar to the Mandelbrot set, and like other fractals it exhibits an intricate appearance arising from a simple description. It is relevant to numerical analysis because it shows that (outside the region of quadratic convergence) the Newton method can be very sensitive to its choice of start point.
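A rough way to see these regions without any graphics library is to classify each point of a small grid by the root its iterates approach. The sketch below does this for p(z) = z^3 - 1 and prints one character per grid point; the names `basin_index` and `render`, the grid size, and the character choices are all assumptions for illustration and are unrelated to how Ultra Fractal renders its images.

```python
import cmath

# The three cube roots of unity, the zeroes of p(z) = z^3 - 1.
ROOTS = [cmath.exp(2j * cmath.pi * k / 3) for k in range(3)]


def basin_index(z, max_iter=50, tol=1e-6):
    """Return the index of the root that z converges to, or -1 if none."""
    for _ in range(max_iter):
        dz = 3 * z * z
        if dz == 0:
            return -1  # Newton step undefined
        z = z - (z * z * z - 1) / dz
        for k, root in enumerate(ROOTS):
            if abs(z - root) < tol:
                return k
    return -1


def render(width=48, height=24, extent=1.5):
    """Return text rows covering the square [-extent, extent]^2."""
    symbols = {0: "#", 1: "+", 2: ".", -1: " "}
    rows = []
    for j in range(height):
        y = extent - 2 * extent * j / (height - 1)
        row = ""
        for i in range(width):
            x = -extent + 2 * extent * i / (width - 1)
            row += symbols[basin_index(complex(x, y))]
        rows.append(row)
    return rows


print("\n".join(render()))
```

Replacing the characters with colors and the coarse grid with image pixels gives the familiar three-lobed picture; the intricate structure appears where the three symbol regions meet.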

Newton fractal for three third-degree roots (p(z) = z^3 - 1).

Generalization of Newton fractals

A generalization of Newton's iteration is zn+1 = zn - a * (p(zn)/p'(zn)), where a is any complex number. The special choice a = 1 corresponds to the Newton fractal. The fixed points of this map are stable when a lies inside the disk of radius 1 centered at 1. When a is outside this disk, the fixed points are locally unstable; however, the map still exhibits a fractal structure in the sense of a Julia set. If p is a polynomial of degree d, then the sequence zn is bounded provided that a is inside a disk of radius d centered at d.
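The stability condition can be probed numerically by taking one relaxed step from a point very close to a root and measuring how the distance to the root changes. The sketch below does this for p(z) = z^3 - 1 near the root z = 1; the helper names `relaxed_newton_step` and `contraction_ratio` and the offset 1e-3 are assumptions for the example. Near a simple root the ratio is approximately |1 - a|, which is below 1 exactly when a lies in the disk of radius 1 centered at 1.

```python
def p(z):
    return z ** 3 - 1


def dp(z):
    return 3 * z ** 2


def relaxed_newton_step(z, a):
    """One step of z <- z - a * p(z)/p'(z)."""
    return z - a * p(z) / dp(z)


def contraction_ratio(a, offset=1e-3):
    """Distance to the root z = 1 after one step, relative to the distance before."""
    z0 = 1 + offset
    z1 = relaxed_newton_step(z0, a)
    return abs(z1 - 1) / abs(z0 - 1)


for a in (0.5, 1.0, 2.5, 1 + 0.5j):
    print(a, contraction_ratio(a))
```

For a = 1 the ratio is tiny because the ordinary Newton step converges quadratically, while for a = 2.5 the ratio exceeds 1 and nearby points are pushed away from the root.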

More generally, Newton's fractal is a special case of a Julia set.

When dealing with complex functions, Newton's method can be directly applied to find their zeroes. Each zero has a basin of attraction in the complex plane, the set of all starting values that cause the method to converge to that particular zero. These sets can be mapped as in the image shown. For many complex functions, the boundaries of the basins of attraction are fractals.

In some cases there are regions in the complex plane which are not in any of these basins of attraction, meaning the iterates do not converge. For example, if one uses a real initial condition to seek a root of x^2 + 1, all subsequent iterates will be real numbers and so the iterations cannot converge to either root, since both roots are non-real. In this case almost all real initial conditions lead to chaotic behavior, while some initial conditions iterate either to infinity or to repeating cycles of any finite length.

Curt McMullen has shown that for any possible purely iterative algorithm similar to Newton's method, the algorithm will diverge on some open regions of the complex plane when applied to some polynomial of degree 4 or higher. However, McMullen gave a generally convergent algorithm for polynomials of degree 3.

Newton fractal for the function p(z) = z^8 + 15z^4 - 16

Newton fractal for the arctangent function (p(z) = atan(z))

This fractal is a variant of Newton's method, for the function p(z) = z^3 + (c - 1) * z - c, yielding some miniature Mandelbrot sets (minibrots).

The same fractal, zoomed in, with the minibrot more clear in detail.

Sources: Wikipedia

Fractals rendered with Ultra Fractal 6.