2020 09 06 Specificity plots - WojciechMigda/TruRL GitHub Wiki

Run parameters:

Episodes: 1000

max_episode_steps: 200

    TsetliniClassifierBitwise({
        "threshold": 32000,
        "s": <#####>,
        "number_of_regressor_clauses": 3200,
        "number_of_states": 127,
        "boost_true_positive_feedback": 1,
        "random_state": 1,
        "n_jobs": 2,
        "clause_output_tile_size": 16,
        "weighted": true,
        "verbose": false
    })
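The `<#####>` placeholder stands for the specificity value, the only parameter that changed between runs. As a minimal sketch, the seven parameter sets could be generated as below. The helper name `make_param_sets` and the JSON serialization are assumptions made for illustration; how the experiment scripts actually pass parameters is not shown on this page.

```python
import json

# Fixed parameters, copied from the run configuration above.
BASE_PARAMS = {
    "threshold": 32000,
    "number_of_regressor_clauses": 3200,
    "number_of_states": 127,
    "boost_true_positive_feedback": 1,
    "random_state": 1,
    "n_jobs": 2,
    "clause_output_tile_size": 16,
    "weighted": True,
    "verbose": False,
}

# Specificity values examined in this experiment.
S_VALUES = [2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0]


def make_param_sets(base, s_values):
    """Return one parameter dict per specificity value (hypothetical helper)."""
    return [{**base, "s": s} for s in s_values]


param_sets = make_param_sets(BASE_PARAMS, S_VALUES)
for params in param_sets:
    # Serialized to JSON only for display; this is an assumption.
    print(json.dumps(params))
```

Keeping the fixed parameters in a single dict makes it explicit that `s` is the only variable in the sweep.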

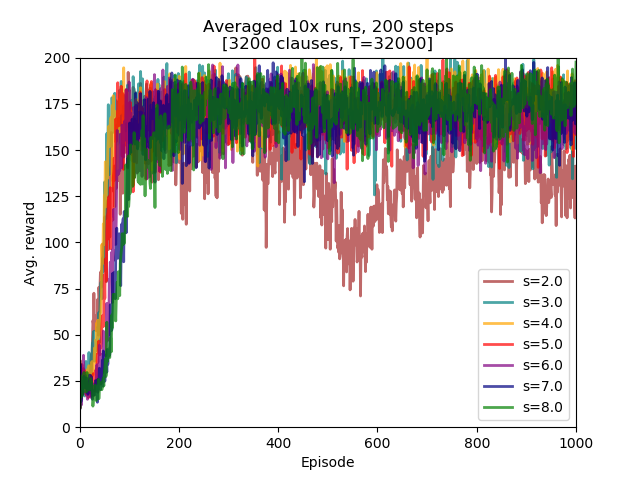

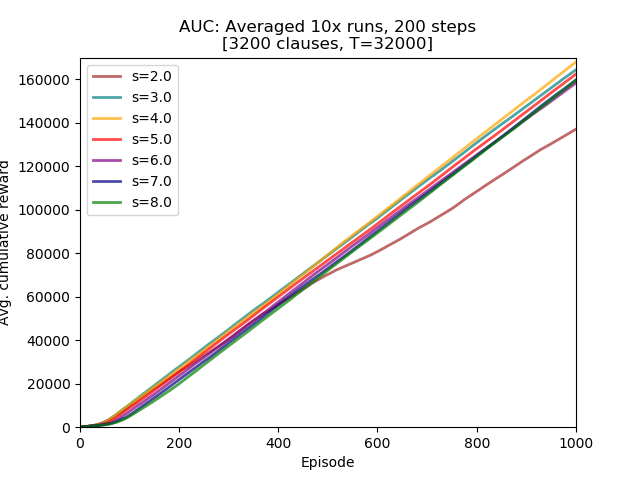

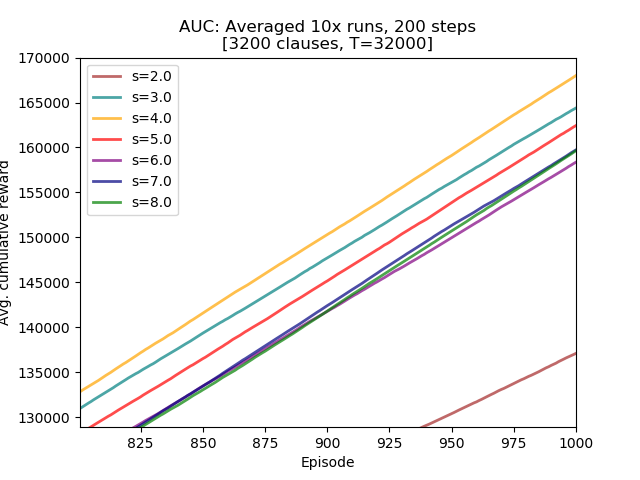

Seven specificity values were examined: 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, and 8.0.

Conclusions:

- the smallest specificity (s=2.0) exhibited some learning instability,

- the best-performing specificity value is 4.0; the second best is 3.0,

- the dependence of learning performance on s differs from the experiments with T=16000, in which the top-performing s value was 8.0.
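One way to make the "best-performing" ranking explicit is to compare the final value of each cumulative-reward (AUC) curve. The sketch below assumes a DataFrame shaped like `steps_200_s.csv` (one row per episode, one column per s value). The function name is hypothetical, and the numbers are synthetic toy data, not results from this experiment.

```python
import pandas as pd


def rank_by_cumulative_reward(df):
    """Rank columns by total reward over all episodes, best first."""
    # The last row of the cumulative sum is the column total,
    # i.e. the end point of each AUC curve.
    final_auc = df.cumsum(axis=0).iloc[-1]
    return final_auc.sort_values(ascending=False)


# Synthetic toy data (NOT the experiment's results): 4 episodes, 3 settings.
toy = pd.DataFrame({
    "2.0": [10, 20, 15, 30],
    "3.0": [10, 30, 50, 70],
    "4.0": [20, 40, 60, 80],
})

ranking = rank_by_cumulative_reward(toy)
print(ranking)
```

Ranking by the end point of the cumulative curve rewards settings that learn both quickly and to a high level, which is why the AUC plot complements the per-episode plot.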

steps_200_s.py

    #!/usr/bin/python3
    # -*- coding: utf-8 -*-

    import matplotlib.pyplot as plt
    import pandas as pd
    import plac

    # One CSV column per specificity value, mapped to its line colour.
    COLORS = {
        '2.0': 'brown',
        '3.0': 'teal',
        '4.0': 'orange',
        '5.0': 'red',
        '6.0': 'purple',
        '7.0': 'navy',
        '8.0': 'green',
    }


    def main():
        # The CSV has no header row; each row holds one episode.
        df = pd.read_csv('steps_200_s.csv', header=None, names=list(COLORS))

        plt.figure()
        for s, color in COLORS.items():
            # Episodes are numbered from 1 on the x axis.
            plt.plot(df.index + 1, df[s], lw=2, color=color, alpha=0.7, label=f's={s}')

        plt.xlabel("Episode")
        plt.ylabel("Avg. reward")
        plt.xlim(1, 1000)
        plt.ylim(0, 200)
        plt.title("Averaged 10x runs, 200 steps\n[3200 clauses, T=32000]")
        plt.legend(loc='lower right')
        plt.show()

        return 0


    if __name__ == '__main__':
        plac.call(main)

steps_200_s_AUC.py

    #!/usr/bin/python3
    # -*- coding: utf-8 -*-

    import matplotlib.pyplot as plt
    import pandas as pd
    import plac

    # One CSV column per specificity value, mapped to its line colour.
    COLORS = {
        '2.0': 'brown',
        '3.0': 'teal',
        '4.0': 'orange',
        '5.0': 'red',
        '6.0': 'purple',
        '7.0': 'navy',
        '8.0': 'green',
    }


    def main():
        # The CSV has no header row; each row holds one episode.
        df = pd.read_csv('steps_200_s.csv', header=None, names=list(COLORS))

        # Running total of the averaged reward over episodes (the "AUC" curve).
        df = df.cumsum(axis=0)

        plt.figure()
        for s, color in COLORS.items():
            # Episodes are numbered from 1 on the x axis.
            plt.plot(df.index + 1, df[s], lw=2, color=color, alpha=0.7, label=f's={s}')

        plt.xlabel("Episode")
        plt.ylabel("Avg. cumulative reward")
        plt.xlim(1, 1000)
        plt.ylim(0, 170000)
        plt.title("AUC: Averaged 10x runs, 200 steps\n[3200 clauses, T=32000]")
        plt.legend(loc='upper left')
        plt.show()

        return 0


    if __name__ == '__main__':
        plac.call(main)

Location: /experiments/2020-09-05_step200_s

Logs were created by running the /experiments/run.sh script (invocation parameters are hardcoded inside). The logs were then transformed into a CSV file of averaged runs by executing /experiments/run_csv.py:

../run_csv.py steps_200_s.csv run_test10.log run_test9.log run_test7.log run_test2.log run_test3.log run_test4.log run_test6.log

1374ed2fd4541a8ce76a1daced462c01f166c55a
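The averaging step that produces a header-less CSV with one column per s value can be sketched as follows. This is not the actual `run_csv.py`: the log format is not described on this page, so the sketch assumes the per-episode rewards have already been parsed into arrays of shape (runs, episodes), one array per setting. The function name and the toy numbers are hypothetical.

```python
import numpy as np
import pandas as pd


def average_runs(runs_per_setting):
    """Average repeated runs for each setting.

    runs_per_setting: one array-like per setting, each of shape
    (n_runs, n_episodes). Returns a DataFrame of shape
    (n_episodes, n_settings), one column per setting.
    """
    columns = [
        np.asarray(runs, dtype=float).mean(axis=0)  # mean over runs
        for runs in runs_per_setting
    ]
    return pd.DataFrame(np.column_stack(columns))


# Synthetic toy input (NOT real log data): 2 settings, 2 runs, 3 episodes.
toy = [
    [[0, 10, 20], [10, 20, 30]],
    [[100, 100, 100], [200, 200, 200]],
]

averaged = average_runs(toy)
# No header and no index, matching how the plotting scripts read the file
# with header=None.
csv_text = averaged.to_csv(header=False, index=False)
print(csv_text)
```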