Home - SteveJustin1963/Telescope-Tec1 GitHub Wiki

Reflector telescopes, also known as reflecting telescopes (the Newtonian being the classic design), are a type of optical telescope that uses mirrors to gather and focus light. They have a fascinating history, work on a different principle from refractor telescopes, and are often paired with the Dobsonian mount, which makes them more accessible for amateur astronomers.

History of the Reflector Telescope: The reflector telescope was invented by Sir Isaac Newton in 1668. Newton was trying to address some of the limitations of the refracting telescopes of his time, which used lenses to bend and focus light. These refractors suffered from chromatic aberration, which resulted in colored fringes around objects and reduced image clarity.

Newton's innovation was to replace the lens at the front of the telescope with a curved mirror, known as the primary mirror. This mirror reflected light to a focus point, where a smaller, flat mirror, known as the secondary mirror, redirected the light to an eyepiece or camera. This design eliminated chromatic aberration, offering superior image quality compared to refractors.

How a Reflector Telescope Works: A reflector telescope works on the principle of reflection rather than refraction. Here's a step-by-step explanation of how it functions:

- Primary Mirror: Light from a distant object enters the telescope and strikes the primary mirror, which is typically a concave, parabolic mirror. This mirror collects and reflects the incoming light toward a focus point.

- Secondary Mirror: The secondary mirror sits in the converging light cone, just ahead of the primary mirror's focus point. It is flat and positioned at an angle to reflect the focused light out of the side of the telescope.

- Eyepiece or Camera: The focused light then travels to an eyepiece or camera at the side of the telescope tube. The eyepiece magnifies the image formed by the primary and secondary mirrors, allowing the observer to view the object.

- Adjustable Components: Reflector telescopes often have adjustable components, such as the primary mirror's tilt or the secondary mirror's position, to fine-tune the focus and collimation (alignment of optical elements) for optimal image quality.

The Dobsonian Telescope: The Dobsonian telescope, or "Dob" for short, is not a type of telescope but rather a type of mounting system for reflector telescopes. It was popularized by amateur astronomer John Dobson in the 1960s. The Dobsonian mount is known for its simplicity and stability, making it an excellent choice for amateur astronomers.

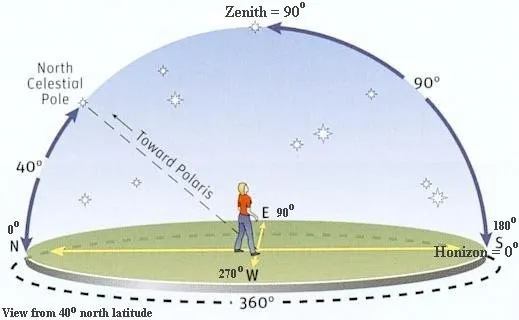

When it comes to tracking a star, equatorial mounts are generally better than altazimuth mounts for several reasons:

- Equatorial Mount: Once polar aligned, an equatorial mount can track a star across the sky by moving along one axis (right ascension), which directly corresponds to the rotation of the Earth. This makes tracking very smooth and efficient, especially for long exposures in astrophotography.

- Altazimuth Mount: An altazimuth mount tracks objects by moving in two axes (altitude and azimuth), which does not correspond to the Earth's rotation. As a result, both axes must be constantly adjusted to track a star, which can be more complex and less smooth.

- Equatorial Mount: It eliminates field rotation, a phenomenon where stars appear to rotate around the center of the field of view over time. This makes equatorial mounts ideal for long-exposure astrophotography, where maintaining precise alignment is crucial.

- Altazimuth Mount: Field rotation can be a significant issue with altazimuth mounts during long exposures. Specialized software or hardware like a field derotator is often required to compensate for this.

- Equatorial Mount: Polar alignment might seem complex initially, but once done correctly, it simplifies tracking immensely. Modern mounts often have built-in polar alignment tools that make this process easier.

- Altazimuth Mount: There's no need for polar alignment, which can make setup quicker for casual observation, but this comes at the cost of more complex tracking.

- Equatorial Mount: Generally preferred by serious astronomers and astrophotographers due to its precision and capability for long-term tracking.

- Altazimuth Mount: More user-friendly and often cheaper, making it a good choice for beginners or casual observers, but less suitable for advanced tracking or photography.

In summary, an equatorial mount is better for tracking a star because it allows for more accurate and smoother tracking with minimal adjustments, particularly beneficial for long-exposure astrophotography.
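To give a feel for how much field rotation an altazimuth mount produces, here is a minimal sketch using a commonly quoted approximation: rotation rate = sidereal rate × cos(latitude) × cos(azimuth) / cos(altitude). The function name, the azimuth convention (measured from north) and the example site are assumptions for illustration, not something taken from this wiki.

```python
import math

# Earth's rotation rate relative to the stars, in degrees per hour.
SIDEREAL_RATE_DEG_PER_HOUR = 15.041

def field_rotation_rate(latitude_deg, azimuth_deg, altitude_deg):
    """Approximate field rotation rate (degrees/hour) seen on an alt-az mount.

    Assumes azimuth is measured from north. The sign only indicates the
    direction of rotation. The value grows without bound near the zenith.
    """
    lat = math.radians(latitude_deg)
    az = math.radians(azimuth_deg)
    alt = math.radians(altitude_deg)
    return SIDEREAL_RATE_DEG_PER_HOUR * math.cos(lat) * math.cos(az) / math.cos(alt)

# Hypothetical example: observer at latitude -34 degrees, target due north, 60 degrees up.
rate = field_rotation_rate(-34.0, 0.0, 60.0)
print(f"Field rotation: {rate:.1f} degrees per hour")
```

The rate drops to zero for targets due east or west and climbs steeply near the zenith, which is why long alt-az exposures need a derotator or short sub-exposures.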

In the context of optical systems like telescopes and cameras, the term "speed" refers to the ability of a lens or mirror to gather light. Specifically, it indicates how quickly a lens or mirror can collect enough light to form a sufficiently exposed image. The F-number, or F-ratio, is crucial in determining this characteristic.

- F/number (Focal Ratio): The F/number is calculated as the ratio of the focal length of the lens or mirror to its aperture diameter. For example, with a 200mm (8 inches) aperture and a 1000mm (one meter) focal length, the F/number is 5 (F5). This is calculated by dividing the focal length by the aperture diameter (1000mm/200mm = 5).

- Faster Light Gathering: A lower F/number means a larger aperture relative to the focal length. This larger aperture allows more light to enter the system at once, thus "faster" in terms of light gathering. For example, an F5 system is faster than an F10 system because it can gather the same amount of light in less time.

- Brighter Images: Since more light can be gathered quickly, the resulting images can be brighter, which is especially beneficial in low-light conditions such as astronomical observations or indoor photography without flash.

- Shallower Depth of Field: In photography, a lower F/number also results in a shallower depth of field, which means that less of the area in front of and behind the focus point will appear in focus. This effect is used creatively to focus attention on a subject while blurring the background and foreground.

In telescopes, a "fast" mirror (like an F5) is particularly advantageous for deep-sky observing and astrophotography. It allows the observer to capture images of faint celestial objects more quickly than a "slow" mirror (like an F10), as it collects light more efficiently. This is beneficial when observing nebulae, galaxies, and other distant objects that require significant light to detect and photograph effectively.

In summary, the "speed" of an optical system is fundamentally about how efficiently and quickly it can gather light, influenced directly by its F/number. A lower F/number indicates a faster system capable of producing brighter images in shorter amounts of time.
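The focal ratio arithmetic above can be sketched in a few lines. The exposure comparison assumes the usual rule that, for extended objects such as nebulae, the required exposure time scales with the square of the F/number; the function names are illustrative.

```python
def focal_ratio(focal_length_mm, aperture_mm):
    """F/number = focal length divided by aperture diameter."""
    return focal_length_mm / aperture_mm

def relative_exposure_time(f_slow, f_fast):
    """How many times longer the slower system must expose to match the
    faster one on an extended object (assumes time scales with F/number squared)."""
    return (f_slow / f_fast) ** 2

f_number = focal_ratio(1000, 200)
print(f"200mm aperture, 1000mm focal length: F{f_number:g}")
print(f"F10 needs {relative_exposure_time(10, 5):g}x the exposure of F5")
```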

Key features of Dobsonian telescopes:

- Altitude-Azimuth Mount: Dobsonian telescopes use a simple alt-azimuth mount that allows for easy manual tracking of celestial objects. This makes them user-friendly, especially for beginners.

- Sturdy Base: Dobsonian mounts have a stable and low-profile base, which makes them easy to set up and move around. They are often made from materials like wood or metal.

- Large Aperture: Many Dobsonian telescopes feature large primary mirrors, providing excellent light-gathering capabilities, which make them suitable for deep-sky observations.

In summary, reflector telescopes have a rich history dating back to Sir Isaac Newton and work by using mirrors to gather and focus light. The Dobsonian mount is a popular choice for amateur astronomers, offering ease of use and affordability, particularly for those interested in deep-sky observations.

The magnification of a telescope is a measure of how much an object is enlarged by the telescope. A higher magnification allows you to see more detail in an object, but it also makes the image dimmer and reduces the field of view.

The magnification of a telescope is determined by the focal length of the telescope and the focal length of the eyepiece. It is calculated using the formula:

Magnification = (Telescope focal length) / (Eyepiece focal length)

For example, if you have a telescope with a focal length of 1000mm and an eyepiece with a focal length of 25mm, the magnification would be 40x (1000 / 25 = 40).

To see detail in an object, you will need a magnification that is high enough to resolve the details of the object. The amount of magnification you need will depend on the size and distance of the object, as well as the quality of the telescope and eyepiece. In general, a magnification of 50-100x is sufficient to see detail in most objects, but some objects may require higher magnifications. It is important to note that using too much magnification can actually make the image worse, as the image can become distorted and blurry due to atmospheric conditions and the limitations of the telescope and eyepiece.
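As a small illustration of the formula, the sketch below tabulates magnification for a few eyepieces on a 1000mm focal length telescope. The eyepiece set and the 200mm aperture are assumed values, and the exit pupil (aperture divided by magnification) is an extra quantity not discussed above, included only to show why higher magnification gives a dimmer image.

```python
def magnification(telescope_fl_mm, eyepiece_fl_mm):
    """Magnification = telescope focal length / eyepiece focal length."""
    return telescope_fl_mm / eyepiece_fl_mm

def exit_pupil_mm(aperture_mm, mag):
    """Diameter of the light beam leaving the eyepiece; smaller means dimmer."""
    return aperture_mm / mag

TELESCOPE_FL_MM = 1000   # from the example above
APERTURE_MM = 200        # assumed aperture for illustration

for eyepiece_fl in (25, 10, 5):  # hypothetical eyepiece set
    mag = magnification(TELESCOPE_FL_MM, eyepiece_fl)
    pupil = exit_pupil_mm(APERTURE_MM, mag)
    print(f"{eyepiece_fl} mm eyepiece: {mag:.0f}x, exit pupil {pupil:.1f} mm")
```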

Astigmatism is a type of optical aberration in which the image of a point object is not focused to a single point but instead appears as a line or elongated shape. It is most commonly caused by an asymmetry in the curvature of a lens or mirror, which brings light to focus differently in different directions. Astigmatism is often present in combination with other types of aberrations, such as spherical aberration and chromatic aberration. It can be reduced with corrective optical elements (lenses or mirrors) that compensate for the asymmetry of the primary lens or mirror, and its effects can sometimes be partly mitigated in software during image processing. Astigmatism can be detected with a star test, in which a point source of light, such as a star, is viewed through the optics and the shape of its image is examined.

Astigmatism also affects reflector telescopes, which use mirrors instead of lenses to gather and focus light. In a reflector telescope, astigmatism is caused by the mirror being deformed (for example, pinched in its cell) or not having an accurate parabolic shape, so that light is focused differently in different directions. This results in an elongated or distorted image. Misalignment of a mirror in the telescope's optical train can have the same effect. Astigmatism can be reduced in a reflector telescope by adjusting the mirror cell, which holds the mirror in place, so that the mirror is properly aligned and not under stress. It can also be reduced with a corrective optical element that compensates for the asymmetry of the primary mirror, and software can sometimes partly compensate for it during image processing. Astigmatism is not unique to reflectors: refractor telescopes can also have it, but it occurs less frequently in refractors because the lenses are less likely to deform or to be misaligned in the optical train.

- Star test: This is the most common method for testing astigmatism in a reflector telescope. It involves pointing the telescope at a bright star (or an artificial point source) and examining the image slightly inside and slightly outside of focus. If the defocused image is elongated, and the direction of elongation turns by 90 degrees as you pass through focus, it is likely that the telescope has astigmatism.

- Knife-edge test: This test involves moving a thin blade across the converging light near the focus and observing the shadow pattern that appears on the mirror. If the shadows are distorted or asymmetric, the optics may have astigmatism or another figure error.

- Ronchi test: This test uses a Ronchi grating, which consists of a series of fine parallel lines, placed near the focus. The observer looks through the grating at the mirror and examines the bands that appear across it. If the bands are distorted or twisted rather than straight and evenly spaced, the optics are likely to have an error such as astigmatism.

- Foucault test: This is the classic form of the knife-edge test used by mirror makers. A point source of light is placed near the mirror's center of curvature, and a knife-edge cuts the returning beam so that the shadows on the mirror reveal the shape of its surface.

It's worth noting that astigmatism testing is best done in the dark, under good seeing conditions, and with a bright star as the target. Also, it is important to keep in mind that astigmatism is not the only aberration that can cause the image to be distorted, so it's best to check for other types of aberrations as well.

https://www.skyatnightmagazine.com/advice/how-to-star-test-a-telescope/

An eyepiece is a component that is placed at the end of a telescope's focuser and is responsible for magnifying the image formed by the telescope's objective lens or primary mirror. The eyepiece works by increasing the apparent size of the image that the objective lens or mirror forms, which makes the image appear closer and larger.

The magnification of an eyepiece is determined by the ratio of the telescope's focal length to the focal length of the eyepiece.

For example, an eyepiece with a focal length of 10 mm used in a telescope with a focal length of 1000 mm would have a magnification of 100x (1000/10). This means that an object viewed through the telescope would appear 100 times closer than it would with the naked eye.

Field of View (FOV) is a fundamental concept in optical instruments such as telescopes. It describes the extent of the observable sky or scene at any given instance. Depending on the context, FOV is commonly expressed in degrees, arcminutes, or arcseconds. Several factors influence the FOV, including the telescope's focal length, the size of the eyepiece, and the inherent FOV of the eyepiece itself.

- A broader FOV translates to a wider sky or scene observation, whereas a narrower FOV offers a detailed, albeit restricted, view.

- For a given eyepiece, telescopes with a shorter focal length (such as small refractors) generally give a more extensive FOV. In contrast, telescopes with a longer focal length give a more constricted FOV.

- The eyepiece plays a significant role in determining FOV. Eyepieces with a more expansive FOV result in broader views, while those with a tighter FOV lead to limited views.

- For astronomy enthusiasts, FOV indicates the portion of the sky observable at once. For photographers, it dictates the expanse of the scene that can be captured.

Eyepieces are pivotal in establishing FOV and other optical properties. There are multiple eyepiece designs available, such as Plössl, Kellner, and Orthoscopic. Each design has its strengths and weaknesses, especially concerning sharpness, distortion, and FOV.

Some eyepieces come with adjustable eye relief. Eye relief is the distance between the eyepiece and the viewer's eye at which the full FOV is visible. Adjustable eye relief is especially beneficial for those wearing eyeglasses or those who might find it challenging to get close to the eyepiece.

The material from which an eyepiece is made can influence its performance. Generally, eyepieces crafted from high-quality glass deliver superior visual clarity and longevity compared to their plastic counterparts, though they might be pricier.

Observing the vastness of the night sky with a telescope is a breathtaking experience, and one of the factors that significantly impacts what you can see is the True Field of View (TFoV). TFoV tells you the portion of the sky or any distant scene that's visible at a given moment when you look through the telescope using a specific eyepiece. Typically expressed in degrees, a larger TFoV lets you observe a more expansive segment of the sky.

The Apparent Field of View (AFoV) is an intrinsic characteristic of the eyepiece, representing the perceived "width" of the view when peering through just that eyepiece. But to truly discern the section of the sky you'll be observing when combining that eyepiece with a particular telescope, you need to work out the TFoV.

To find TFoV:

$$ \text{TFoV (in degrees)} = \frac{\text{AFoV (in degrees)}}{\text{Magnification}} $$

And for magnification, which determines how much larger a celestial object appears compared to its naked-eye observation, you use:

$$ \text{Magnification} = \frac{\text{Focal length of the telescope}}{\text{Focal length of the eyepiece}} $$

Let's utilize a reflector telescope with specifications of 200mm aperture and f/5 focal ratio, which translates to a focal length of 1000mm. Pairing this with an eyepiece that has a focal length of 25mm and an AFoV of 50 degrees, we get:

- Calculating the magnification:

$$ \text{Magnification} = \frac{1000\,\text{mm}}{25\,\text{mm}} = 40\times $$

Objects will appear 40 times larger through this telescope than they would when observed with the naked eye.

- Determining the TFoV:

$$ \text{TFoV} = \frac{50 \text{ degrees}}{40} = 1.25 \text{ degrees} $$

So, when you use this eyepiece with your reflector telescope, you'll capture a section of the sky that's 1.25 degrees in width. To put this into perspective, the full moon spans roughly half a degree. This means you'd be gazing upon an area approximately two and a half times wider than the full moon.
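The same calculation can be written as a short sketch, which makes it easy to try other eyepieces. The function name is illustrative, and the half-degree Moon diameter is the rough figure used above.

```python
FULL_MOON_DEG = 0.5  # approximate angular diameter of the full moon

def true_field_of_view(afov_deg, telescope_fl_mm, eyepiece_fl_mm):
    """TFoV = AFoV / magnification, with magnification = telescope FL / eyepiece FL."""
    mag = telescope_fl_mm / eyepiece_fl_mm
    return afov_deg / mag

tfov = true_field_of_view(afov_deg=50, telescope_fl_mm=1000, eyepiece_fl_mm=25)
print(f"True field of view: {tfov:.2f} degrees")
print(f"About {tfov / FULL_MOON_DEG:.1f} full-moon widths")
```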

In wrapping up, grasping the concept of TFoV is instrumental in setting expectations for what can be seen through a specific telescope and eyepiece combination. It's a key metric for anyone passionate about stargazing, be it a hobbyist or a seasoned astronomer.

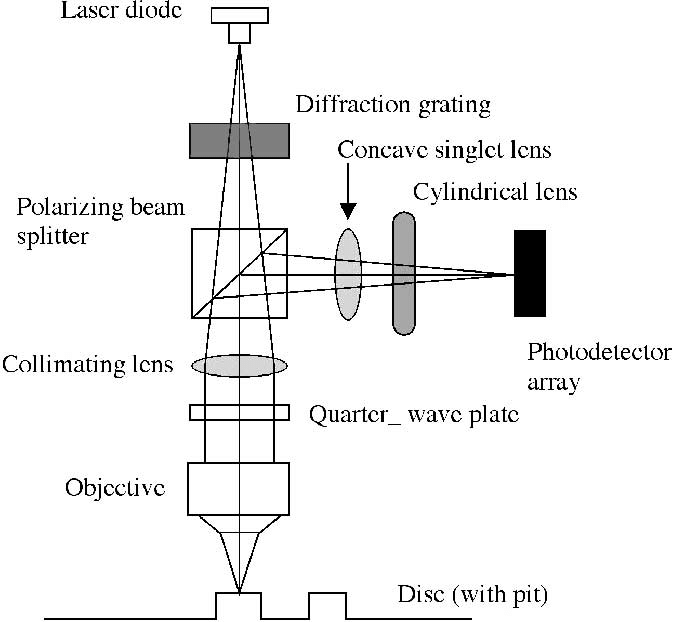

The lens in a CD-ROM (Compact Disc Read-Only Memory) drive has several important characteristics that are designed to optimise the performance of the drive. Some of these characteristics include:

- Numerical Aperture (NA): The numerical aperture of a lens is a measure of its ability to gather light and focus it onto the disc. A higher numerical aperture means that the lens can gather more light and produce a brighter, more focused image of the disc.

- Focal Length: The focal length of a lens is the distance between the lens and the point where the light is focused. A shorter focal length allows the lens to have a wider field of view, while a longer focal length produces a narrower field of view but can magnify the image more.

- Wavefront Distortion: The lens in a CD-ROM drive must be designed to minimize wavefront distortion, which is the deviation of the shape of a wavefront from a perfect spherical shape. Wavefront distortion can cause the light to be focused incorrectly, resulting in a blurry or distorted image of the disc.

- Spherical Aberration: Spherical aberration is an optical effect that occurs when light passing through a lens is not brought to a perfect focus. This effect can cause a loss of sharpness in the image and make it harder for the drive to read the disc correctly.

- Astigmatism: Astigmatism is another optical effect that occurs when the lens is not able to focus all of the light on a single point. This effect can cause distortion of the image, making it harder for the drive to read the disc correctly.

- Chromatic Aberration: Chromatic Aberration is an effect caused by the lens failing to focus all the different colours of light at the same point. This can cause a colour fringe around the object.

These characteristics are essential to the proper function of a CD-ROM drive. A lens with a high numerical aperture, a short focal length, and minimal wavefront distortion, spherical aberration, astigmatism and chromatic aberration will produce a clear, sharp image of the disc that can be read accurately by the drive.

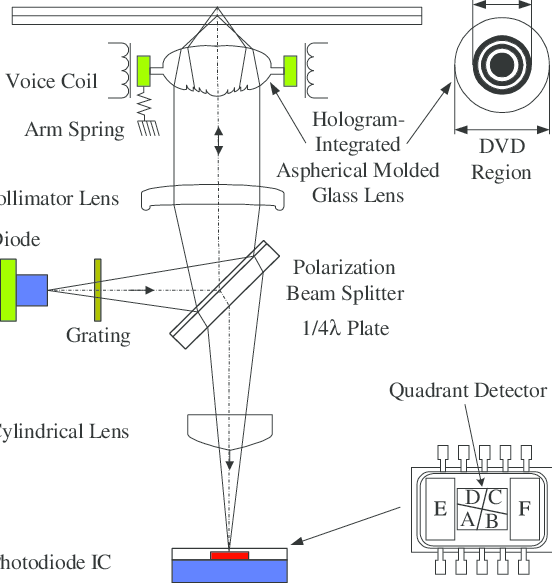

The focal length of the lens in a CD-ROM drive is typically in the range of 6 mm to 15 mm. This is a relatively short focal length, which keeps the optical pickup compact. The lens focuses the laser onto the disc and follows the data track as the disc spins, so the whole surface can be read quickly. The objective is usually a small aspheric lens, a design that is compact and light enough to be moved rapidly for focusing and tracking.

The numerical aperture of the lens used in a CD-ROM drive is generally around 0.45, which allows it to gather enough light to produce a bright, focused image of the disc. Characteristics such as focal length and numerical aperture may vary with the exact design of the drive and the production batch, but in general the values stated are within the expected ranges for a CD-ROM drive lens.

The focal length of the lens in a DVD drive is similar to that of a CD-ROM drive, typically ranging from 6 mm to 15 mm. Like CD-ROM drives, DVD drives need to read the disc quickly and accurately. Some drives use a technology called multi-beam optics, in which multiple closely spaced beams scan the disc so that the drive can read several data tracks at once. This requires a more complex and expensive lens assembly than the one used in ordinary CD-ROM drives.

The numerical aperture of the lens used in a DVD drive is typically around 0.6 to 0.7, which is a bit higher than that of a CD-ROM drive. This allows it to gather more light and focus it more tightly on the disc. This is important because DVDs hold much more data in smaller pits than CDs, and those pits have to be read accurately.

DVD drives, like CD-ROM drives, use a laser diode to read the disc data, but at a different wavelength: 655 nm in DVD drives compared with 780 nm in CD-ROM drives. This difference also affects the design of the lens used in DVD drives.
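To see why a higher numerical aperture and a shorter wavelength matter, here is a rough sketch that estimates the diameter of the focused laser spot as the Airy disc, 1.22 × wavelength / NA. The wavelengths and NA values are the ones quoted above (using 0.6 for DVD); treating the spot as an ideal Airy disc is an assumption, and real drives differ in detail.

```python
def airy_spot_diameter_nm(wavelength_nm, numerical_aperture):
    """Diameter of the Airy disc (to the first dark ring) for an ideal lens."""
    return 1.22 * wavelength_nm / numerical_aperture

cd_spot = airy_spot_diameter_nm(780, 0.45)   # CD-ROM values from the text
dvd_spot = airy_spot_diameter_nm(655, 0.6)   # DVD values from the text

print(f"CD spot:  {cd_spot / 1000:.2f} micrometers")
print(f"DVD spot: {dvd_spot / 1000:.2f} micrometers")
print(f"DVD spot is {dvd_spot / cd_spot:.0%} of the CD spot diameter")
```

The smaller DVD spot is what allows the drive to resolve the smaller pits mentioned above.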

How to Choose an Eyepiece from https://www.bintel.com.au/how-to-choose-an-eyepiece/

Always remember that your eyepiece is half of your telescope – there’s no point buying a Ferrari and then not putting the wheels on! Choosing an eyepiece can be a daunting task, especially if you’re not familiar with how telescope optics work. We sell a range of high-quality eyepieces, with universal sizes to fit in any telescope, regardless of brand. It’s always worth having a variety of focal lengths to provide you with a range of magnification options for your night sky observing, but working out what to get can be a frustrating exercise, and involves mathematics! Please call our full-time staff in Sydney any time for a discussion if you’re having trouble, or simply have a read of our helpful hints below!

1: Focal length, and some simple maths! All eyepieces have a number associated with them – the focal length. The smaller this number, the higher magnification the eyepiece will provide, and vice-versa. You can work out the exact magnification if you know your telescope focal length (look at the specifications of the model you own). For example, a Celestron Nexstar 4SE has a focal length of 1325mm. With a 10mm eyepiece, this telescope will magnify the target 1325/10 = 132.5x! With a 25mm eyepiece, it will magnify 1325/25 = 53x.

2: Don’t go too high! While you can put any eyepiece in any telescope provided the barrel sizing is correct, you should make sure not to zoom too much. Putting an eyepiece providing 400x magnification into a small refractor will give a blurry and fuzzy image, simply because you are stretching the light too far. As a general rule, you should never magnify more than double the aperture of your telescope in millimeters. For example, the lens diameter of a Celestron Nexstar 4SE is 4 inches (~100mm). You should thus never use an eyepiece in a Nexstar 4SE which powers it over 200x. Similarly, if you own a Cosmos 70 AZ (70mm lens diameter), you should not exceed 140x.

3: Make sure to get the right barrel size! Most eyepieces are associated with either 1.25″ or 2″. This refers to the diameter of the eyepiece slot at the end of your telescope. Almost all beginner telescopes use 1.25″ eyepieces, though some SCT and reflector telescopes (particularly higher end models which are convertible for photography) will fit to both sizes. If in doubt, give us a call! If your scope is over 40 years old it could be other sizes.

4: Don’t forget barlows! Rather than buying six different eyepieces to give you six different powers, one option is to invest in a good Barlow lens. Put simply, a Barlow used in conjunction with an eyepiece will increase the power by a set amount. A 2x Barlow will double the power of any eyepiece (eg: turning a 20mm into a 10mm with higher magnification), while a 3x Barlow will triple the power, etc. This means that you can get six different magnifications with only three different eyepieces – very convenient!

5: Quality is important! Eyepieces vary greatly in price, from simple $49 eyepieces, to $1000 Tele Vue branded eyepieces. Naturally, a standard 10mm eyepiece will not have as high light transmission or clarity as a 10mm Tele Vue eyepiece for triple the price, but to get the most out of expensive eyepieces, you need to be putting them in a good telescope! With high light transmission eyepieces, fainter deep space objects like nebulas and galaxies will suddenly be within reach for many observers who previously were not able to resolve detail on these fascinating targets. You can usually spend up to the cost of your telescope on a matching eyepiece (eg: if your telescope is worth $400, you could buy a $400 eyepiece to put in, which would give excellent results). Exceeding this limit would probably be overkill in most cases – if you’re particularly keen, maybe think about investing in a newer, bigger telescope!
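Hints 1, 2 and 4 combine naturally into a small calculation. The sketch below lists the magnifications available from a set of eyepieces with and without a Barlow, and flags any that exceed the "double the aperture in millimeters" rule. The function names and the eyepiece set are illustrative; the telescope figures are the Nexstar 4SE values quoted above.

```python
def max_useful_magnification(aperture_mm):
    """Rule of thumb from the text: no more than 2x the aperture in millimeters."""
    return 2 * aperture_mm

def magnification_options(telescope_fl_mm, aperture_mm, eyepieces_mm, barlow_factor=2):
    """Map (eyepiece focal length, Barlow factor) to (magnification, within_limit)."""
    limit = max_useful_magnification(aperture_mm)
    options = {}
    for eyepiece_fl in eyepieces_mm:
        for factor in (1, barlow_factor):
            mag = telescope_fl_mm * factor / eyepiece_fl
            options[(eyepiece_fl, factor)] = (mag, mag <= limit)
    return options

# Nexstar 4SE figures from the text: 1325mm focal length, roughly 100mm aperture.
for (eyepiece_fl, factor), (mag, ok) in magnification_options(1325, 100, [25, 10]).items():
    label = f"{eyepiece_fl} mm" + (f" + {factor}x Barlow" if factor != 1 else "")
    note = "" if ok else " (exceeds the 2x-aperture rule)"
    print(f"{label}: {mag:.1f}x{note}")
```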

Also (https://www.bintel.com.au/product-category/eyepieces-and-barlows/)

wiki: Dawes' limit is a formula to express the maximum resolving power of a microscope or telescope. It is named after its discoverer, William Rutter Dawes, although it is also credited to Lord Rayleigh.

The formula takes different forms depending on the units.

- R = 4.56/D, with D in inches and R in arcseconds

- R = 11.6/D, with D in centimeters and R in arcseconds

where D is the diameter of the main lens (aperture) and R is the resolving power of the instrument.

When observing the Moon, reflected sunlight consisting of multiple wavelengths must be taken into account. A convenient way to calculate angular resolution is Dawes' limit, named after amateur astronomer W.R. Dawes. The limit is based on the smallest separation at which two point sources can be distinguished from one another, and it depends on the aperture of the telescope. Dawes' limit for a telescope with an aperture of D in millimeters is 116/D in arcseconds. For example:

- a 100mm telescope has a Dawes' limit of approximately 1.16 arcseconds, meaning the smallest detail that can be resolved is around 1.16 arcseconds in size.

- a 200mm F5 reflector has a Dawes' limit of approximately 0.58 arcseconds. This is the smallest angular separation at which two point sources can be distinguished.
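As a quick check of the arithmetic, here is a minimal sketch of Dawes' limit using the form R = 11.6/D with D in centimeters given earlier, which is equivalent to 116/D with D in millimeters. The function name is illustrative.

```python
def dawes_limit_arcsec(aperture_mm):
    """Dawes' limit in arcseconds: R = 116 / D, with D in millimeters
    (the same as R = 11.6 / D with D in centimeters)."""
    return 116.0 / aperture_mm

for aperture in (100, 200):
    print(f"{aperture}mm aperture: {dawes_limit_arcsec(aperture):.2f} arcseconds")
```

Doubling the aperture halves the limit, so a 200mm telescope can in principle split double stars half as far apart as a 100mm telescope can.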

"H-alpha rich" refers to astronomical objects that emit strong light in the hydrogen-alpha (H-alpha) spectral line. This line corresponds to the transition of an electron from the third energy level to the second energy level of a hydrogen atom, and is usually observed at a wavelength of 656.3 nanometers in the red part of the visible spectrum.

In astronomy, H-alpha emission is often used as a diagnostic of various physical processes occurring in astronomical objects, such as star formation, ionized gas emission, and activity in galactic nuclei. Hence, objects that are considered "H-alpha rich" are typically those that emit a significant amount of light in this line, and are often the focus of study in fields such as astrophysics and cosmology.
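The 656.3 nm figure can be reproduced from the Rydberg formula for hydrogen, 1/λ = R_H (1/n₁² − 1/n₂²), with n₁ = 2 and n₂ = 3. The sketch below is illustrative: the Rydberg constant for hydrogen is a standard value, while the refractive index of air is an approximate assumed figure used to convert the vacuum wavelength to the value measured in air.

```python
RYDBERG_HYDROGEN_PER_M = 1.0967758e7  # Rydberg constant for hydrogen, in 1/m
N_AIR_APPROX = 1.000277               # assumed refractive index of air for red light

def hydrogen_line_vacuum_nm(n_lower, n_upper):
    """Vacuum wavelength (nm) of the hydrogen line for a transition n_upper -> n_lower."""
    inverse_wavelength = RYDBERG_HYDROGEN_PER_M * (1 / n_lower**2 - 1 / n_upper**2)
    return 1e9 / inverse_wavelength

h_alpha_vacuum = hydrogen_line_vacuum_nm(2, 3)
h_alpha_air = h_alpha_vacuum / N_AIR_APPROX

print(f"H-alpha in vacuum: {h_alpha_vacuum:.2f} nm")
print(f"H-alpha in air:    {h_alpha_air:.2f} nm")
```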

To photograph H-alpha rich targets with a DSLR camera, you would need to add a specialized filter that blocks out most of the visible light and only allows the H-alpha light to pass through to the camera's sensor. This type of filter is called an H-alpha filter, and it is specifically designed to enhance the camera's ability to capture images of astronomical objects that emit strong H-alpha light.

There are several ways to add an H-alpha filter to your DSLR camera:

- Clip-in filter: This is a filter that clips into the camera body, behind the lens mount and in front of the sensor. The advantage of this type of filter is that it's quick and easy to install, and you can remove it just as easily when you're done with your observations.

- Internal filter: This type of filter is installed permanently inside the camera body, between the lens and the sensor. The advantage of an internal filter is that it's always in place, so you don't have to worry about installing or removing it.

- Dedicated astro-modified camera: Some camera manufacturers offer specialized astro-modified versions of their cameras, in which the internal filter passes much more H-alpha light than the standard one. These cameras are specifically designed for astrophotography and offer excellent performance when it comes to capturing images of H-alpha rich targets.

Please note that modifying your camera in any way may void its warranty, and it's important to understand the limitations and potential risks associated with this type of modification before proceeding. Additionally, it's also important to consider other factors, such as the quality of your lens and the stability of your mount, that can affect the quality of your astrophotography.

Removing the IR filter from a DSLR camera can also improve its performance with H-alpha rich targets. The IR filter is typically installed in front of the camera's sensor to block out IR light, which can cause unwanted artifacts in images taken with the camera. However, this filter also blocks out some of the light in the H-alpha wavelength range, which can reduce the camera's sensitivity to H-alpha light.

By removing the IR filter, the camera's sensitivity to H-alpha light can be increased, allowing it to capture more detailed images of H-alpha rich targets. This type of modification is often referred to as "astro-modification" or "IR-modification," and it is a popular choice among amateur and professional astrophotographers.

However, it's important to note that removing the IR filter from your camera can also result in increased sensitivity to IR light, which can cause unwanted artifacts in your images, such as color shifts and halos around bright objects. Additionally, this type of modification is not recommended for amateur photographers as it requires a high level of technical skill and knowledge, and can result in permanent damage to the camera if not done correctly.

If you're interested in this type of modification, I would recommend seeking the help of a professional camera technician or a specialized astro-modification service. These professionals have the necessary tools and expertise to safely remove the IR filter and modify your camera for optimal performance with H-alpha rich targets.

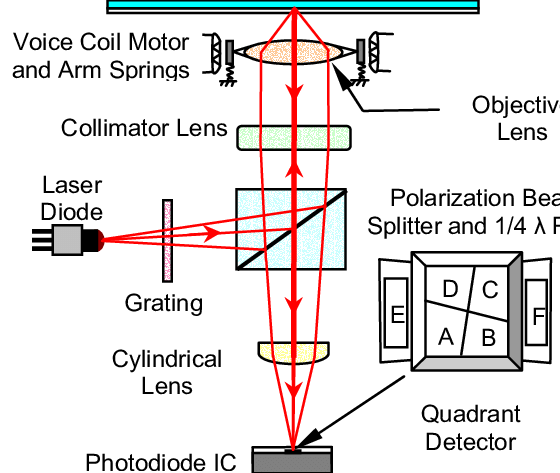

A photodiode array, also known as a photodetector array or optical pick-up, is a device used in CD-ROM drives to read the data stored on a compact disc. The array typically consists of a laser diode, a collimator lens, and a photodiode array. The laser diode emits a focused beam of light onto the surface of the disc. The collimator lens is used to ensure that the light beam is parallel and has a consistent width as it travels across the disc. The photodiode array is located behind the collimator lens and is responsible for detecting the reflected light from the disc.

The photodiode array is typically made up of several individual photodiodes, each with its own lens and detector. These individual photodiodes are arranged in a line or pattern and work together to detect the light reflected from the disc. As the disc spins, the beam of light scans across the disc, and the photodiode array detects the variations in the reflected light caused by the data stored on the disc. The data is then sent to the drive's electronics, where it is decoded and sent to the computer.

The specifications of a CD-ROM photodiode array can vary depending on the manufacturer and the type of CD-ROM drive it is used in. Some common specifications include:

- Number of photodiodes: The array typically has several individual photodiodes, with a higher number allowing for a more precise reading of the data on the disc.

- Sensitivity: This is a measure of how well the array can detect light. A higher sensitivity means that the array is able to detect weaker reflections from the disc, which is important for reading data on lower quality discs.

- Wavelength range: This is the range of wavelengths that the array can detect. For CD-ROM drives, the laser operates in the near infrared (around 780nm).

- Spectral response: This describes how the sensitivity of the array varies with wavelength and is typically given as a graph.

- Size of the array: This depends on the size of the lens used in the CD-ROM drive. Some arrays are bigger than others in order to cover a larger area of the disc.

Other specifications that may be provided by the manufacturer include the dark current, noise, and linearity of the array. These can affect the performance of the array and the overall quality of the data read from the disc.

It is possible that a photodiode array used in a CD-ROM drive could be repurposed for photon measurements in astronomy, but it would likely not be the optimal solution. Photodiode arrays used in CD-ROM drives are designed to read data stored on compact discs, which requires a different set of specifications and characteristics than those needed for photon measurements in astronomy.

For example, astronomical measurements are made in low light, where high sensitivity and low noise are the important specifications. The photodiodes in a CD-ROM drive, however, are designed to read a comparatively strong reflected laser signal at a single wavelength. Their wavelength range and spectral response may also be poorly matched to the range of wavelengths typically encountered in astronomical measurements.

In summary, a photodiode array used in a CD-ROM drive would not be an ideal solution for photon measurements in astronomy, although with the proper modifications and calibration it is not impossible to repurpose one. It would be advisable to use a dedicated device that is specifically designed for photon measurements.

There are several alternatives to a photodiode array that can be used for astronomical measurements, depending on the specific requirements of the application. Some examples include:

- CCD (charge-coupled device): A CCD is a type of image sensor that can be used to capture images of stars and galaxies. They are widely used in astronomical telescopes and can be sensitive to a wide range of wavelengths, from the ultraviolet to the infrared.

- CMOS (complementary metal-oxide-semiconductor): CMOS sensors are similar to CCDs, but they are typically less expensive, consume less power, and can operate at higher speeds, which makes them suitable for high-speed imaging. Low-noise CMOS sensors are also used in low-light conditions.

- Photomultiplier tubes (PMT): PMTs are used to detect very weak light signals, and are commonly used in low-light-level applications like in spectroscopy, timing measurements, and scintillation counting. They are very sensitive to light and can detect single photons.

- APD (Avalanche Photodiodes): APDs are similar to photodiodes, but they detect weak light signals by internally amplifying the electrical current generated by the incoming photons. They are also used for low-light-level applications like spectroscopy, timing measurements, and scintillation counting.

- ICCD (intensified charge-coupled device): An ICCD is a type of image sensor that uses a device called an intensifier to amplify the weak light signals that are captured by the CCD. This makes them well-suited for low-light-level applications, and for capturing fast-moving objects.

- EMCCD (electron multiplying CCD): An EMCCD serves a similar purpose to an ICCD, but instead of an external intensifier it amplifies the signal on the chip, in an electron-multiplying gain register, before readout. This gives very high sensitivity with low effective read noise, making EMCCDs ideal for very low-light-level applications.

- sCMOS (Scientific complementary metal-oxide-semiconductor): A scientific CMOS sensor is a more recent sensor technology designed to provide high resolution, low noise, and a high frame rate in a single package, which makes it well suited for scientific applications like astronomy.

- SPAD (Single Photon Avalanche Diode): SPADs are similar to APDs, but they are operated so that a single photon triggers a detectable pulse. Their single-photon sensitivity and low noise make them well suited for applications like time-of-flight imaging, LIDAR, and spectroscopy.

- PIN photodiode: for example, the Osram BPW34.

Each sensor has its own characteristics, capabilities, and limitations, so the choice depends on the specific requirements of the application, such as the wavelength range and sensitivity needed, the speed of data acquisition, and the budget available.

CMOS sensors would likely be the easiest to experiment with and use with a low power computer. They are relatively inexpensive, easy to interface with, and consume less power than CCDs or ICCDs. They can also operate at high speeds and can be easily integrated into a variety of devices, which makes them well suited for low power, portable, or embedded systems. Another reason CMOS sensors could be the easiest to work with is their combination of low noise and high resolution, which provides good image quality even at low light levels. The software required to operate the sensor can be relatively simple and can run on a low power computer. The best option still depends on the specific requirements of the application, such as the wavelength range and sensitivity needed, the speed of data acquisition, and the budget.

OV9726:

- S/N ratio: 37 dB

- Dynamic range: 69 dB

- Sensitivity: 1300 mV/(lux-sec)

OV2640:

- S/N ratio: 40 dB

- Dynamic range: 50 dB

- Sensitivity: 0.6 V/(lux-sec)

The most important feature for astronomy is sensitivity, as this determines how faint a light level the sensor can detect. In this comparison, the OV9726 has the higher sensitivity, 1300 mV/(lux-sec), compared with 0.6 V/(lux-sec), or 600 mV/(lux-sec), for the OV2640. The OV9726 would therefore be the better choice for star gazing, although the OV2640 has a slightly better S/N ratio (40 dB against 37 dB).

ISO is a measure of how strongly a digital camera amplifies the signal from its sensor, and is commonly described as the sensor's sensitivity to light. A higher ISO value produces a brighter image from the same amount of light, but it also amplifies noise, so it is a tradeoff when taking photos in low-light conditions. In astronomy, CMOS sensors are often used in astrophotography, where long exposures are necessary to capture faint astronomical objects. Increasing the ISO can reduce the exposure time needed to capture a good image, but it can also introduce more noise. It is therefore important to find the right balance between exposure time and ISO, and every camera increases noise in a different way: some ramp it up slowly, while others make a big jump after a particular setting.

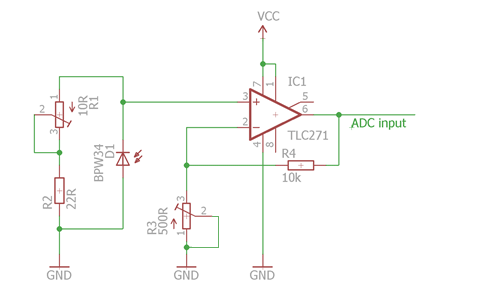

The BPW34 is a type of photodiode that can be used for astronomical experiments. Based on the provided specifications, it has several characteristics that make it suitable for astronomical optical experiments:

- High breakdown voltage (V(BR)): With a minimum breakdown voltage of 60V, the BPW34 can be operated under reverse bias with a wide safety margin.

- Low reverse dark current: The reverse dark current is the current that flows through the photodiode when it is not exposed to light. With a typical reverse dark current of 2nA, the BPW34 has a low dark current, which means that it adds little noise to the measurement.

- Sensitivity: The figure of 0.6V/Lux-sec quoted for the BPW34 is the same as the OV2640 image sensor value above and is not a photodiode unit. Photodiode sensitivity is normally given as a responsivity in A/W or as a photocurrent at a stated irradiance, so this value should be checked against the BPW34 datasheet.

- Wide wavelength range: The BPW34 responds to wavelengths from 430 to 1100nm, covering most of the visible spectrum and the near infrared, which makes it suitable for measuring objects that emit light in different wavelength ranges.

- Low noise equivalent power: The BPW34 has a low noise equivalent power of 4 x 10^-14 W/√Hz, which means that it is able to detect very weak light signals with a low noise level.

On the other hand, it also has a couple of limitations. It is a single-element detector, so it measures the total light falling on it and cannot capture an image of the sky. Its angle of half sensitivity of ±65 degrees is wide rather than narrow, which means that it accepts light from a large cone and stray light must be kept out; behind a telescope, the field of view is set by the optics rather than by the photodiode. The photocurrent from a star is also very small, so a low-noise amplifier is needed.

Overall, the BPW34 appears to be a suitable choice for astronomical experiments, as it has a high breakdown voltage, low reverse dark current, low noise equivalent power, and a wide wavelength range. However, the lack of imaging capability, the wide acceptance angle, and the small signal levels are important factors that should be considered.

experiment

- Choose a distant star that is known to be variable, such as:

  - Algol, https://en.wikipedia.org/wiki/Algol

  - Mira, https://en.wikipedia.org/wiki/Mira

  - Epsilon Aurigae, https://en.wikipedia.org/wiki/Epsilon_Aurigae

- Mount the BPW34 photodiode on a telescope, and aim it at the chosen star.

- Use a filter that limits the light to the wavelength range of 430 to 1100nm, to match the BPW34 sensitivity range.

- Use a data acquisition system to record the electrical current flowing through the photodiode as a function of time.

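- Take data for a period of several months, at regular intervals. The interval must be much shorter than the star's period: once a week can follow Mira, whose period is about 11 months, but Algol's eclipses repeat every 2.87 days and need repeated readings through a single night.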

- Analyze the data to determine the variability of the light emitted by the star. Look for patterns in the data that indicate regular changes in brightness, such as periodic variability.

- Compare the results to previous studies of the same star to see if there are any differences in the variability.

- Repeat the experiment using other filters to study the variability of the star's light at different wavelengths.

This experiment allows you to study the variability of the light emitted by a distant star, which can provide insights into the physical processes taking place within the star. Additionally, it allows you to test the ability of the BPW34 photodiode to detect weak light signals over a wide wavelength range and compare the results with other studies.

experiment

- Choose a double star system that is known to be variable, such as

- Mount the BPW34 photodiode on a telescope, and aim it at the chosen double star system.

- Use a filter that limits the light to the wavelength range of 430 to 1100nm, to match the BPW34 sensitivity range.

- Use a data acquisition system to record the electrical current flowing through the photodiode as a function of time.

- Take data for a period of several months, at regular intervals (for example, once a week).

- Analyze the data to determine the variability of the light emitted by the double star system. Look for patterns in the data that indicate regular changes in brightness, such as periodic variability.

- Compare the data for each star individually, in order to understand the contribution of each star to the variability.

- Repeat the experiment using different filters to study the variability of the double star system at different wavelengths.

- Compare the results of this experiment with previous studies of the same double star system.

This experiment allows you to study the variability of light emitted by a double star system and to gain insight into the physical processes taking place within the stars. It also tests the ability of the BPW34 photodiode to detect weak light signals over a wide wavelength range. Comparing the results with other studies, with other instruments such as a CCD camera, and even with naked-eye estimates gives a better understanding of the performance of the sensor and of the other factors that influence the final results.

There are a few different ways to make the code accurate to, say, 0.1 degrees, depending on the specifics of the application and the data that the code is working with. Here are a few options:

- Increase the resolution of the lookup table: The code uses a lookup table to map ADC values to angle. One way to increase the accuracy of the code is to increase the resolution of the lookup table by adding more entries. This will allow the code to more accurately interpolate between values in the table.

- Use a more accurate interpolation method: The code uses linear interpolation to estimate the angle from the ADC value. Using a more accurate interpolation method such as a polynomial or spline interpolation can improve the accuracy of the code.

- Use more decimal places: The code currently uses a single decimal place for the final angle reading. Reporting more decimal places increases the precision of the output, although it cannot make the reading more accurate than the underlying data.

- Use a circuit with higher accuracy: The accuracy of the final angle reading is limited by the accuracy of the sensor. Using a more sensitive angle pulse circuit with higher accuracy will improve the overall accuracy of the result.

This code uses the same basic structure as the previous example, but with different values in the lookup table and a different variable name. Here, the ADC value is used to interpolate an angle between 0 and 18 degrees, and the result is rounded to 0.1 degrees. Readings outside the table are clamped to the end values. Please note that the angle_lookup table values are placeholders and should be replaced with actual data.

interpol_1.c

```c
#include <math.h>

float interpolate_angle(int adc_val)
{
    // Lookup table of ADC values (must be strictly increasing)
    static const int Lookup[19] = {0, 1, 2, 3, 4, 5, 6, 7, 8, 9, 10, 11, 12, 13, 14, 15, 16, 17, 18};

    // Angle values corresponding to the above ADC values (placeholder data)
    static const float angle_lookup[19] = {0.0, 1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0, 9.0, 10.0, 11.0, 12.0, 13.0, 14.0, 15.0, 16.0, 17.0, 18.0};

    // Clamp readings that fall outside the table
    if (adc_val <= Lookup[0])
        return angle_lookup[0];
    if (adc_val >= Lookup[18])
        return angle_lookup[18];

    for (int i = 0; i < 18; i++)
    {
        if (adc_val <= Lookup[i + 1])
        {
            // Linear interpolation within the segment [i, i + 1]
            float t = (float)(adc_val - Lookup[i]) / (float)(Lookup[i + 1] - Lookup[i]);
            float angle = angle_lookup[i] + t * (angle_lookup[i + 1] - angle_lookup[i]);

            // Round to 0.1 degree
            return roundf(angle * 10.0f) / 10.0f;
        }
    }

    return angle_lookup[18]; // not reached
}
```

Here is an example of how the code could be modified to interpolate an angle between 80 and 130 degrees, using 8-bit unsigned integers for the lookup tables and the result. The range of the angle changes from 0-18 to 80-130, and the lookup tables are updated to match. Because the result is now a whole number of degrees, the resolution is 1 degree rather than 0.1 degree.

Keep in mind that 8-bit integers are limited to the range 0-255, so this approach only works while every table entry and every result fits in that range. ADC readings outside the table are clamped to the first or last angle in the table.

interpol_2.c

```c
#include <stdint.h>

uint8_t interpolate_angle(uint8_t adc_val)
{
    // Lookup table of ADC values (must be strictly increasing)
    static const uint8_t lookup_adc[51] = {0, 1, 2, 3, 4, 5, 6, 7, 8, 9, 10, 11, 12, 13, 14, 15, 16, 17, 18, 19, 20, 21, 22, 23, 24, 25, 26, 27, 28, 29, 30, 31, 32, 33, 34, 35, 36, 37, 38, 39, 40, 41, 42, 43, 44, 45, 46, 47, 48, 49, 50};

    // Angle values in whole degrees corresponding to the above ADC values
    static const uint8_t lookup_angle[51] = {80, 81, 82, 83, 84, 85, 86, 87, 88, 89, 90, 91, 92, 93, 94, 95, 96, 97, 98, 99, 100, 101, 102, 103, 104, 105, 106, 107, 108, 109, 110, 111, 112, 113, 114, 115, 116, 117, 118, 119, 120, 121, 122, 123, 124, 125, 126, 127, 128, 129, 130};

    // Clamp readings that fall outside the table
    if (adc_val <= lookup_adc[0])
        return lookup_angle[0];
    if (adc_val >= lookup_adc[50])
        return lookup_angle[50];

    for (int i = 0; i < 50; i++)
    {
        if (adc_val <= lookup_adc[i + 1])
        {
            // Linear interpolation, rounded to the nearest whole degree
            float t = (float)(adc_val - lookup_adc[i]) / (float)(lookup_adc[i + 1] - lookup_adc[i]);
            float angle = lookup_angle[i] + t * (float)(lookup_angle[i + 1] - lookup_angle[i]);
            return (uint8_t)(angle + 0.5f);
        }
    }

    return lookup_angle[50]; // not reached
}
```

Polynomial interpolation is a method of estimating the value of a function at a certain point, given a set of data points that the function passes through. The basic idea is to fit a polynomial of degree n through n + 1 data points, so that the polynomial passes through all of them. The polynomial can then be used to estimate the value of the function at any point within the range of the data points. For example, if we have a set of data points (x0, y0), (x1, y1), ..., (xn, yn), we can fit a polynomial of degree n through these data points. The polynomial can be represented by the equation:

p(x) = a0 + a1*x + a2*x^2 + ... + an*x^n

where a0, a1, ..., an are the coefficients of the polynomial that need to be determined.

There are different methods of finding the coefficients of the polynomial, such as:

- Newton's Divided Differences: A recursive formula gives the coefficients of the polynomial in Newton form.

- Least Squares: The sum of the squared differences between the data and the polynomial p(x) is minimized. Strictly speaking this is curve fitting rather than interpolation, because a low-degree least-squares polynomial does not generally pass through every data point.

Once the coefficients are found, the polynomial can be used to estimate the value of the function at any point within the range of the data points. One advantage of polynomial interpolation is that the polynomial can also be evaluated outside the range of the data points, although the accuracy of the estimate is reduced there.

One major disadvantage of polynomial interpolation is Runge's phenomenon, where a polynomial that fits the data well at the center produces large oscillations near the boundaries. It is generally more pronounced when the degree of the polynomial is high.

Polynomial interpolation is therefore a powerful method that can be applied to both equally and unequally spaced data points, and it gives accurate results if the function is well represented by a polynomial of low degree. However, it is sensitive to the distribution of the data points and can oscillate strongly near the boundaries.

To code this, use Newton's Divided Differences method for polynomial interpolation. The function takes an ADC value as input and holds the data points in the x and y arrays. A pair of nested loops first computes the divided-difference coefficients, and a final loop evaluates the Newton form of the polynomial at the input ADC value. You will need to replace the x and y arrays with your own data points. The Lagrange form is a different way of writing the same interpolating polynomial, so both give the same result for the same data. Note that a polynomial through all 19 points has degree 18, which invites Runge's phenomenon with real data; using only a few neighbouring points keeps the degree low.

interpol_poly_new_1.c

```c
float interpolate_angle(float adc_val)
{
    // Data points (placeholders; replace with measured values)
    static const float x[] = {0, 1, 2, 3, 4, 5, 6, 7, 8, 9, 10, 11, 12, 13, 14, 15, 16, 17, 18};
    static const float y[] = {80, 81, 82, 83, 84, 85, 86, 87, 88, 89, 90, 91, 92, 93, 94, 95, 96, 97, 98};
    enum { N = sizeof(x) / sizeof(x[0]) };

    // Start from the y values; after the loops below a[i] holds f[x0, ..., xi]
    float a[N];
    for (int i = 0; i < N; i++)
        a[i] = y[i];

    // Divided differences, updated in place from the end of the array so that
    // the lower-order entries are still available when they are needed
    for (int j = 1; j < N; j++)
        for (int i = N - 1; i >= j; i--)
            a[i] = (a[i] - a[i - 1]) / (x[i] - x[i - j]);

    // Evaluate the Newton form: a0 + a1 (v - x0) + a2 (v - x0)(v - x1) + ...
    float result = a[0];
    float term = 1;
    for (int i = 0; i < N - 1; i++) {
        term = term * (adc_val - x[i]);
        result = result + a[i + 1] * term;
    }

    return result;
}
```

Spline interpolation is a method for estimating the value of a function at a certain point, given a set of data points that the function passes through. The basic idea is to fit a smooth piecewise polynomial function through the data points, such that the function is continuous and, for a cubic spline, its first and second derivatives are also continuous at the points where the polynomials are joined together.

In contrast to polynomial interpolation, where a single polynomial function is fit through all the data points, spline interpolation uses multiple polynomial functions, each fitting a subset of the data points. The polynomials are joined together at the data points, creating a smooth curve that passes through all the data points.

There are several types of spline interpolation, such as:

- Linear spline interpolation : In this method, a straight line is used to interpolate between each pair of data points. It is simple to use but the interpolated function does not have any smoothness property.

- Quadratic spline interpolation: Here, Quadratic polynomials are used to interpolate between data points. The interpolated function has a continuous first derivative, but not a continuous second derivative.

- Cubic spline interpolation: Cubic polynomials are used to interpolate between the data points. The interpolated function has a continuous first and second derivative, providing a smooth curve that passes through all the data points.

- Natural spline: This is a cubic spline whose second derivative is set to zero at the two endpoints. This boundary condition fixes the coefficients that the data points alone leave undetermined.

Cubic spline interpolation is the most widely used method. It is common in computer graphics, numerical analysis, and other fields where a smooth interpolation is desired. One major advantage of spline interpolation is that it provides a smooth curve that passes through all the data points, so it preserves the local features of the data.

The main disadvantage of spline interpolation is that it takes more work to set up than linear interpolation, since a system of equations must be solved for the polynomial coefficients. For a cubic spline this system is tridiagonal, so the cost grows only linearly with the number of data points.

The listing below implements natural cubic spline interpolation. The function takes an ADC value as input and uses it to estimate the angle, with the data points held in the x and y arrays. It first finds the widths of the intervals between consecutive data points and builds the right-hand side of the spline equations from these widths and the y values. It then solves for the second-derivative coefficients at the data points using the tridiagonal matrix algorithm. Finally, it uses these coefficients to interpolate the angle at the input ADC value. You will need to replace the x and y arrays with your own data points. Note that, despite the .c file name, the listing uses std::vector and must be compiled as C++. The coefficients do not depend on the ADC value, so in a real application they could be computed once and reused.

interpol_nat_cubic_spline_1.c

```cpp
#include <vector>

// Natural cubic spline through the points (x[i], y[i]), evaluated at adc_val.
float interpolate_angle(float adc_val)
{
    // Data points (placeholders; x must be strictly increasing)
    const std::vector<float> x = {0, 1, 2, 3, 4, 5, 6, 7, 8, 9, 10, 11, 12, 13, 14, 15, 16, 17, 18};
    const std::vector<float> y = {80, 81, 82, 83, 84, 85, 86, 87, 88, 89, 90, 91, 92, 93, 94, 95, 96, 97, 98};

    const int n = static_cast<int>(x.size());
    std::vector<float> h(n), alpha(n), l(n), u(n), z(n), b(n), c(n), d(n);

    // Width of each interval
    for (int i = 0; i < n - 1; i++)
        h[i] = x[i + 1] - x[i];

    // Right-hand side of the tridiagonal system
    for (int i = 1; i < n - 1; i++)
        alpha[i] = 3 * (y[i + 1] - y[i]) / h[i] - 3 * (y[i] - y[i - 1]) / h[i - 1];

    // Forward sweep; z[0] = 0 imposes the natural condition at the first point
    l[0] = 1;
    u[0] = 0;
    z[0] = 0;
    for (int i = 1; i < n - 1; i++) {
        l[i] = 2 * (x[i + 1] - x[i - 1]) - h[i - 1] * u[i - 1];
        u[i] = h[i] / l[i];
        z[i] = (alpha[i] - h[i - 1] * z[i - 1]) / l[i];
    }

    // Back substitution; c[n - 1] = 0 imposes the natural condition at the last point
    l[n - 1] = 1;
    c[n - 1] = 0;
    for (int i = n - 2; i >= 0; i--) {
        c[i] = z[i] - u[i] * c[i + 1];
        b[i] = (y[i + 1] - y[i]) / h[i] - h[i] * (c[i + 1] + 2 * c[i]) / 3;
        d[i] = (c[i + 1] - c[i]) / (3 * h[i]);
    }

    // Find the segment containing adc_val; readings outside the table
    // fall back to the nearest end segment
    int k = n - 2;
    for (int i = 0; i < n - 1; i++) {
        if (adc_val <= x[i + 1]) {
            k = i;
            break;
        }
    }

    // Evaluate the cubic for segment k
    const float dx = adc_val - x[k];
    return y[k] + dx * (b[k] + dx * (c[k] + dx * d[k]));
}
```

An arcsecond is a unit of angular measurement that is equal to 1/60 of an arcminute and 1/3600 of a degree. In other words, there are 3600 arcseconds in one degree.

Arcseconds are commonly used in astronomy to express small angles or angular separations. For example, the angular diameter of the Moon as seen from the Earth is approximately 0.5 degrees, or 30 arcminutes, or 1800 arcseconds. The resolution of a telescope or other optical instrument is often expressed in arcseconds, with smaller numbers indicating better resolution.

One way to think about arcseconds is as a measure of the angle subtended by a small object or feature at a given distance. For example, if you are viewing a distant object through a telescope, the size of the object in arcseconds depends on its physical size and its distance from the observer, not on the telescope used.
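The unit relationships above can be written as a few one-line helpers. This is only a sketch; the function names are illustrative.

```c
/* 1 degree = 60 arcminutes = 3600 arcseconds */
double degrees_to_arcmin(double deg)    { return deg * 60.0; }
double degrees_to_arcsec(double deg)    { return deg * 3600.0; }
double arcsec_to_degrees(double arcsec) { return arcsec / 3600.0; }
```

For the Moon, `degrees_to_arcmin(0.5)` gives 30 and `degrees_to_arcsec(0.5)` gives 1800, matching the figures above.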

To count with 1 arcsecond resolution and a +/- 0.5 arcsecond error, the encoder must produce at least one pulse per arcsecond of telescope rotation, which is 3600 pulses per degree. The required gear ratio depends on the number of pulses per revolution produced by the encoder.

To calculate the required gear ratio, you can use the following formula:

gear ratio = (required pulses per degree) / (encoder pulses per revolution / 360 degrees)

For example, if the encoder produces 600 pulses per revolution, it gives 600 / 360 = 1.67 pulses per degree on its own shaft, so you will need a gear ratio of at least:

gear ratio = (3600 pulses/degree) / (600 pulses/revolution / 360 degrees) = 2160

This means that the encoder must be geared to turn at least 2160 times for each revolution of the telescope axis in order to achieve the desired resolution.

Keep in mind that this is a minimum requirement, and you may need to use a higher gear ratio or implement additional measures (such as calibration or error correction) in order to achieve the desired accuracy in your specific application.
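The gear ratio can also be worked out from first principles: one revolution of the telescope axis is 360 x 3600 = 1,296,000 arcseconds, and the encoder must deliver one count for each step of the desired resolution. The sketch below assumes one count per encoder pulse and an ideal gear train; the function name is hypothetical.

```c
/* Number of encoder turns per turn of the telescope axis needed to get
   one count per `resolution_arcsec` of axis rotation.
   Assumption: one count per encoder pulse (no quadrature decoding). */
double required_gear_ratio(double pulses_per_rev, double resolution_arcsec)
{
    const double arcsec_per_rev = 360.0 * 3600.0;  /* 1,296,000 */
    double counts_needed = arcsec_per_rev / resolution_arcsec;
    return counts_needed / pulses_per_rev;
}
```

If the encoder is read in quadrature (four counts per pulse), the ratio returned here can be divided by four.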

The angular diameter of the Sun as seen from the Earth is approximately 0.53 degrees, or about 32 arcminutes, or roughly 1,900 arcseconds.

Keep in mind that the angular diameter of the Sun varies slightly because the Earth's orbit around the Sun is elliptical. When the Earth is closest to the Sun (called perihelion), the Sun's angular diameter is slightly larger than when the Earth is farthest away (called aphelion). However, the difference in angular diameter is relatively small and is not noticeable to the naked eye.

It is important to use caution when observing the Sun, as looking directly at the Sun can cause serious eye damage. It is recommended to use special solar filters or indirect viewing methods when observing the Sun.

The angular diameter of Mars as seen from the Earth varies significantly, mainly because the distance between the two planets changes as they orbit the Sun. At its closest approach to the Earth, which happens at an opposition near Mars' perihelion, Mars' angular diameter can be as large as 25.1 arcseconds. At its farthest, when Mars is on the far side of the Sun, its angular diameter can be as small as 3.5 arcseconds.

The average angular diameter of Mars as seen from the Earth is approximately 15 arcseconds. However, this value can vary depending on the specific location of Mars in its orbit and the position of the Earth relative to Mars.

It is important to note that the angular diameter of Mars as seen from the Earth is much smaller than the angular diameter of the Sun. This means that Mars appears much smaller in the sky and needs a telescope to show any detail. The best time to observe Mars is around opposition, when it is closest to the Earth, which occurs approximately once every 26 months. At this time, Mars appears larger and brighter in the sky and is easier to see with a telescope or other optical instrument.

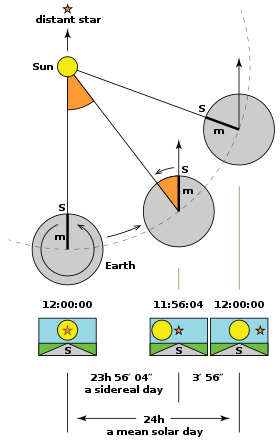

The speed of Earth's rotation, or the angular velocity of the Earth, is approximately 0.00417 degrees per second. This is equivalent to approximately 15 arcseconds per second. The rotational speed is not perfectly constant and varies slightly due to factors such as the gravitational pull of the Moon and the Sun and the distribution of mass within the Earth. However, these variations are far too small to be noticeable. The Earth's rotational speed determines the length of a day, which is approximately 24 hours, or 86400 seconds. Dividing 360 degrees by 86400 seconds gives the rate of about 15 arcseconds per second, which is the rate at which a star near the celestial equator drifts across the field of an undriven telescope.

The Rayleigh limit is the smallest angular separation that can be resolved by a telescope or other imaging system. It is determined by the wavelength of light being used and the diameter of the telescope's aperture. The formula for the Rayleigh limit is:

θ = 1.22 * λ / D

where θ is the angular resolution in radians, λ is the wavelength of light, and D is the diameter of the telescope's aperture, in the same units as λ.

The smaller the value of θ, the greater the resolving power of the telescope. However, the Rayleigh limit is often not the limiting factor in determining a telescope's resolution, as other factors such as atmospheric turbulence and the quality of the optics can also come into play.

For example, the angular resolution (θ) for a telescope with an aperture of 200mm and an f-ratio of f/5 can be calculated using the Rayleigh limit formula. Only the aperture matters here; the f-ratio does not enter the calculation.

For visible light with a wavelength of 550 nanometers:

θ = 1.22 * 550 * 10^-9 m / (200 * 10^-3 m)

θ = 3.36 * 10^-6 radians

So the angular resolution of the telescope is about 3.36 * 10^-6 radians. This is the smallest angle at which two point sources can be distinguished as separate in the telescope.

Please note that this is a theoretical resolution. Real-world resolution is limited by factors such as atmospheric turbulence and the quality of the optics, which can lower the resolution.

To convert radians to arc seconds, you can use the following conversion factor:

1 radian = (180/π) * 3600 arc seconds

So,

3.36 * 10^-6 radians = (3.36 * 10^-6) * (180/π) * 3600 arc seconds = 0.69 arc seconds.

So the angular resolution of the telescope with an aperture of 200mm is 0.69 arc seconds. This means that two point sources can be distinguished as separate if they are separated by an angle of 0.69 arc seconds or greater in the telescope.
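The Rayleigh formula and the radian-to-arcsecond conversion can be combined in one small function. This is a sketch with an illustrative function name; both arguments are assumed to be in metres.

```c
#define ARCSEC_PER_RADIAN 206264.80624709636  /* (180 / pi) * 3600 */

/* Rayleigh limit, theta = 1.22 * lambda / D, returned in arcseconds.
   wavelength_m and aperture_m must both be in metres. */
double rayleigh_limit_arcsec(double wavelength_m, double aperture_m)
{
    double theta_rad = 1.22 * wavelength_m / aperture_m;
    return theta_rad * ARCSEC_PER_RADIAN;
}
```

A call such as `rayleigh_limit_arcsec(550e-9, 0.2)` evaluates the 200mm example at 550 nanometers, and changing the first argument shows how the limit tightens toward the blue end of the spectrum.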

Alpha Centauri is a star system located about 4.37 light-years from Earth. It is visible to the naked eye from southern latitudes, but even through a telescope its stars show no measurable disk, because the angular size of a star depends on its physical diameter and its distance from the Earth. For Alpha Centauri A, the angular diameter used here is 0.007 arcseconds. If you were using an eyepiece with a field of view of 2.46 arcseconds, the disk of Alpha Centauri A would span approximately 0.003 of the field width.

To calculate this, you would use the formula:

Fraction of field = (Angular size of object in arcseconds) / (Field of view in arcseconds)

Plugging in the values for Alpha Centauri A and the eyepiece field of view, you get:

(0.007 arcseconds) / (2.46 arcseconds) = 0.003, or about 0.3% of the field width

This ratio is dimensionless. Converting it to a length in inches or millimeters would require the linear diameter of the eyepiece field, which is not given here. Keep in mind that this is just an estimate: an angle of 0.007 arcseconds is far below the resolution limit of an amateur telescope, so the star appears as a point whose apparent size is set by diffraction and seeing rather than by the star itself.

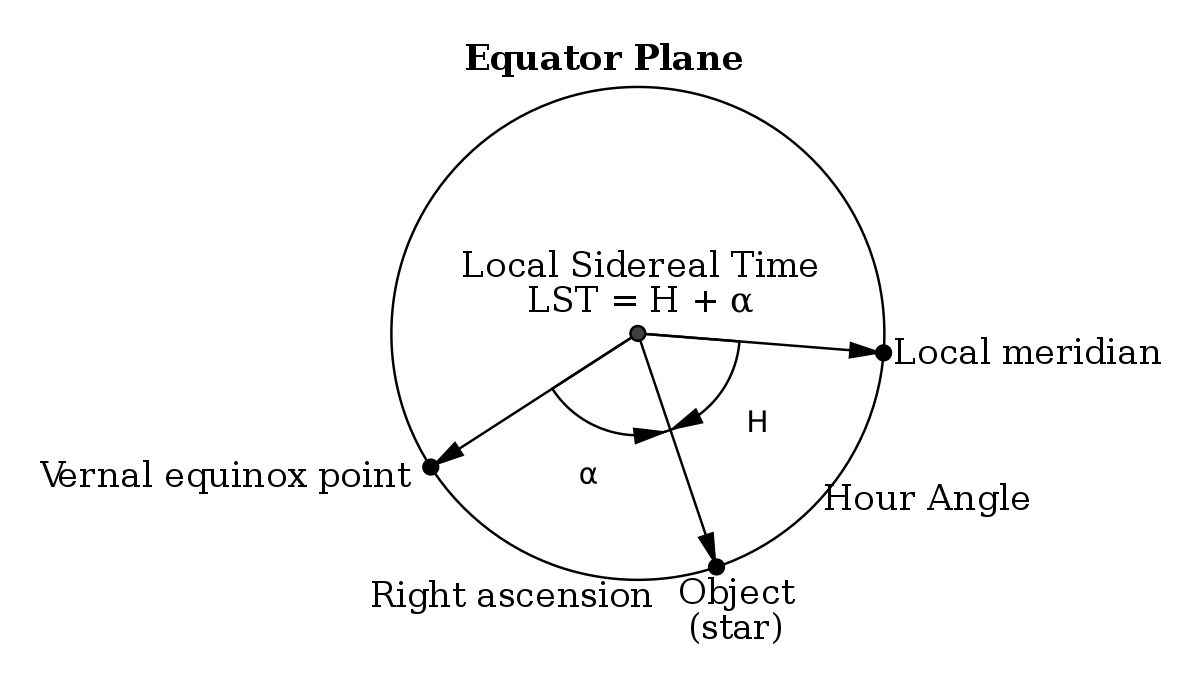

- Sidereal time is based on when the vernal equinox passes the upper meridian. This takes approximately 4 minutes less than a solar day. Sidereal time is useful to astronomers because any object crosses the upper meridian when the local sidereal time is equal to the object's right ascension. (https://lco.global/spacebook/sky/sidereal-time/)

- Because Earth orbits the Sun once a year, the sidereal time at any given place and time will gain about four minutes against local civil time, every 24 hours (https://en.wikipedia.org/wiki/Sidereal_time)

From (https://squarewidget.com/astronomical-calculations-sidereal-time/)

- Gathering necessary data: The website collects user input such as the date, time, and location coordinates (latitude and longitude).

- Calculating Julian date: The website calculates the Julian date based on the inputted date and time.

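- Calculating Julian centuries: The website calculates the Julian centuries from the J2000 epoch, which is 2000 January 1 12:00:00 TT (Julian date 2451545.0): T = (JD - 2451545.0) / 36525.

- Calculating mean sidereal time: The website calculates the mean sidereal time at Greenwich, in degrees, using the formula: 280.46061837 + 360.98564736629 * (JD - 2451545.0) + 0.000387933 * T^2 - T^3 / 38710000, where JD is the Julian date and T is the Julian centuries. The second term is multiplied by the number of days since J2000, not by T, and the result is reduced to the range 0 to 360 degrees.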

- Adjusting mean sidereal time for longitude: The website adjusts the mean sidereal time based on the inputted longitude, since sidereal time varies with longitude.

- Converting mean sidereal time to apparent sidereal time: The website converts the mean sidereal time to apparent sidereal time by adding the equation of the equinoxes.

- Displaying sidereal time: The website displays the resulting sidereal time to the user.
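The steps above can be sketched in Python. This is an illustrative sketch, not the website's code: the function names are made up, UTC is treated as close enough to UT1, longitude is assumed positive east, and the final correction to apparent sidereal time (the equation of the equinoxes, about a second at most) is left out.

```python
from datetime import datetime, timezone

J2000_JD = 2451545.0          # Julian date of the J2000 epoch
UNIX_EPOCH_JD = 2440587.5     # Julian date of 1970-01-01 00:00 UTC

def julian_date(dt_utc: datetime) -> float:
    """Julian date of a timezone-aware datetime."""
    return dt_utc.timestamp() / 86400.0 + UNIX_EPOCH_JD

def gmst_degrees(jd: float) -> float:
    """Greenwich mean sidereal time in degrees, reduced to 0..360."""
    d = jd - J2000_JD          # days since J2000
    t = d / 36525.0            # Julian centuries since J2000
    theta = (280.46061837 + 360.98564736629 * d
             + 0.000387933 * t**2 - t**3 / 38710000.0)
    return theta % 360.0

def local_mean_sidereal_hours(dt_utc: datetime, east_longitude_deg: float) -> float:
    """Local mean sidereal time in hours (0..24); east longitude is positive."""
    lst_deg = (gmst_degrees(julian_date(dt_utc)) + east_longitude_deg) % 360.0
    return lst_deg / 15.0      # 15 degrees of rotation per sidereal hour

# Example: Greenwich (longitude 0) at 1987-04-10 00:00 UTC
when = datetime(1987, 4, 10, tzinfo=timezone.utc)
hours = local_mean_sidereal_hours(when, 0.0)
h = int(hours)
m = int((hours - h) * 60)
s = ((hours - h) * 60 - m) * 60
print(f"LMST = {h:02d}h {m:02d}m {s:04.1f}s")
```

An object is on the upper meridian when the value returned here equals its right ascension, which is what makes the number useful for pointing a telescope.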

Local Sidereal Time (LST) is the measure of the apparent motion of the stars in the sky as observed from a particular location on the Earth's surface. It is defined as the hour angle of the vernal equinox, which is the point in the sky where the Sun, moving north along the ecliptic (the apparent path of the Sun), crosses the celestial equator (the projection of the Earth's equator onto the sky).

LST is used in astronomy and navigation to determine the position of celestial objects in the sky at a particular time and location. It is typically expressed in hours, minutes, and seconds, and is measured from 0 to 24 hours, with 0 hours corresponding to the moment when the vernal equinox crosses the local meridian (the imaginary line passing through the zenith and the north and south poles) and 24 hours corresponding to the next crossing.

To calculate LST for a particular location, you need to know the longitude of the observer's position, as well as the date and time of the observation. A number of online calculators and software programs are available to perform this calculation automatically, using the observer's location and the current time as inputs.

The moon shows fantastically complex detail, but its brightness hurts the eye. I tried attaching a camera body to photograph it, but the focal plane sits lower than the camera can reach, so that is another project.

When viewing or photographing the moon using a telescope, there are specific considerations and setups that help maximize the experience and outcome, especially when using this f/5 reflector telescope. Here's a technical brief on how to look at the moon with such a setup:

- f/5 Reflector Telescope: An f/5 reflector has a shorter focal length for its aperture than telescopes with higher focal ratios, making it more compact and portable. This configuration also offers a wider field of view.

- Mount: A stable mount is needed. The Dobsonian friction mount is easy to move but hard to slew smoothly, and it shakes and vibrates before settling. An equatorial or a robust alt-azimuth mount is crucial to keep the telescope steady during viewing and photography.

- Eyepieces: For visual observations, eyepieces ranging from 10mm to 25mm provide a range of magnifications that are useful for lunar observations.

- Barlow Lens: Optional but useful, a Barlow lens can double or triple the effective magnification of an eyepiece. It also moves the focal plane outward, so a camera fitted in place of the eyepiece can reach focus. Without it, you cannot get the camera to focus on this telescope.

- Camera: For those interested in astrophotography, a DSLR or a dedicated astronomy camera can be used.

- Moon Filter: To reduce the brightness of the moon and increase contrast, a moon filter can be very helpful. Looking without the filter leaves one eye feeling blinded, and the sensation can make you feel ill, so use a filter.

- Alignment: Begin by aligning your telescope with the moon. This can be straightforward as the moon is a bright, easy-to-find target. It does move fast, though: within a few minutes it has drifted out of view.

- Focusing: Use a low magnification eyepiece first to center and focus the moon in your view. Once centered, you can switch to a higher magnification eyepiece or camera setup.

- Tracking: If using an equatorial mount, properly align it to the celestial pole (near Polaris in the Northern Hemisphere) to facilitate smooth tracking of the moon as it moves across the sky. Motorized tracking will need the TEC-1 with motor control.

- Visual Observation: Start with the lowest magnification to enjoy a wide view of the moon. Gradually increase the magnification to examine features such as craters, maria, and rilles in more detail.

- Photography: Attach your camera to the telescope using an appropriate adapter. For DSLRs, use the prime focus method where the camera is attached directly to the telescope, replacing the eyepiece. The focus can be tricky to perfect and might require frequent adjustments as you shoot.

- Exposure Settings: The moon is very bright, so start with a fast shutter speed; 1/250 to 1/500 second at ISO 100 is a good baseline. At prime focus the aperture is fixed by the telescope (f/5 here), so adjust shutter speed and ISO according to the brightness of the moon.

- Focus: Autofocus may struggle with the stark contrast of the moon’s surface. Manual focus is recommended, using live view mode for finer adjustments.

- Image Stability: Use a remote shutter release or the camera's built-in timer to minimize vibrations when taking pictures.

- Overexposure: The moon's surface can be very bright, making it easy to overexpose details. Experiment with different settings and possibly use HDR techniques to balance the exposure.

- Atmospheric Conditions: Turbulence in the Earth’s atmosphere can blur details. Viewing during stable atmospheric conditions, or using adaptive optics if available, can improve image quality.

- Stacking: For astrophotography, taking multiple exposures and using software to stack and align them can significantly enhance the detail and reduce noise.

- Filters: Experiment with different filters to enhance certain lunar surface features, like polarizing filters to manage brightness and contrast.

By following these guidelines and continuously experimenting with different settings, enthusiasts can greatly enhance their lunar viewing and photography experiences using an f/5 reflector telescope.
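The remark that the moon is gone within a few minutes can be estimated from the eyepiece's true field of view and the rate at which the sky drifts through an undriven telescope. The sketch below rests on several assumptions: a 1000 mm focal length (a 200 mm aperture at f/5), a hypothetical eyepiece with a 50° apparent field, and the sidereal drift rate of about 15.04 arc seconds per second at the celestial equator. The moon's own orbital motion changes this slightly and is ignored.

```python
import math

SIDEREAL_RATE_ARCSEC_PER_S = 15.04  # sky drift at the celestial equator

def magnification(f_tel_mm: float, f_ep_mm: float) -> float:
    """M = telescope focal length / eyepiece focal length."""
    return f_tel_mm / f_ep_mm

def true_field_deg(apparent_field_deg: float, mag: float) -> float:
    """Approximate true field of view: apparent field divided by magnification."""
    return apparent_field_deg / mag

def drift_time_s(true_fov_deg: float, declination_deg: float = 0.0) -> float:
    """Seconds for a target to cross the full field with no tracking."""
    rate = SIDEREAL_RATE_ARCSEC_PER_S * math.cos(math.radians(declination_deg))
    return true_fov_deg * 3600.0 / rate

F_TEL_MM = 1000.0          # assumption: 200 mm aperture at f/5
APPARENT_FIELD_DEG = 50.0  # assumption: hypothetical eyepiece design

for f_ep in (25.0, 10.0):
    m = magnification(F_TEL_MM, f_ep)
    fov = true_field_deg(APPARENT_FIELD_DEG, m)
    print(f"{f_ep:.0f} mm eyepiece: {m:.0f}x, field {fov:.2f} deg, "
          f"crossing time {drift_time_s(fov) / 60:.1f} min")
```

Under these assumptions the 25 mm eyepiece gives about five minutes from one edge of the field to the other, and the 10 mm eyepiece about two, which is why higher magnifications make tracking more important.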

First, let's define a few terms:

- Angular Resolution (θ): Angular resolution is the minimum separation between two point sources of light at which they can still be distinguished as separate entities. It is usually measured in radians.

- Focal Length (f): The focal length of an optical system, like a telescope, is the distance between the lens or mirror and the focal point where the image is formed. It's measured in millimeters or meters.

- Aperture Diameter (D): The aperture diameter is the size of the objective lens or primary mirror in the optical system. It's typically measured in millimeters or meters.

The Rayleigh Criterion is a commonly used formula to determine the angular resolution (θ) of an optical system:

θ = 1.22 * (λ / D)

Where:

- θ is the angular resolution in radians.

- λ (lambda) is the wavelength of light being observed (usually quoted in nanometers, but it must be converted to the same unit as D before dividing).

- D is the aperture diameter of the optical system.

Now, let's consider an eyepiece with a particular focal length (f_ep) and the telescope's focal length (f_tel). The magnification (M) of the eyepiece is calculated as:

M = f_tel / f_ep

An eyepiece does not change the telescope's angular resolution, which is set by the aperture. It magnifies the image, so the angle between two just-resolved point sources as presented to the eye (θ_ep) is given by:

θ_ep = θ_unaided * M

Where:

- θ_ep is the apparent angular separation seen through the eyepiece.

- θ_unaided is the angular resolution of the telescope without the eyepiece.

- M is the magnification of the eyepiece.
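As a rough illustration of how magnification and resolution interact, the sketch below treats the eyepiece as enlarging the apparent angle between two just-resolved points by the factor M. The 1000 mm focal length (200 mm aperture at f/5) and the 60 arc second figure for what the eye can separate are assumptions for illustration, not values from this page.

```python
import math

def rayleigh_limit_arcsec(wavelength_m: float, aperture_m: float) -> float:
    """Rayleigh limit of the telescope, converted to arc seconds."""
    theta_rad = 1.22 * wavelength_m / aperture_m
    return math.degrees(theta_rad) * 3600.0

def magnification(f_tel_mm: float, f_ep_mm: float) -> float:
    """M = f_tel / f_ep."""
    return f_tel_mm / f_ep_mm

def apparent_separation_arcsec(theta_arcsec: float, mag: float) -> float:
    """Angle between two just-resolved points as presented to the eye."""
    return theta_arcsec * mag

F_TEL_MM = 1000.0        # assumption: 200 mm aperture at f/5
EYE_LIMIT_ARCSEC = 60.0  # assumption: rough resolving ability of the eye

theta = rayleigh_limit_arcsec(550e-9, 0.200)
for f_ep in (25.0, 10.0):
    m = magnification(F_TEL_MM, f_ep)
    apparent = apparent_separation_arcsec(theta, m)
    verdict = "above" if apparent > EYE_LIMIT_ARCSEC else "below"
    print(f"{f_ep:.0f} mm eyepiece: {m:.0f}x, apparent separation "
          f"{apparent:.1f} arcsec ({verdict} the assumed eye limit)")
```

With these numbers the 25 mm eyepiece leaves diffraction-limited detail too small for the eye to separate, while the 10 mm eyepiece brings it just within reach.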

Now, let's consider the concept of poor sharpness on-axis. When an eyepiece exhibits this characteristic, it means that even though the telescope itself might provide good on-axis sharpness, the eyepiece introduces additional aberrations or errors. These aberrations can affect the overall angular resolution and image quality.

To diagnose poor sharpness on-axis, you would typically compare the observed angular resolution through two identical eyepieces. If one consistently provides a worse on-axis image than the other, it suggests that the eyepiece itself is introducing aberrations or errors that reduce the sharpness.

The exact mathematics to quantify these aberrations would depend on the specific optical design and aberrations present in the eyepiece. Various aberrations like spherical aberration, chromatic aberration, and coma can contribute to poor on-axis sharpness, and their mathematical descriptions can be complex.

In practice, assessing poor sharpness on-axis often involves visual inspection and comparison rather than precise mathematical calculations. Advanced optical testing equipment and techniques may be required for a more detailed analysis of eyepiece performance.

including lateral and fringe chromatic aberrations

- Chromatic Aberration: Chromatic aberration is the phenomenon where different colors of light are focused at different points, causing a color fringe around the image. It occurs due to the dispersion of light, where different wavelengths of light bend by different amounts when passing through a lens or prism. The dispersion of a glass is characterized by its Abbe number, and for a simple thin lens the resulting focal shift can be expressed as:

V = (nd - 1) / (nF - nC)

C = f / V

Where:

- C is the chromatic aberration, taken here as the difference in focal length between red (C-line) and blue (F-line) light.

- f is the focal length of the lens.

- V is the Abbe number of the glass used in the lens.

- nd is the refractive index of the glass for the Fraunhofer d-line (yellow).

- nF is the refractive index of the glass for the Fraunhofer F-line (blue).

- nC is the refractive index of the glass for the Fraunhofer C-line (red).
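To put numbers on this, the sketch below uses the standard definition of the Abbe number, V = (nd - 1) / (nF - nC), and the thin-lens estimate that the focal length differs between the F and C lines by roughly f / V. The refractive indices are approximate catalogue values for a common crown glass (BK7) and the 25 mm focal length is a hypothetical single-element lens; both are assumptions for illustration.

```python
def abbe_number(n_d: float, n_f: float, n_c: float) -> float:
    """Abbe number from the indices at the d (yellow), F (blue) and C (red) lines."""
    return (n_d - 1.0) / (n_f - n_c)

def chromatic_focal_shift_mm(focal_length_mm: float, v: float) -> float:
    """Thin-lens estimate of the focal-length difference between F and C light."""
    return focal_length_mm / v

# Assumed, approximate indices for BK7 crown glass
N_D, N_F, N_C = 1.5168, 1.52238, 1.51432

v = abbe_number(N_D, N_F, N_C)
shift = chromatic_focal_shift_mm(25.0, v)  # hypothetical 25 mm singlet
print(f"Abbe number ~ {v:.1f}, focal shift ~ {shift:.2f} mm")
```

A higher Abbe number means lower dispersion and a smaller focal shift, which is why low-dispersion glass is used where color correction matters.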

- Lateral Chromatic Aberration: Lateral chromatic aberration occurs when different colors of light are displaced laterally (sideways) from each other, causing color fringing at the edges of the field of view. This can be expressed as a lateral displacement in the image plane due to the dispersion of light. To first order, if the effective focal length of the optical system differs by Δf between two colors, an image point at field angle φ is displaced by:

Lateral Displacement (D) = Δf * tan(φ)

Where:

- D is the lateral displacement.

- Δf is the difference in effective focal length between the two colors causing the aberration.

- φ is the angle of the object from the optical axis.

The displacement is zero on-axis and grows toward the edge of the field, which is why the fringing is seen at the edges of the field of view.

- Fringe Chromatic Aberration: Fringe chromatic aberration is a specific type of lateral chromatic aberration that results in color fringes around high-contrast objects. It is caused by the variation in the refractive index of the lens material with different wavelengths of light. The mathematical expression for fringe chromatic aberration can be complex and involves the lens design and material properties.

In eyepieces, efforts are made to minimize chromatic aberration through the use of high-quality glass materials, multi-coatings, and advanced lens designs. However, completely eliminating chromatic aberration, especially at the edge of the field of view, can be challenging and may result in higher costs.

The presence of a tiny ring of aberrant color at the edge of the field of view is a common occurrence in eyepieces and can be due to a combination of factors, including the angle of vision, lens coatings, and the design of the eyepiece. This effect can vary among eyepieces and is often more pronounced in wide-field eyepieces.

and its interaction with telescopes

- Field Curvature: Field curvature is a common optical aberration in which the image is formed on a curved surface rather than a flat plane. This curvature can be either positive or negative, meaning that the focal surface can bulge outward or inward from the center of the image. Field curvature occurs due to the geometry of the optical elements in the system, especially the curvature of lenses.

- Positive Field Curvature: In this case, the field of view curves outward, away from the center of the image. It can be mathematically represented as a positive radius of curvature (Rc). Near the optical axis, the curved focal surface can be expressed as:

z = r^2 / (2 * Rc), with r^2 = x^2 + y^2

Where:

- z is the displacement of the focal surface along the optical axis, relative to a flat image plane.

- r is the distance from the optical axis.

- x and y are the coordinates on the image plane.

- Rc is the radius of curvature.

- Negative Field Curvature: In this case, the field of view curves inward toward the center of the image. It can be represented with a negative radius of curvature. The equation remains the same as above, but with a negative value for Rc, which reverses the sign of z.
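A small sketch can show how far a curved focal surface departs from a flat image plane at a given distance from the optical axis. It compares the near-axis estimate z = r^2 / (2 * Rc) with the exact depth of a sphere of radius Rc. The 1000 mm radius of curvature and the 10 mm off-axis distance are hypothetical values chosen for illustration.

```python
import math

def sag_paraxial_mm(r_mm: float, rc_mm: float) -> float:
    """Near-axis estimate of focal-surface displacement: z = r^2 / (2 * Rc)."""
    return r_mm**2 / (2.0 * rc_mm)

def sag_sphere_mm(r_mm: float, rc_mm: float) -> float:
    """Exact depth of a sphere of radius |Rc| at distance r, signed like Rc."""
    depth = abs(rc_mm) - math.sqrt(rc_mm**2 - r_mm**2)
    return math.copysign(depth, rc_mm)

RC_MM = 1000.0  # assumption: hypothetical radius of curvature
R_MM = 10.0     # assumption: distance from the optical axis

print(f"paraxial: {sag_paraxial_mm(R_MM, RC_MM):.4f} mm")
print(f"sphere:   {sag_sphere_mm(R_MM, RC_MM):.4f} mm")
print(f"negative Rc: {sag_paraxial_mm(R_MM, -RC_MM):.4f} mm")
```

The two estimates agree closely when r is much smaller than Rc, and a negative Rc simply flips the sign of the displacement.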

-

-