[Azure Developer] Getting Activity Logs with the REST API, and data format questions when ingesting into Data Lake

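Question 1: When retrieving Activity Logs through the REST API, how can the returned fields be controlled?

[Answer] According to the REST API reference for listing Activity Logs, the $filter parameter controls the filter conditions and the $select parameter controls which properties are returned.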

GET https://management.chinacloudapi.cn/subscriptions/{subscriptionId}/providers/microsoft.insights/eventtypes/management/values?api-version=2015-04-01&$filter={$filter}&$select={$select}

Note: management.chinacloudapi.cn is the management endpoint for Azure China; management.azure.com is the management endpoint for global Azure.

$filter

Reduces the set of data collected. This argument is required, and it must include at least the start date/time.

The $filter argument is very restricted and allows only the following patterns.

- List events for a resource group: $filter=eventTimestamp ge '2014-07-16T04:36:37.6407898Z' and eventTimestamp le '2014-07-20T04:36:37.6407898Z' and resourceGroupName eq 'resourceGroupName'.

- List events for resource: $filter=eventTimestamp ge '2014-07-16T04:36:37.6407898Z' and eventTimestamp le '2014-07-20T04:36:37.6407898Z' and resourceUri eq 'resourceURI'.

- List events for a subscription in a time range: $filter=eventTimestamp ge '2014-07-16T04:36:37.6407898Z' and eventTimestamp le '2014-07-20T04:36:37.6407898Z'.

- List events for a resource provider: $filter=eventTimestamp ge '2014-07-16T04:36:37.6407898Z' and eventTimestamp le '2014-07-20T04:36:37.6407898Z' and resourceProvider eq 'resourceProviderName'.

- List events for a correlation Id: $filter=eventTimestamp ge '2014-07-16T04:36:37.6407898Z' and eventTimestamp le '2014-07-20T04:36:37.6407898Z' and correlationId eq 'correlationID'.

NOTE: No other syntax is allowed.
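Because only these patterns are accepted, it can help to generate the string rather than type it by hand. The following Python sketch assembles a $filter value from a time range plus one optional condition. The function name `build_filter` and the second-precision timestamp format are assumptions made for illustration; they are not part of the REST API reference.

```python
from datetime import datetime, timezone
from typing import Optional

# Fields that appear in the documented $filter patterns.
ALLOWED_FIELDS = {"resourceGroupName", "resourceUri", "resourceProvider", "correlationId"}


def build_filter(start: datetime, end: datetime,
                 field: Optional[str] = None, value: Optional[str] = None) -> str:
    """Build a $filter string: a time range plus at most one 'eq' condition."""
    if field is not None and field not in ALLOWED_FIELDS:
        raise ValueError(f"unsupported filter field: {field}")

    def fmt(ts: datetime) -> str:
        # UTC timestamp with a trailing 'Z' (assumption: second precision is enough).
        return ts.astimezone(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ")

    parts = [f"eventTimestamp ge '{fmt(start)}'", f"eventTimestamp le '{fmt(end)}'"]
    if field is not None:
        parts.append(f"{field} eq '{value}'")
    return " and ".join(parts)


if __name__ == "__main__":
    start = datetime(2014, 7, 16, 4, 36, 37, tzinfo=timezone.utc)
    end = datetime(2014, 7, 20, 4, 36, 37, tzinfo=timezone.utc)
    print(build_filter(start, end, "resourceGroupName", "my-resource-group"))
```

Pass timezone-aware datetime values; a naive datetime would be interpreted as local time by `astimezone`.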

$select

Used to fetch events with only the given properties. The $select argument is a comma-separated list of property names to be returned.

Possible values are: authorization, claims, correlationId, description, eventDataId, eventName, eventTimestamp, httpRequest, level, operationId, operationName, properties, resourceGroupName, resourceProviderName, resourceId, status, submissionTimestamp, subStatus, subscriptionId
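As a rough illustration of how $filter and $select fit into the request URL shown earlier, the Python sketch below builds the full GET URL with the standard library only. The helper name `build_activity_log_url`, the `cloud` parameter, and the placeholder subscription ID are hypothetical; the endpoints, path, api-version, and property names are taken from the text above.

```python
from urllib.parse import quote, urlencode

# Management endpoints mentioned in the note above.
ENDPOINTS = {
    "china": "https://management.chinacloudapi.cn",
    "global": "https://management.azure.com",
}

# Property names listed as possible $select values.
SELECTABLE = {
    "authorization", "claims", "correlationId", "description", "eventDataId",
    "eventName", "eventTimestamp", "httpRequest", "level", "operationId",
    "operationName", "properties", "resourceGroupName", "resourceProviderName",
    "resourceId", "status", "submissionTimestamp", "subStatus", "subscriptionId",
}


def build_activity_log_url(subscription_id, filter_expr, select_fields, cloud="china"):
    """Return the GET URL for listing Activity Log events (hypothetical helper)."""
    unknown = [f for f in select_fields if f not in SELECTABLE]
    if unknown:
        raise ValueError(f"unsupported $select properties: {unknown}")
    path = (f"/subscriptions/{quote(subscription_id, safe='')}"
            "/providers/microsoft.insights/eventtypes/management/values")
    query = urlencode(
        {
            "api-version": "2015-04-01",
            "$filter": filter_expr,
            "$select": ",".join(select_fields),
        },
        quote_via=quote,   # encode spaces as %20 instead of '+'
        safe="$,'",        # keep '$', ',' and quotes readable in the URL
    )
    return f"{ENDPOINTS[cloud]}{path}?{query}"


if __name__ == "__main__":
    print(build_activity_log_url(
        "00000000-0000-0000-0000-000000000000",  # placeholder subscription ID
        "eventTimestamp ge '2014-07-16T04:36:37Z'",
        ["eventName", "operationName", "status"],
    ))
```

The sketch only constructs the URL. Sending the request additionally requires an Authorization: Bearer header carrying a valid access token for the management endpoint.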

Question 2: Can JSON files be ingested into Data Lake?

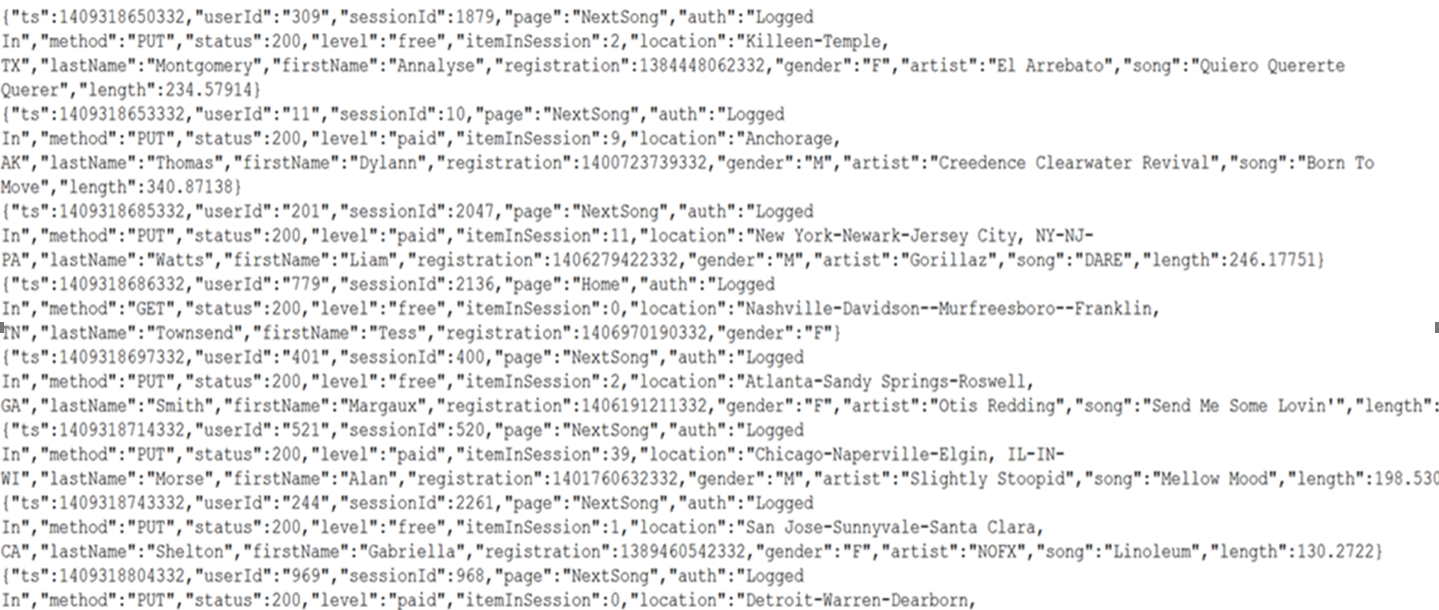

【答】根据官方文档示例,如果使用Databricks进行(SQL查询语句)进行数据分析,是支持Json格式的数据,示例中使用的Json格式如下图所示:

参考使用 Databricks 分析数据一文: https://docs.azure.cn/zh-cn/storage/blobs/data-lake-storage-quickstart-create-databricks-account#ingest-sample-data
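For reference, Spark's JSON reader, which Databricks uses, expects by default one JSON object per line (often called JSON Lines). The Python sketch below serializes a list of records into that layout before upload. It assumes a line-delimited file is the desired target; the function name `to_json_lines` and the sample field names are hypothetical and are not taken from the referenced article.

```python
import json


def to_json_lines(records):
    """Serialize each record as one compact JSON object per line."""
    lines = [
        json.dumps(record, ensure_ascii=False, separators=(",", ":"))
        for record in records
    ]
    # End with a newline when there is content so files can be concatenated safely.
    return "\n".join(lines) + ("\n" if lines else "")


if __name__ == "__main__":
    # Hypothetical sample records, loosely shaped like Activity Log events.
    sample = [
        {"eventName": "BeginRequest", "level": "Informational"},
        {"eventName": "EndRequest", "level": "Informational"},
    ]
    print(to_json_lines(sample), end="")
```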

Question 3: How can a data file be uploaded to Data Lake with C#?

[Answer] First install the Azure.Storage.Files.DataLake NuGet package, then add the following using directives:

    using Azure;
    using Azure.Storage;
    using Azure.Storage.Files.DataLake;
    using Azure.Storage.Files.DataLake.Models;
    using System.IO;
    using System.Threading.Tasks;

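Uploading a file to a directory involves the following steps:

- Create a file reference in the target directory by creating an instance of the DataLakeFileClient class.

- Upload the file by calling the DataLakeFileClient.AppendAsync method.

- Make sure to complete the upload by calling the DataLakeFileClient.FlushAsync method.

Use the following code as a reference: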

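    // Uploads a local file into "my-directory" of the given file system.
    public async Task UploadFile(DataLakeFileSystemClient fileSystemClient)
    {
        DataLakeDirectoryClient directoryClient =
            fileSystemClient.GetDirectoryClient("my-directory");

        // Create the target file and get a client for it.
        DataLakeFileClient fileClient = await directoryClient.CreateFileAsync("uploaded-file.txt");

        // Dispose the local stream once the upload has finished.
        using FileStream fileStream = File.OpenRead("C:\\file-to-upload.txt");
        long fileSize = fileStream.Length;

        // AppendAsync stages the bytes; FlushAsync commits them up to the given position.
        await fileClient.AppendAsync(fileStream, offset: 0);
        await fileClient.FlushAsync(position: fileSize);
    }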

For a complete example, see "Use .NET to manage Azure Data Lake Storage Gen2": https://docs.azure.cn/zh-cn/storage/blobs/data-lake-storage-directory-file-acl-dotnet#upload-a-file-to-a-directory