SOLR Admin Tasks - AtlasOfLivingAustralia/documentation GitHub Wiki

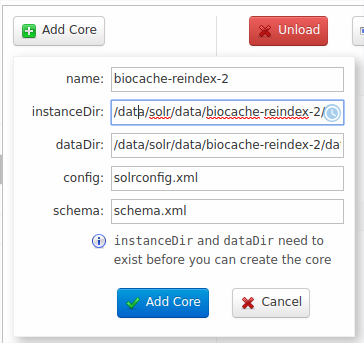

Creating a new SOLR core

Run the following steps on the command line:

# First create the directories for the new core

sudo mkdir -p /data/solr/data/biocache-reindex-2/data

# Copy the previous solrconfig, schema there

sudo cp -a /data/solr/data/biocache/conf/ /data/solr/data/biocache-reindex-2/conf

# Set the right perms

sudo chown -R solr:solr /data/solr/data/biocache-reindex-2/

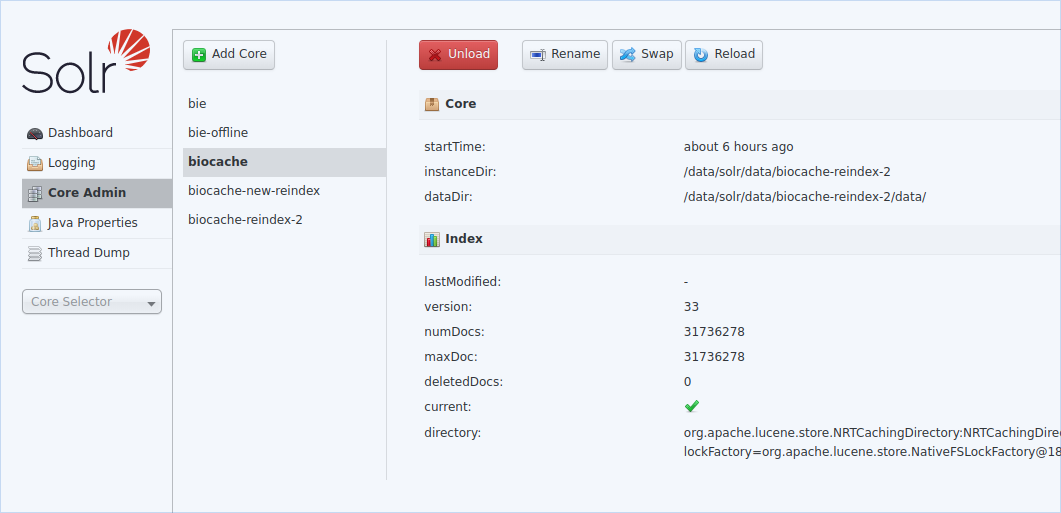

Afterwards, you can add the core through the SOLR admin UI.
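Adding the core can also be scripted against SOLR's CoreAdmin API instead of the UI. The following is a minimal sketch, assuming a standalone (non-cloud) SOLR; the `SOLR_URL` default and the helper name `core_create_url` are assumptions for illustration, not part of the original setup.

```shell
# Assumed base URL; adjust host and port to your installation.
SOLR_URL="${SOLR_URL:-http://localhost:8983/solr}"

# Print the CoreAdmin CREATE URL for a core name and its instance directory.
core_create_url() {
  local name="$1" instance_dir="$2"
  printf '%s/admin/cores?action=CREATE&name=%s&instanceDir=%s\n' \
    "$SOLR_URL" "$name" "$instance_dir"
}

# Usage (uncomment to send the request):
# curl -fsS "$(core_create_url biocache-reindex-2 /data/solr/data/biocache-reindex-2)"
```

The instance directory must already contain the `conf` directory copied in the steps above.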

Full biocache-store indexing

Here we build the index on the local filesystem and then copy it into the SOLR core.

Steps before re-indexing

Remove previous re-index:

echo 'Removing old directories first.'

# Use several rm calls (to prevent "Argument list too long")

rm -rf /data/solr/solr-create/biocache/data0*

rm -rf /data/solr/solr-create/biocache/data1*

rm -rf /data/solr/solr-create/biocache/data*

rm -rf /data/solr/merged_*

echo 'Removing old config so indexing fetches current config from SOLR Cloud'

# This is important when adding new fields, like layers, etc

# https://github.com/AtlasOfLivingAustralia/biocache-store/issues/315

rm -rf /data/solr/biocache/conf

# Also delete this similar directory, to help check where the config is being corrupted

rm -rf /data/solr/solr-create/biocache/conf
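An alternative to splitting the `rm` calls is to let `find` batch the deletions, which avoids expanding a large glob on the command line. This is only a sketch; the helper name `remove_matching` is hypothetical.

```shell
# Delete every entry directly inside DIR whose name matches PATTERN.
# find passes the matches to rm in batches, so the shell never expands the glob.
remove_matching() {
  local dir="$1" pattern="$2"
  [ -d "$dir" ] || return 0   # nothing to do if the directory is absent
  find "$dir" -mindepth 1 -maxdepth 1 -name "$pattern" -exec rm -rf {} +
}

# Usage, with the paths from the steps above:
# remove_matching /data/solr/solr-create/biocache 'data*'
# remove_matching /data/solr 'merged_*'
```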

FIXME: Should biocache-config.properties point to the new core or to the previous one during the local reindex?

solr.home=http://index.gbif.es:8983/solr/biocache

# solr.home=http://index.gbif.es:8983/solr/biocache-reindex-2
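Before starting, it can help to confirm which value is currently active. A small sketch, assuming a plain `key=value` properties file with no spaces around `=`; the helper name `get_prop` and the file path in the usage line are assumptions.

```shell
# Print the value of the last uncommented "key=value" line for KEY in FILE.
get_prop() {
  awk -F= -v k="$2" '
    $1 == k { sub(/^[^=]*=/, ""); v = $0 }   # keep everything after the first "="
    END     { if (v != "") print v }
  ' "$1"
}

# Usage (the path is an assumption; use your actual config location):
# get_prop /data/biocache/config/biocache-config.properties solr.home
```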

Re-index

Now we can start the re-index:

#

# biocache index-local-node options:

#

# -t threads

# -ms, mergesegments, The number of output segments. No merge output is produced when 0.

# -wc, writercount, The number of index writers.

# -wt, writerthreads, The number of threads for each indexing writer. There is 1 writer for each -t.

# -wb, writerbuffer, Size of the indexing write buffer.

# -pt, processthreads, The number of threads for each indexing process. There is 1 process for each -t.

# -pb, processbuffer, Size of the indexing process buffer.

# -r, writerram, Ram allocation for each writer

# -ws, writersegmentsize, Maximum number of occurrences in a writer segment. There is 1 writer for each -t.

# -ps, pagesize, The page size for the records.

# -max, maxrecords, Maximum number of records to index. This is mainly for testing new indexing.

# basic test index

biocache index-local-node -t 4 -max 1000

# With more options, sample from: https://github.com/AtlasOfLivingAustralia/biocache-store/issues/329

biocache index-local-node -t 8 -pt 8 -wc 2 -wt 2 -r 1024 -ps 500 -pb 500 -wb 500 -ws 100000000 -max 50000

# or a Full re-index

biocache index-local-node -t 4 -max -1

You can find detailed information about the reindex tuning options in the code.
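One way to avoid retyping the options is to build the command line from parameters, which also makes it easy to run a small test before a full re-index. This sketch uses only the `-t` and `-max` options listed above; the helper name `reindex_cmd` and the log file name are hypothetical.

```shell
# Print the re-index command for a thread count and a record limit.
# Defaults mirror the full re-index example: 4 threads, no limit (-1).
reindex_cmd() {
  local threads="${1:-4}" max="${2:--1}"
  printf 'biocache index-local-node -t %s -max %s\n' "$threads" "$max"
}

# Usage: try a small sample first, then run the full re-index detached with a log.
# $(reindex_cmd 4 1000)
# nohup $(reindex_cmd 4 -1) > reindex.log 2>&1 &
```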

Manual copy

The index generated in the previous step needs to be copied manually into the new core, in this example on a remote SOLR server:

rsync --delete -aH --rsync-path "sudo rsync" /data/solr/merged_0/ 172.16.16.200:/data/solr/data/biocache-reindex-2/data/index/

ssh 172.16.16.200 "sudo chown -R solr:solr /data/solr/data/biocache-reindex-2/data/index/"

...or into local SOLR:

sudo rm -rf /data/solr/data/biocache-reindex-2/data/index

sudo cp -r /data/solr/merged_0 /data/solr/data/biocache-reindex-2/data/index

sudo chown -R solr:solr /data/solr/data/biocache-reindex-2/data/index

When complete you should see files with names like _13l.cfe, _13l.cfs, _13l.si in /data/solr/data/biocache-reindex-2/data/index.
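That check can be scripted by counting the segment-info (`.si`) files in the index directory. A minimal sketch; the helper name `count_segment_files` is hypothetical.

```shell
# Count the *.si files directly inside an index directory.
# Prints 0 and returns non-zero if the directory does not exist.
count_segment_files() {
  local index_dir="$1"
  [ -d "$index_dir" ] || { echo 0; return 1; }
  find "$index_dir" -maxdepth 1 -type f -name '*.si' | wc -l | tr -d ' '
}

# Usage:
# n=$(count_segment_files /data/solr/data/biocache-reindex-2/data/index)
# [ "$n" -gt 0 ] || echo "No segment files found; the copy may have failed" >&2
```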

Take into account new fields in the schema

When you have added fields (such as new spatial layers), some extra steps are needed to update the schema so that the new fields appear in the index:

cd /data/solr/data/biocache-reindex-2/conf

# copy the generated schema in the reindex in the conf directory

cp ../data/index/conf/schema.xml schema.xml

# remove the managed-schema so schema.xml is used after core reload.

rm managed-schema

Then reload the core so that the new (layer) fields work.
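The reload can be triggered from the command line through the CoreAdmin API as well as from the UI. A sketch, assuming a standalone SOLR; the `SOLR_URL` default and the helper name `core_reload_url` are assumptions.

```shell
# Assumed base URL; adjust host and port to your installation.
SOLR_URL="${SOLR_URL:-http://localhost:8983/solr}"

# Print the CoreAdmin RELOAD URL for a core.
core_reload_url() {
  printf '%s/admin/cores?action=RELOAD&core=%s\n' "$SOLR_URL" "$1"
}

# Usage (uncomment to send the request):
# curl -fsS "$(core_reload_url biocache-reindex-2)"
```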

Swapping cores

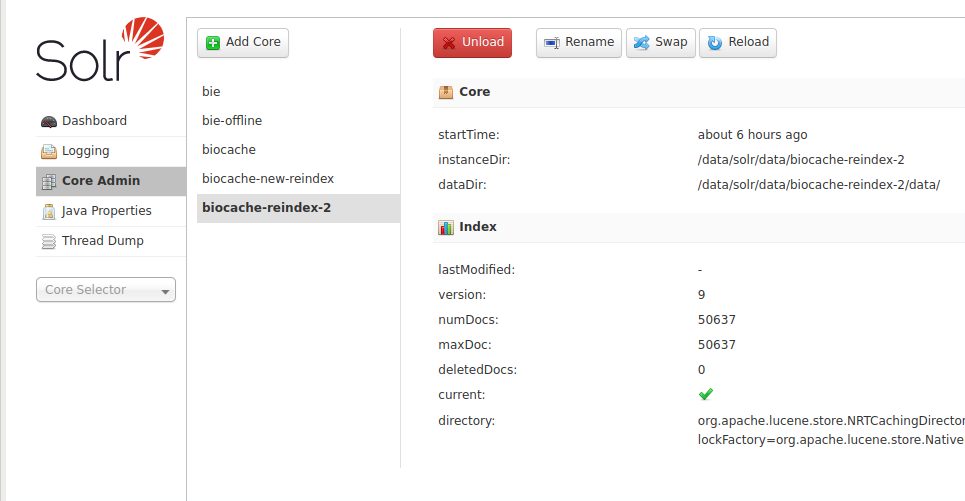

Once your data is copied, "Reload" the core so that it reads the new index.

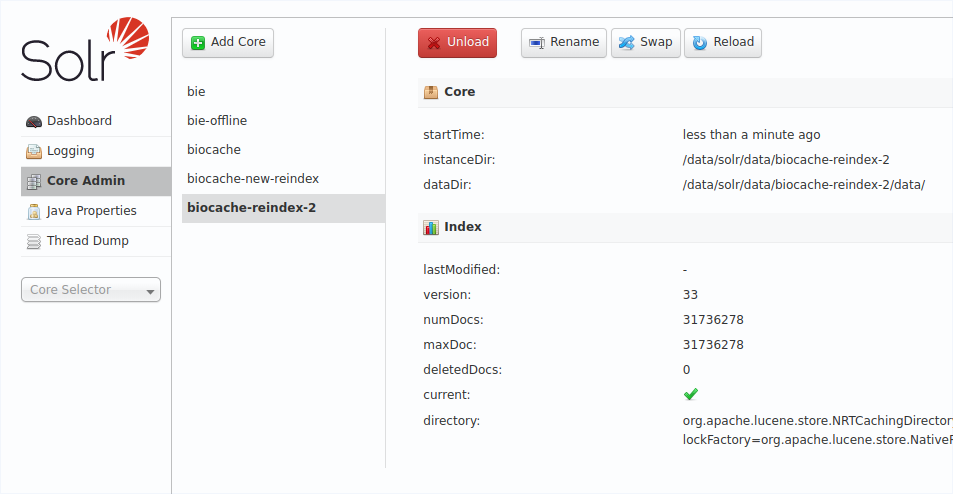

See the difference in the number of docs:

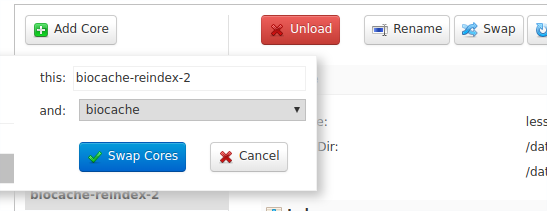
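The document counts can also be compared from the command line by querying each core with `q=*:*&rows=0` and reading `numFound` from the JSON response. A rough sketch; the helper name `num_found` and the host in the usage lines are assumptions, and a real JSON parser would be more robust than `sed`.

```shell
# Extract the numFound value from a SOLR JSON response read on stdin.
num_found() {
  sed -n 's/.*"numFound":[[:space:]]*\([0-9][0-9]*\).*/\1/p' | head -n 1
}

# Usage (assumed host; core names from this page):
# curl -fsS 'http://localhost:8983/solr/biocache/select?q=*:*&rows=0&wt=json' | num_found
# curl -fsS 'http://localhost:8983/solr/biocache-reindex-2/select?q=*:*&rows=0&wt=json' | num_found
```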

You can run some queries in the SOLR UI to check that everything is OK and, when ready, swap the cores to put the new core into production.

After swapping, biocache queries will use your new index.
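The swap itself can also be issued through the CoreAdmin API. A minimal sketch, assuming a standalone SOLR and the core names used on this page; the `SOLR_URL` default and the helper name `core_swap_url` are assumptions.

```shell
# Assumed base URL; adjust host and port to your installation.
SOLR_URL="${SOLR_URL:-http://localhost:8983/solr}"

# Print the CoreAdmin SWAP URL that exchanges the names of two cores.
core_swap_url() {
  local live="$1" other="$2"
  printf '%s/admin/cores?action=SWAP&core=%s&other=%s\n' "$SOLR_URL" "$live" "$other"
}

# Usage (uncomment to send the request):
# curl -fsS "$(core_swap_url biocache biocache-reindex-2)"
```

Because SWAP exchanges the two names, the previous index stays reachable under the other core name, so running the same request again should swap back.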

Core swapping is also useful for putting the BIE offline index into production after a reindex.